Most Reliable Citation Analysis for AI Search Engines

Discover the most reliable citation analysis for AI search engines. Enhance accuracy, improve ranking, and build trust in your AI models for 2026.

Subtitle: A research standard for separating signal from noise in AI visibility measurement

Date: April 28, 2026

Chapter 2

The counterintuitive truth is simple. More citations do not automatically produce more reliable measurement.

A large review of 40,000 AI search engine responses found that Perplexity averages 6.61 citations per answer, Google Gemini 6.1, and ChatGPT 2.62, which means any team using a single-engine snapshot is observing platform behavior, not market reality, according to this analysis of AI source selection patterns. The reliability problem starts there. Citation counts vary by engine before a brand has even changed a page.

Organizations often still evaluate AI visibility with SEO habits. They pull one prompt, one answer, one day, and one dashboard. That approach fails because AI search is probabilistic, retrieval-heavy, and unstable at the response layer. Brands think they're measuring performance. In practice, they're often measuring output variance.

That is why the most reliable citation analysis for ai search engines isn't a feature checklist. It's a methodology question. Teams need repeatable execution, evidence preservation, and cross-engine comparison. For readers looking for a practical companion on the business effects, Surnex has a useful explainer on how AI search impacts citations that helps frame why this issue now belongs in core visibility reporting.

Table of Contents

Executive Summary The Citation Reliability Crisis

Reliability fails when teams measure snapshots

The market is too fragmented for single engine reporting

A New Framework for Citation Reliability

Evidence Clusters define whether a citation can be trusted

Semantic Density determines whether a page is easy to retrieve

Cross-Engine Stability is the governing metric

Comparing Citation Analysis Methodologies

Method matters more than dashboard design

Comparison of Citation Analysis Methodologies

Why headless browser evidence wins

Why Fan-Out Queries Redefine Citation Opportunity

AI engines decompose prompts before they cite

Fan-out breadth changes what visibility means

Tactical Implementation for Enterprise GEO

Enterprise programs need a controlled testing loop

Intent changes source eligibility

Operationalize citation analysis into workflow

Measurement Governance and The Future of Truth

Citation analysis now belongs in governance

Truth programs need independent verification

The strategic conclusion is straightforward

Executive Summary The Citation Reliability Crisis

Reliable citation analysis starts by rejecting the dashboard illusion. AI answers are variable outputs, so single-run reporting doesn't measure durable visibility.

The problem isn't that marketers lack tools. The problem is that most tools inherit assumptions from rank tracking. Traditional SEO platforms were built to observe stable positions on a page. AI systems generate responses from retrieval, synthesis, and model preference layers that shift by prompt wording, model, and context. That means the same brand can appear authoritative in one run and disappear in the next without any underlying change in market position.

Reliability fails when teams measure snapshots

A citation metric becomes useful only when it survives repetition. The source analysis cited above compared 40,000 responses and showed large differences in average citation volume between Perplexity, Gemini, and ChatGPT, which is exactly why reliability can't be inferred from one engine or one run of a prompt. A stable metric has to account for variance, preserve the full answer, and make it possible to recheck what the system produced.

Practical rule: If a platform can't show the exact answer and cited URLs that a user saw, it hasn't produced evidence. It has produced an interpretation.

That distinction matters because citation reporting now influences budget, content direction, PR targeting, and executive perception of AI readiness. Once a board or CMO starts treating an unstable metric as truth, the organization begins optimizing toward noise.

The market is too fragmented for single engine reporting

Perplexity, Gemini, and ChatGPT don't cite in the same way, and they don't expose source behavior in the same pattern. A brand that looks strong in Perplexity can remain weak in ChatGPT. A domain that dominates one answer type can disappear when the model shifts source preference. Reliability therefore requires comparison across engines, not confidence within one product.

A strong program evaluates at least three layers:

Prompt repeatability: The same prompt must be sampled repeatedly to see whether a citation recurs.

Engine diversity: ChatGPT, Gemini, and Perplexity must be treated as separate retrieval ecosystems.

Evidence retention: Teams need the answer text, source links, and timing preserved for later review.

The executive implication is blunt. Citation measurement is no longer a reporting accessory. It's an evidence system for AI perception.

Without that standard, brands aren't validating visibility. They're narrating it.

,

For readers who need the foundational chapter on first principles before operational rollout, return to Chapter 1 through the broader research library. Teams that want a formal assessment of citation reliability in their own category can book a call with Algomizer at algomizer.com.

A New Framework for Citation Reliability

Reliable citation analysis requires engineering criteria, not marketing claims. The strongest programs judge evidence quality, retrieval fit, and consistency across model environments.

Most vendors describe coverage. Few define reliability. That gap is why a better standard needs first-principles language. Three terms make the problem measurable: Evidence Clusters, Semantic Density, and Cross-Engine Stability.

A deeper technical companion to this model appears in Algomizer's work on engineering truth through a technical GEO framework.

Evidence Clusters define whether a citation can be trusted

AI systems don't retrieve pages as isolated objects. They infer confidence through groups of corroborating sources, overlapping entities, and answer-ready passages. An Evidence Cluster is the surrounding source environment that makes one citation repeatable enough to trust.

In practice, teams should ask:

Does the cited page sit inside a corroborated topic cluster? A lone page with weak surrounding support is fragile.

Do multiple authoritative domains reinforce the same fact pattern? If they do, the citation has structural support.

Can the answer be re-observed in a later run? If not, the initial citation may have been incidental.

A tool that reports one cited URL without the surrounding source context is missing the mechanism that produced the citation.

Semantic Density determines whether a page is easy to retrieve

A page becomes citation-eligible when it compresses verifiable information into extractable form. That is Semantic Density. It isn't a writing style preference. It's a retrieval condition.

High-density pages usually do three things well. They answer specific questions directly, they maintain clear entity relationships, and they package facts in a way the model can lift without reconstruction. Low-density pages force the model to infer too much, which increases retrieval risk and reduces citation probability.

Citation reliability improves when content reduces model effort. AI systems prefer pages that are easy to verify, easy to segment, and easy to restate.

Cross-Engine Stability is the governing metric

The most useful metric is not total citations. It is whether a source persists across engines and reruns. Cross-Engine Stability measures that persistence qualitatively. If a page appears in one product only, and only intermittently, it may still matter tactically. But it should not be mistaken for durable authority.

Many reporting systems fail in a key respect. They aggregate mention volume while hiding instability. A more rigorous standard asks whether the same source survives changes in model, region, and prompt formulation. That is the threshold between observation and evidence.

The framework changes what teams should buy and what they should ignore. Reliability isn't a prettier dashboard. It's proof that the citation happened under conditions that matter.

,

Readers comparing framework design with implementation should revisit Chapter 1 for the broader context. Organizations that want this standard applied to their own prompt library can book a call with Algomizer at algomizer.com.

Comparing Citation Analysis Methodologies

The method used to capture AI answers determines whether citation data is defensible. Interface polish matters less than evidentiary fidelity.

Most vendors present methodology as a technical detail. It isn't. It is the difference between observed output and inferred output. That matters especially on Perplexity, where source-attributed links are abundant, and on ChatGPT, where inline mentions can be thinner and harder to verify. Benchmark data summarized by Omnia notes that Perplexity averages 5-8 citations per response while ChatGPT shows 2-4 inline mentions, making Perplexity particularly useful for visibility tracking through its overview of citation analysis options.

Method matters more than dashboard design

Three collection methods dominate the market. API-based tracking pulls model-accessible outputs directly. Public-facing scraping reads rendered interfaces or public result layers. Headless browser emulation reproduces what an actual user sees in the product environment.

The trade-off isn't subtle. API collection is efficient but can diverge from the live user interface. Public scraping captures some surface evidence but often misses session context and interaction effects. Headless browser collection is heavier operationally, but it preserves the generated answer as experienced by the user.

For teams that still think backlink tooling logic can be repurposed, NameSnag's review of backlink analysis tools is a helpful contrast. Backlink systems inspect a relatively stable web graph. Citation systems must inspect unstable generated outputs.

Comparison of Citation Analysis Methodologies

Methodology | Verifiability | Cross-Engine Stability | Cost & Scalability | Best For |

|---|---|---|---|---|

API-based tracking | Moderate. Fast, but may reflect filtered or non-UI output | Moderate when APIs are consistent, weaker when products diverge from the live interface | Strong scalability, lower operational burden | Early monitoring, broad directional reporting |

Public-facing search scraping | Limited. Often captures result surfaces rather than full answer states | Limited, because rendered layers can change and personalization can distort repeatability | Moderate cost, uneven maintenance burden | Lightweight checks, competitive observation |

Headless browser emulation | High. Captures the actual response environment and source evidence | High, because the same execution logic can be applied across engines and reruns | Higher operational complexity, stronger evidence quality | Enterprise measurement, auditability, governance |

Why headless browser evidence wins

The strongest methodology is the one that preserves what users saw. That is why headless browser emulation remains the most defensible approach for enterprise reporting. It creates inspectable evidence, supports replayable workflows, and limits the hidden filtering that often appears in lighter methods.

A citation report becomes credible when legal, PR, and search teams can all inspect the same captured answer and agree on what happened.

This also explains why simplistic vendor comparisons are often misleading. Tool lists compare features. Reliability comparisons judge method. Those are not the same exercise.

,

Readers wanting the first-principles lens behind this comparison should return to Chapter 1. Teams that need independently verifiable citation capture across live environments can book a call with Algomizer at algomizer.com.

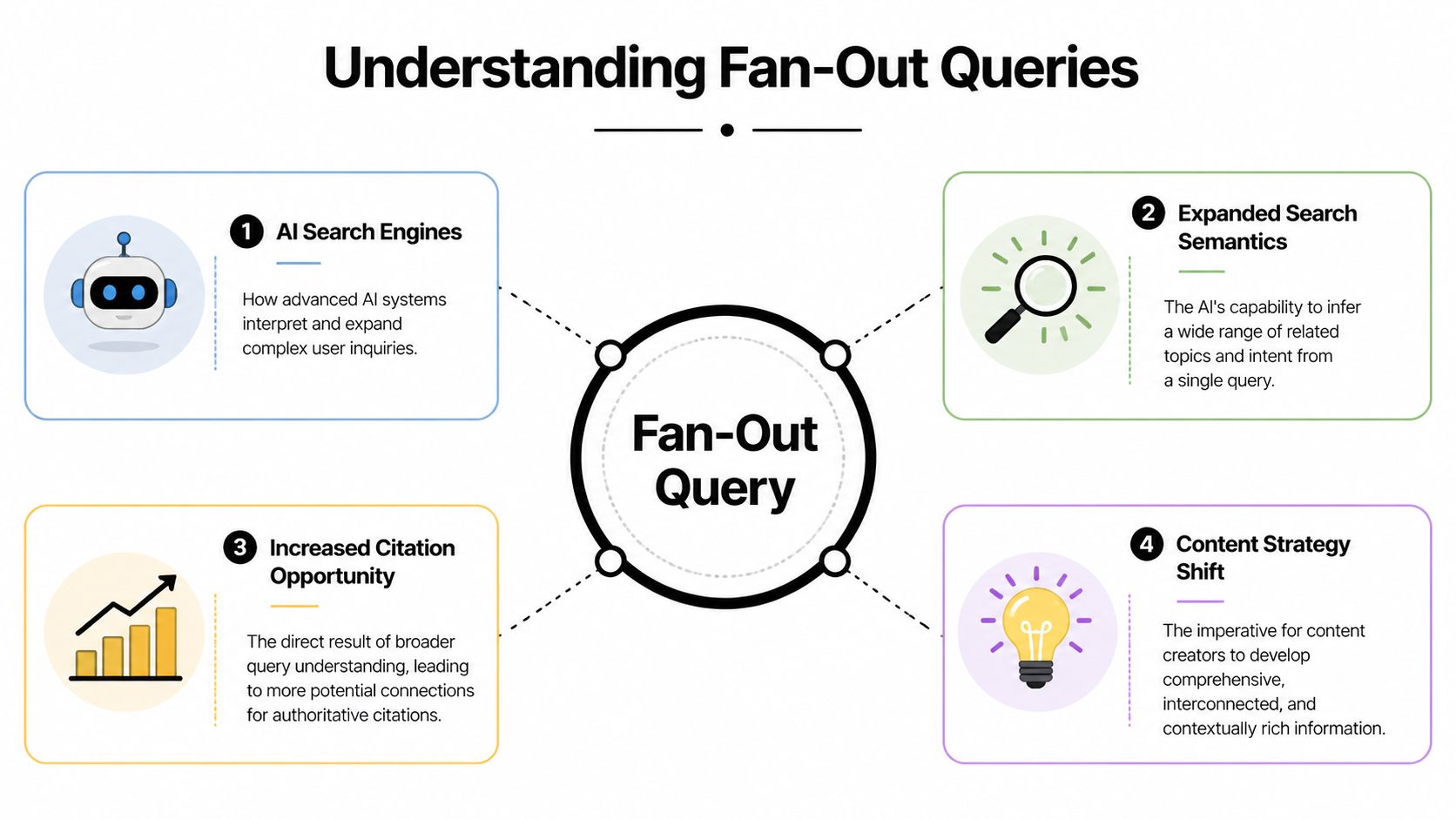

Why Fan-Out Queries Redefine Citation Opportunity

Fan-out queries change the unit of analysis. AI engines don't just answer the visible prompt. They expand it into hidden retrieval paths.

The practical consequence is severe. A page can lose citations even when it performs well for the apparent head term, because the engine sourced supporting evidence from adjacent semantic branches instead.

AI engines decompose prompts before they cite

Search Engine Land reported that an analysis of 10,000 keywords found pages ranking across Google AI Overview fan-out queries were 161% more likely to be cited than pages ranking only for the main query, and those pages accounted for 51% of all AI Overview citations, according to its reporting on fan-out query citation dynamics.

That statistic rewrites the optimization brief. Citation opportunity doesn't sit at the head keyword alone. It sits in the model's decomposition layer, where the system breaks a broad user request into narrower evidence needs.

Readers who want the mechanics of answer-engine optimization at the system level can pair this with Algomizer's explainer on how GEO works.

Fan-out breadth changes what visibility means

A reliable citation analysis program therefore has to monitor semantic breadth, not just prompt outcomes. The useful question becomes: which supporting subtopics does the engine appear to retrieve when constructing an answer?

That changes tactical judgment in at least three ways:

Primary pages aren't enough: A category page may rank well and still lose citations to supporting documents elsewhere.

Topic clusters beat isolated assets: Supporting explainers, comparisons, and entity pages can create the retrieval paths a model uses.

SERP intuition breaks down: Citation opportunity often lives outside the obvious ranking surface.

The hidden query path is often more valuable than the visible prompt, because it reveals what the model needed in order to trust the answer.

Shallow reporting often overlooks the underlying mechanism. A dashboard may show that a competitor was cited. Only a fan-out analysis explains why.

,

For teams mapping semantic coverage before production rollout, Chapter 1 remains the right precursor. Brands that want a fan-out driven GEO assessment can book a call with Algomizer at algomizer.com.

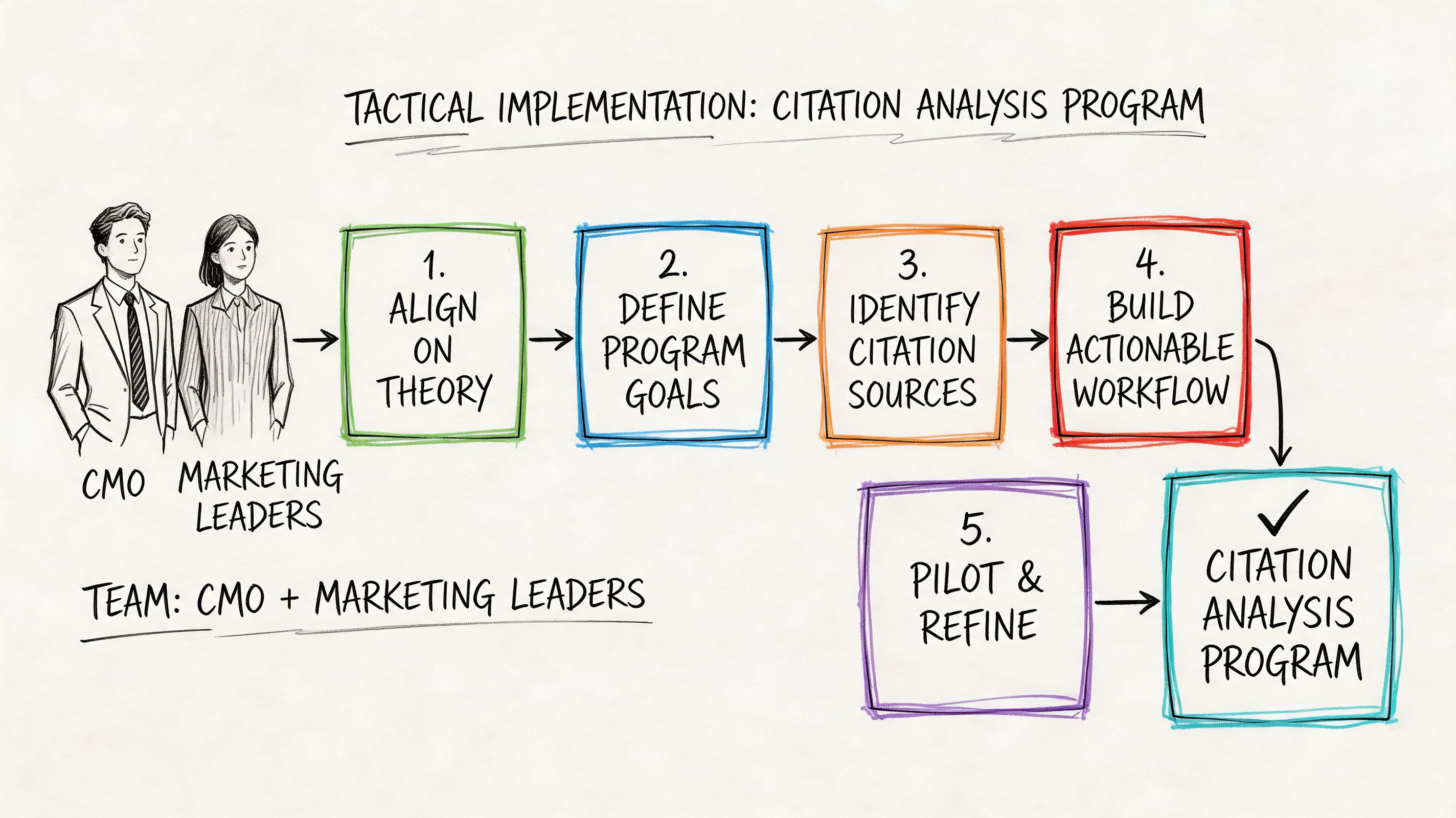

Tactical Implementation for Enterprise GEO

Enterprise citation programs work when testing, content engineering, and source strategy operate as one system. Measurement alone doesn't move visibility.

Many teams fail because they treat AI citation analysis like reporting software. It behaves more like a lab process. The organization needs controlled prompt libraries, repeat runs, and a content response loop tied to observed source behavior.

Enterprise programs need a controlled testing loop

A reliable program starts with repeated execution of the same prompts. The available evidence on commercial AI citation tracking emphasizes running prompts multiple times to control for response variability, and that practice should be treated as baseline discipline rather than optional rigor.

A workable enterprise loop usually includes:

Prompt library control: Segment prompts by research, comparison, and decision intent.

Rerun discipline: Use repeated prompt execution rather than single snapshots.

Evidence capture: Preserve answer text, cited URLs, and timestamped runs.

Competitor gap review: Track which domains appear when the brand does not.

Action routing: Hand insights to content, PR, and technical owners.

This is also where teams should stop expecting one content type to win everywhere.

Intent changes source eligibility

Search Engine Land's analysis of 8,000 citations found that Gemini draws broadly from blogs/news greater than 65%, while Perplexity curates experts with reviews at 9%, and B2B queries are 40% more likely to cite comparison portals, according to its analysis of engine preferences across 8,000 AI citations.

That means enterprise GEO needs intent-specific asset planning. B2B comparison prompts often need inclusion in portals, category lists, and evaluative pages. Broader educational prompts may reward editorial coverage and structured explainers. Teams that publish one generic “ultimate guide” and expect universal citation coverage are building for search-era habits, not LLM retrieval behavior.

Operationalize citation analysis into workflow

The workflow only matters if it changes production. That means connecting measurement to publishing calendars, third-party placement strategy, and structured content updates.

A practical operating pattern looks like this:

Content teams revise owned pages for clearer entity framing and answer extraction.

PR teams pursue placement on domains that repeatedly appear in model outputs.

SEO teams map source gaps by query type rather than by keyword alone.

Leadership teams evaluate whether visibility is improving in the prompts tied to pipeline.

A short visual walkthrough helps illustrate how this process should run inside enterprise teams.

The key shift is operational. Citation analysis becomes valuable only when it drives where the company publishes, what claims it packages, and which outside sources it targets.

,

Teams that need the conceptual groundwork before workflow design should revisit Chapter 1. Enterprises ready to build a managed GEO operating loop can book a call with Algomizer at algomizer.com.

Measurement Governance and The Future of Truth

Citation analysis has moved beyond channel analytics. It now shapes how AI systems represent a company to buyers, journalists, and decision-makers.

That is a governance issue because AI systems don't merely surface facts. They compress them, prioritize them, and restate them in persuasive language. If a brand's source layer is weak, the model will fill the gap with whatever evidence cluster it trusts more.

Citation analysis now belongs in governance

The strongest current business framing is qualitative and operational. Mid-market and enterprise teams using headless-browser measurement and outcomes-based systems report meaningful gains in AI visibility and sales efficiency, including outcomes such as 1500% increases in AI mentions and 31% shorter sales cycles, as described in Search Engine Land's coverage of enterprise-oriented measurement approaches. The exact lesson isn't that every brand will replicate those results. It's that governing AI visibility now affects revenue motion, not just reporting.

Teams exploring adjacent infrastructure can also review Sellm's overview of tracking solutions for AI search, which is useful for understanding the implementation choices around collection and monitoring.

Truth programs need independent verification

A mature enterprise program should create one internal source of truth for AI visibility. That program needs clear ownership, preserved evidence, and verification standards that can be audited outside the marketing team.

A sound governance model includes:

Independent evidence review: Raw answer captures should be inspectable by more than one function.

Version awareness: Model updates can shift citation behavior without warning.

Claim control: Teams should monitor whether AI systems repeat the claims the brand wants associated with it.

Authority strategy: Source development should prioritize where models already look for proof.

The authority layer is especially important. Algomizer's perspective on the weight of authority in GEO aligns with this governance view. Models don't reward brand intention. They reward externally supported truth signals.

A company's AI visibility program becomes strategic when leadership treats citations as representations of corporate truth, not as vanity metrics.

The strategic conclusion is straightforward

The old reporting question was, “Did the brand appear?” The new governance question is, “What truth did the model assemble about the brand, and can the company verify it?”

That shift changes ownership. Marketing still executes much of the work, but the consequences now touch legal review, PR, investor narrative, sales enablement, and executive reputation. The organizations that adapt first will not merely earn more mentions. They will shape the evidence that advanced AI systems reuse when summarizing their market position.

Reliable citation analysis is therefore not a niche practice. It is the measurement layer for machine-mediated reputation.

Algomizer helps brands turn that measurement layer into an operating system for AI visibility. The team handles prompt discovery, citation tracking, content engineering, media placement, and ongoing calibration across major AI platforms, using independently verifiable methods built for enterprise reporting. Brands that want a complimentary visibility assessment or a deeper review of their AI citation posture can book a call with Algomizer.