How to Generate Real Estate Leads Online: An AI-First Plan

Learn how to generate real estate leads online with an AI-first strategy. Our guide covers GEO, content engineering, and automation for the new era of search.

The standard playbook for how to generate real estate leads online is now economically inefficient. More social posts create more surface area, and more paid clicks can still create short bursts of traffic, but neither tactic is designed for the systems that increasingly mediate discovery. Buyers now ask AI systems for agent recommendations, neighborhood context, pricing guidance, and next steps. The first competition is no longer a ranked list of links. It is inclusion inside a synthesized answer.

That shift changes what content must do. A real estate site is no longer judged only by keyword targeting or ad budget. It is judged by whether its pages contain enough verifiable, well-structured evidence for retrieval systems to extract, compare, and cite. Our firm refers to that unit as an Evidence Cluster. It is a connected body of neighborhood pages, market explainers, transaction proof, agent expertise, and conversion assets engineered for machine trust.

This is the core premise behind modern LLMO, or large language model optimization. In real estate, visibility now depends less on publishing isolated tips and more on building source material that answer engines can reuse without ambiguity.

The commercial implication is straightforward. Teams that keep renting attention through feeds and ads remain exposed to rising acquisition costs and weak recall. Teams that publish citation-ready evidence can turn generative AI into a recurring discovery channel, then convert that visibility through structured intake. For teams focused on the handoff from discovery to inquiry, Orbit AI for real estate agents provides a useful reference for modern lead capture workflows.

This article examines the architectural reason this shift is happening, the content framework that fits it, and the economic model that makes it superior to the older lead generation stack.

Table of Contents

Executive Summary: A New Playbook for a New Internet

The old playbook buys exposure. It rarely earns citation.

The Evidence Cluster is the new unit of visibility

The Architectural Shift from Search Engine to Answer Engine

RAG changes retrieval from ranking pages to assembling evidence

Why a lower-authority site can still win citations

Discovery is now an authority engineering problem

The Evidence Cluster: Our Framework for AI Dominance

A neighborhood cluster is the highest-leverage build

Structure determines whether AI can trust the page

The cluster must convert after it earns visibility

An Economic Analysis of Lead Sources: Old vs New

Tactical Deployment: Automation and Engagement

Deployment speed determines whether AI visibility becomes a lead

The correct automation model starts with page-level intent

Qualification scripts should reduce uncertainty

Automation should route by evidence, not by convenience

Reframing Success Measurement in the AI Era

Traffic is no longer the primary score

Three KPIs matter more than vanity metrics

Executive Summary: A New Playbook for a New Internet

Real estate marketing advice still overweights channels that rent attention. Posting schedules, portal lead packages, and PPC campaigns can produce activity, but they do not give a brokerage durable presence inside AI-generated answers. The internet has shifted faster than the playbook.

Buyers now ask systems for synthesized recommendations, not just lists of links. That change alters lead generation at the retrieval layer. A brokerage is no longer competing only for a click. It is competing to become one of the sources an answer engine selects, trusts, and cites.

That distinction defines the new operating model. Firms that still treat content as promotional output will keep paying for visibility one impression at a time. Firms that engineer evidence will accumulate visibility that compounds across prompts, topics, and local intents.

The old playbook buys exposure. It rarely earns citation.

The standard stack is easy to recognize. A team buys portal leads, runs paid search, posts listing graphics, and publishes broad blog posts designed around generic keywords. Those tactics can create traffic spikes and sporadic inquiries. They do not reliably place the brokerage inside an AI response to a high-intent query about a neighborhood, school commute, pricing trend, or agent choice.

Online discovery now dominates the beginning of the home search process. The implication is straightforward. Distribution alone is no longer the bottleneck. Trustworthy retrieval is.

This is the core principle behind LLMO. AI systems do not reward the loudest publisher. They reward the clearest, most corroborated, and most extractable source material.

Practical rule: In AI search, unsupported claims behave like advertising. Claims surrounded by structured proof behave like evidence.

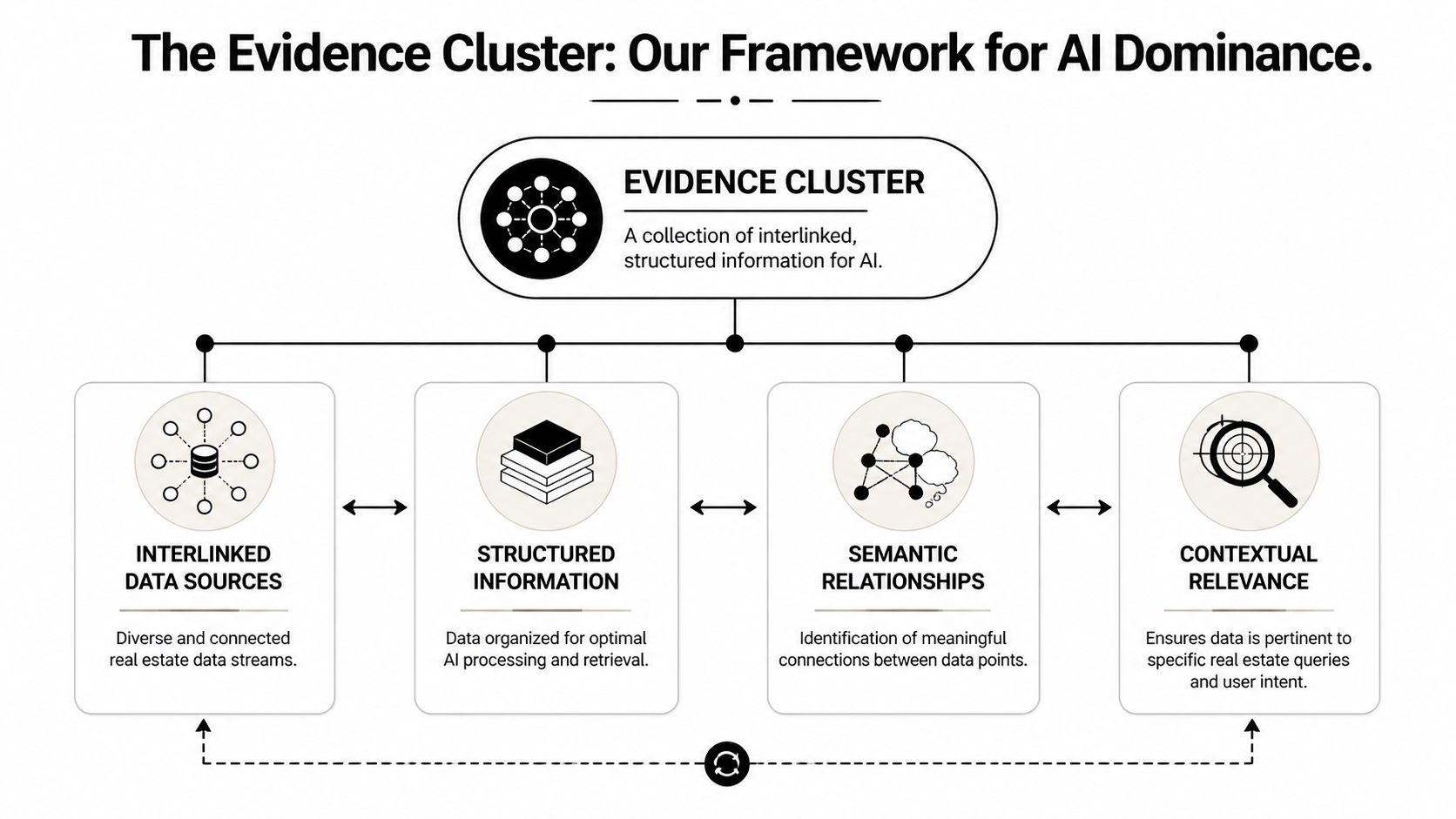

The Evidence Cluster is the new unit of visibility

A single page is too brittle for answer engines. The stronger asset is a connected body of proof around one topic and one geography. In real estate, that usually means a neighborhood guide, local market commentary, agent expertise tied to that area, listing media, FAQs, and lead capture infrastructure that all reinforce the same entity relationships.

Our research team refers to this structure as an Evidence Cluster. The term matters because it changes execution. Content planning no longer starts with keyword coverage. It starts with a harder question: what set of facts, media, references, and page relationships gives a model enough confidence to reuse your material in an answer?

That shift produces three operational consequences:

Authority becomes compositional: visibility rises when pages, schema, media, and local entities support each other around one market segment.

Content quality becomes structural: strong prose helps, but retrieval systems depend more on consistency, formatting, and corroboration.

Lead generation becomes downstream of answer inclusion: if the brand is absent from the answer layer, conversion starts after the highest-intent moment has passed.

Citation without capture still wastes demand. That is why the evidence layer and the conversion layer have to be built together.

The result is a different definition of online lead generation. The objective is not more content, more posting, or more ad spend. The objective is to publish evidence that answer engines can ingest, verify, and route toward conversion.

The Architectural Shift from Search Engine to Answer Engine

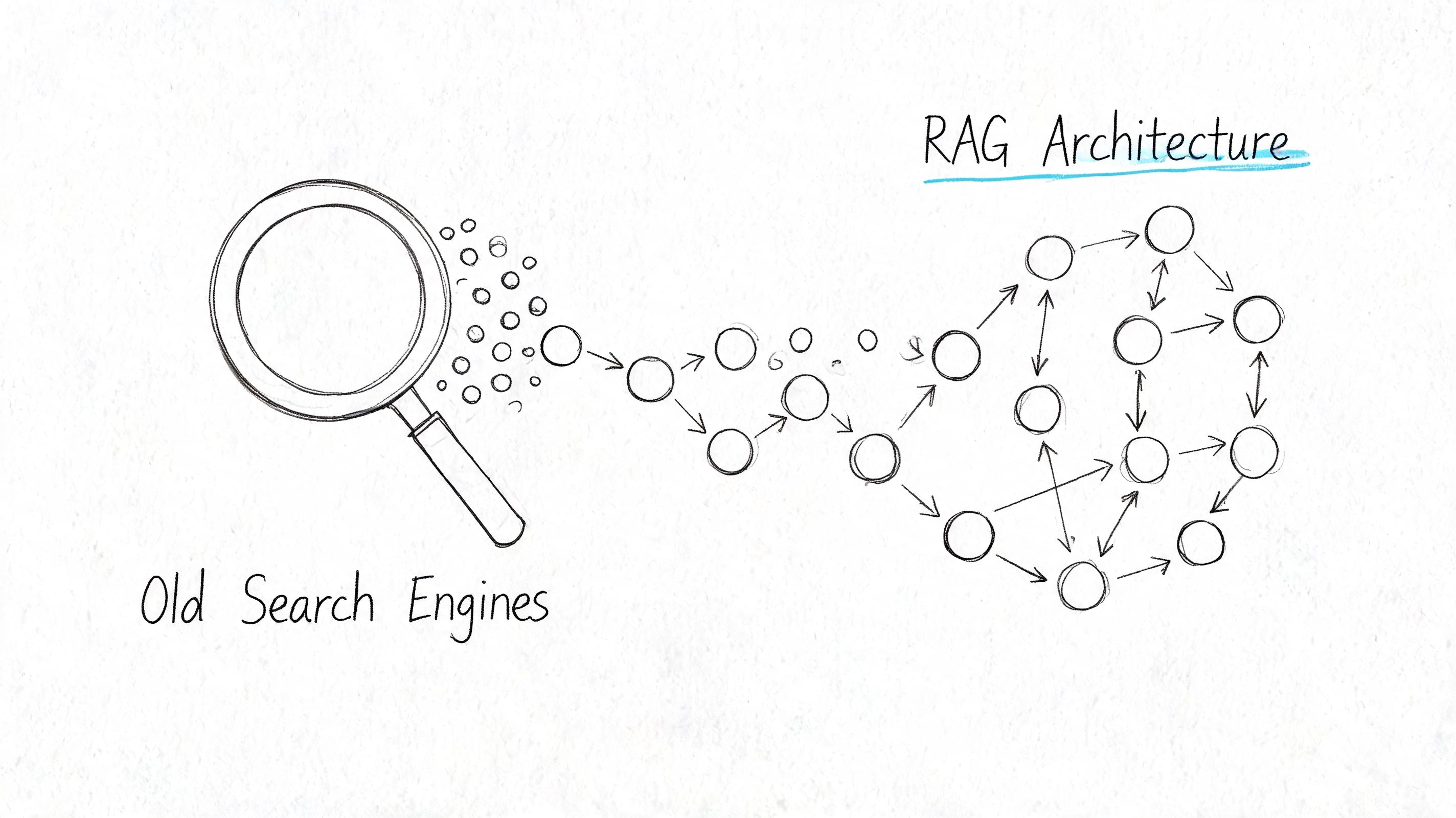

RAG changes retrieval from ranking pages to assembling evidence

AI answer systems don't behave like a static results page. They pull documents, passages, and entities into a temporary working set, then compose a response from the most usable fragments. That means the retrieval target isn't merely "a page that ranks." It is "a chunk of evidence that survives extraction."

A conventional search engine primarily returns links and snippets. A retrieval-augmented generation (RAG) system does more. It identifies relevant passages, weighs semantic alignment, checks whether the passage resolves the user's intent, and then synthesizes a direct answer. That shift is why the distinction between SEO, AEO (answer engine optimization), and GEO (generative engine optimization) is no longer theoretical for real estate marketers. It is an operational one.

For real estate, that architecture rewards content with four properties:

Discrete factual units that can stand alone when extracted.

Clear entity relationships between neighborhood, property type, agent, and buyer intent.

Structured formatting that helps the model isolate definitions, comparisons, and answers.

Corroboration across assets so the system encounters the same truth in multiple places.

Why a lower-authority site can still win citations

Many teams assume a large portal or a high-authority local site will always dominate AI results. That isn't how retrieval works in practice. A smaller site can win when it presents cleaner evidence on a narrower topic.

A generic city page usually underperforms a tightly built neighborhood guide because the latter offers more specific retrieval material. If a buyer asks, "Which neighborhood fits a family relocating for shorter commutes and stronger schools?" the system needs passages that connect lifestyle, commute logic, and local context. Pages optimized only for a broad head term rarely do that.
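The point can be illustrated with a deliberately simplified retrieval sketch. Production answer engines use learned embeddings and far richer signals; the word-overlap similarity below is only a stand-in for that scoring, and the two passages and their names are hypothetical examples written for this illustration.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> Counter:
    """Lowercase word counts. A toy stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank(query: str, passages: dict) -> list:
    """Return passage names ordered from most to least similar to the query."""
    q = tokens(query)
    return sorted(passages, key=lambda name: cosine(q, tokens(passages[name])), reverse=True)


# Hypothetical passages: one broad city page, one narrow neighborhood guide.
passages = {
    "generic-city-page": (
        "Our city has great homes, great agents, and a great lifestyle. "
        "Contact us today to buy or sell."
    ),
    "neighborhood-guide": (
        "Families relocating to this neighborhood get a shorter commute downtown "
        "and stronger schools within walking distance."
    ),
}

query = "Which neighborhood fits a family relocating for shorter commutes and stronger schools?"
print(rank(query, passages))
```

Even with this crude scoring, the narrow passage ranks first because it shares the query's specific terms (relocating, neighborhood, shorter, stronger, schools), while the generic page overlaps only on filler words.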

AI systems reward answerable structure. They don't reward publishing effort by itself.

That is why many 2024-era SEO articles disappear inside answer engines. They were written to signal relevance to a ranking system, not to provide modular evidence to a synthesis system. Headings were broad. Claims were unstructured. Context lived in long paragraphs rather than extractable units.

Discovery is now an authority engineering problem

The practical consequence is severe. Real estate discovery has shifted from keyword matching to trust assembly. A brokerage has to engineer content so that the model can confidently include it without introducing ambiguity.

That changes editorial decisions:

Pages need narrower scopes: One page should solve one intent cluster cleanly.

Media needs context: A video tour is more useful when tied to location, buyer type, and property characteristics.

Internal links need meaning: Linking a neighborhood guide to active listings, FAQs, and valuation pages creates a graph, not just navigation.

This is the hidden reason so many teams struggle to generate real estate leads online from organic channels. Their optimization efforts still target a browser session, while buyers increasingly begin with a generated answer.

The Evidence Cluster: Our Framework for AI Dominance

A neighborhood cluster is the highest-leverage build

The most productive place to start is a neighborhood. It gives the brokerage a stable topic, recurring buyer intent, and enough local variables to demonstrate real expertise. A single high-quality neighborhood guide can become the anchor for every adjacent asset.

The benchmark is strong enough to justify this approach on its own. According to this real estate lead generation methodology, SEO-optimized neighborhood guides can generate 5 to 10 qualified leads per page per month after 3 to 6 months. The same methodology reports that guides ranking in the top 3 can see lead form submission rates of 20 to 30% when they are built as 2,000+ word guides with schema markup.

That fact is usually interpreted as an SEO opportunity. It is more useful as an AI retrieval opportunity. A long-form neighborhood guide works because it contains enough context for a model to answer multiple related questions from a single asset.

Structure determines whether AI can trust the page

An Evidence Cluster is not one long article. It is a set of linked proof objects designed for retrieval. For a target neighborhood, the core cluster usually includes:

Anchor guide: A detailed neighborhood page covering schools, commute patterns, amenities, housing stock, and market context.

Market evidence layer: Supporting reports or updates that explain price movement and inventory conditions in plain language.

Entity proof: Agent bio pages, Google Business Profile alignment, and local experience signals tied to the same geography.

Media layer: Video walkthroughs, map-based orientation, and property explainers with descriptive text.

Conversion surface: CMA offers, registration forms, showing requests, and email capture attached to the informational asset.

Structured data matters. Schema such as LocalBusiness, Article, and FAQPage doesn't create authority by itself. It reduces ambiguity. It tells the machine what the page is, who it refers to, and how one object relates to another.
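As a minimal sketch of what that markup can look like, the snippet below builds JSON-LD for a FAQPage and a LocalBusiness using schema.org's published vocabulary. The brokerage name, URL, neighborhood, and question text are hypothetical placeholders, and the helper function names are assumptions made for this illustration rather than part of any library.

```python
import json


def faq_jsonld(questions: list) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions
        ],
    }


def business_jsonld(name: str, url: str, area: str) -> dict:
    """Build a schema.org LocalBusiness object tied to one service area."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "areaServed": area,
    }


def to_script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script tag used to embed it in a page."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


# Hypothetical values for illustration only.
faq = faq_jsonld([
    ("How long is the commute from Maple Heights to downtown?",
     "Most residents report a drive of under 20 minutes outside rush hour."),
])
business = business_jsonld("Example Realty", "https://example.com", "Maple Heights")

print(to_script_tag(faq))
print(to_script_tag(business))
```

Keeping the neighborhood name identical across the FAQ text, the business object, and the visible page copy is what lets a machine connect the three as one entity.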

A strong cluster also avoids generic writing. Thin local pages usually fail because they repeat obvious claims instead of presenting useful distinctions. "Great schools and nice parks" is weak evidence. A page that differentiates commute logic, buyer fit, and neighborhood tradeoffs is stronger because it helps the model answer comparative questions.

Operational standard: Every paragraph should either define, differentiate, verify, or route the user to the next decision.

A helpful adjacent resource for teams extending valuation assets from these clusters is AI for real estate CMAs, especially when seller capture is part of the content architecture.


The cluster must convert after it earns visibility

Visibility without a capture path is wasted demand. The Evidence Cluster therefore includes conversion mechanics directly on informational pages. The most effective pattern is simple: answer the research question fully, then offer the next object the user is seeking.

That next object varies by intent:

A relocation buyer wants a shortlist, not a generic newsletter.

A seller wants a valuation frame, not a splashy homepage.

An investor wants market explanation, not branding language.

Many brokerages fail at this point. They publish educational content, then send every visitor to the same contact page. AI-first lead generation requires intent-matched conversion surfaces inside the cluster itself. The page that earned trust has to complete the handoff.

An Economic Analysis of Lead Sources: Old vs New

Lead source economics changed when search shifted from ranked lists to synthesized answers. In the old model, a brokerage could rent attention and treat visibility as a media buying problem. In the new model, generative systems reward evidence density, citation clarity, and topical coverage. That change matters because lead cost is no longer the only variable. Retrieval durability now determines whether acquisition spend behaves like rent or like infrastructure.

Paid channels still have a place. Zillow leads can cost $50 to $180 each depending on market value, according to AgentZap's real estate lead statistics and portal cost data. Analysts should read that number carefully. It measures access to demand inside a third-party environment, not ownership of the demand source. Once spend pauses, placement disappears, and the acquisition system resets.

For teams still assessing paid acquisition mechanics, this real estate PPC guide is a useful tactical reference. The strategic constraint is different. PPC and portal spend can buy visits, but they rarely build a retrievable knowledge asset that AI systems continue to cite after the campaign ends.

Evidence Clusters change the unit economics because they are built for retrieval, not interruption. A well-structured cluster accumulates entity associations, internal corroboration, and intent-matched pages over time. That architecture gives large language models more reasons to trust the brokerage as a source on neighborhoods, pricing logic, school boundaries, relocation patterns, and transaction questions. Teams that want that visibility should study the mechanics behind optimizing content for AI Overviews and answer engines, because distribution increasingly follows machine selection before human clicks.

The comparison is straightforward.

| Channel | Avg. Cost Per Lead | Lead Intent | Long-Term Asset Value |
|---|---|---|---|
| Zillow Premier Agent | $50 to $180 per lead based on market value, per AgentZap data cited above | Usually high because the user is actively browsing property portals | Low. Lead flow depends on continued spend and platform access |
| Google PPC | Qualitatively variable | Often high for direct search intent, but traffic quality depends on query control and landing page match | Low to moderate. Campaign learning can improve efficiency, but traffic usually stops when spend stops |
| Social media ads | Qualitatively mixed | Often earlier-stage intent unless the offer is highly specific | Low. Reach is rented and audience attention decays quickly |
| Evidence Cluster model | Upfront content and technical investment rather than a fixed cited CPL | High when the page answers a specific neighborhood or transaction question with verifiable detail | High. The cluster remains an owned asset that can continue earning citations and leads |
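The rent-versus-infrastructure contrast in the table can be made concrete with a rough worked calculation. The sketch below uses the figures cited in this article (5 to 10 qualified leads per page per month after a 3 to 6 month ramp, and $50 to $180 per portal lead). The $4,000 build cost and $100 monthly upkeep are purely hypothetical inputs chosen for illustration, and the model assumes zero leads during the ramp period.

```python
def cluster_cost_per_lead(
    upfront: float,
    monthly_upkeep: float,
    ramp_months: int,
    leads_per_month: float,
    horizon_months: int,
) -> float:
    """Average cost per lead for an owned page over a time horizon.

    Assumes no leads arrive during the ramp period and a flat lead
    rate afterwards. Returns infinity if no leads have arrived yet.
    """
    productive_months = max(horizon_months - ramp_months, 0)
    total_leads = productive_months * leads_per_month
    total_cost = upfront + monthly_upkeep * horizon_months
    return total_cost / total_leads if total_leads else float("inf")


# Conservative end of the cited benchmark: 6-month ramp, 5 leads per month.
# The upfront and upkeep figures are hypothetical assumptions.
for horizon in (6, 18, 36):
    cpl = cluster_cost_per_lead(
        upfront=4000, monthly_upkeep=100,
        ramp_months=6, leads_per_month=5, horizon_months=horizon,
    )
    print(f"{horizon} months: ${cpl:,.2f} per lead")
```

Under these assumptions the page has no leads to show at month 6, lands inside the cited portal range by month 18, and approaches the bottom of that range by month 36, because the upfront cost is spread over a growing lead count. The comparison is only directional: a qualified lead from an owned page and a purchased portal lead are not identical units.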

The non-obvious advantage sits on the balance sheet. Paid media buys a stream of opportunities. Evidence buys a position in the market's knowledge layer.

That distinction becomes more important as AI intermediates discovery. If a brokerage publishes generic blog posts, it gets little compounding value because generic content is easy to replace. If it publishes Evidence Clusters with original local proof, structured corroboration, and clear conversion paths, it creates a hard-to-displace asset. The lead source then shifts from purchased exposure to recurring citation.

The brokerage that owns the evidence controls a larger share of future demand, because AI systems keep returning to sources that are specific, corroborated, and easy to retrieve.

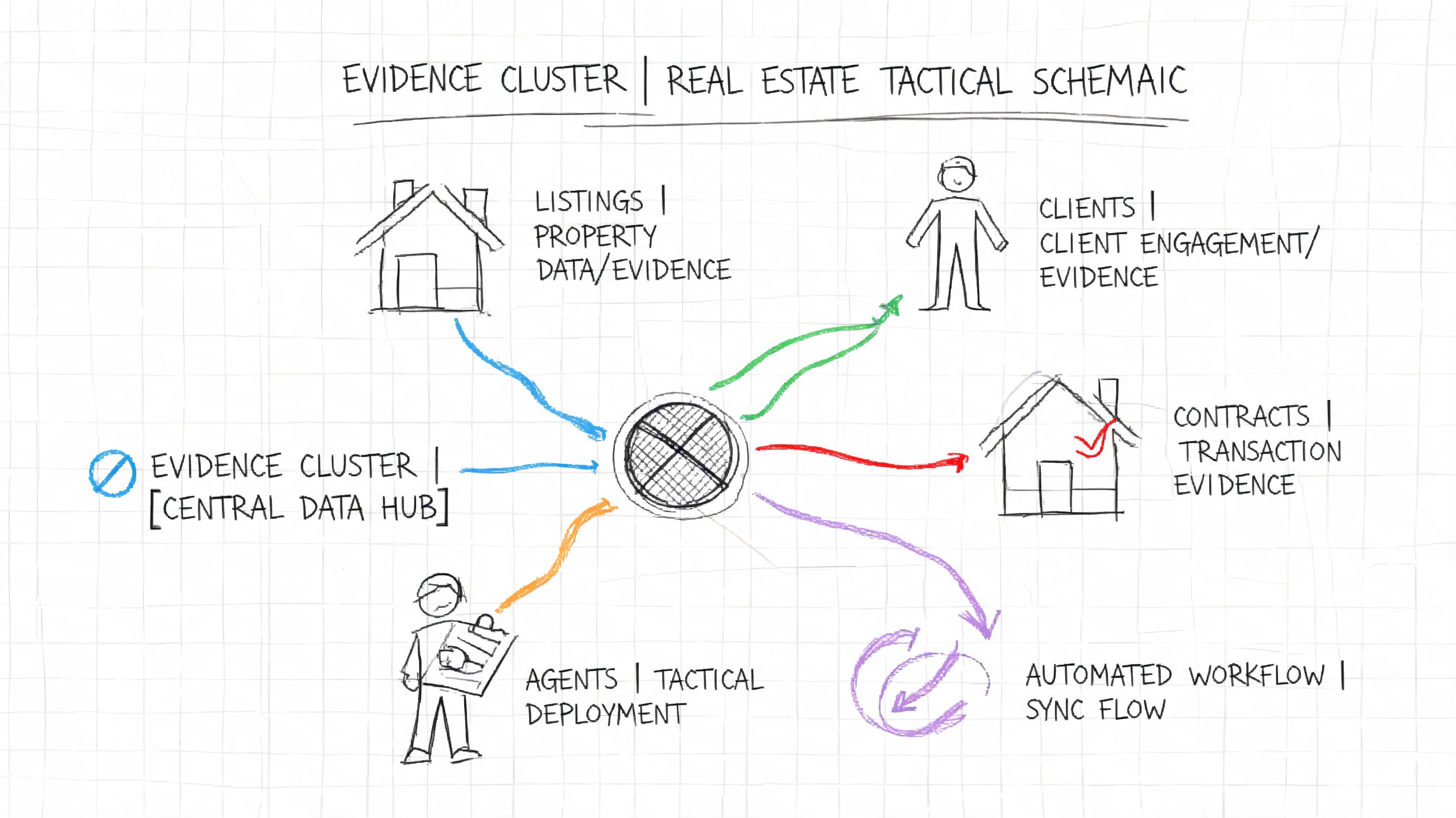

Tactical Deployment: Automation and Engagement

Deployment speed determines whether AI visibility becomes a lead

An Evidence Cluster can win discovery and still fail commercially if the handoff breaks. In real estate, intent decays fast because the query usually maps to an active decision: tour timing, financing readiness, price expectations, or neighborhood fit. If the site captures that demand but routes it slowly or vaguely, the brokerage loses the advantage it just earned in the answer layer.

The operational conclusion is straightforward. Follow-up must be designed as an automated system with human escalation, not as a notification that waits for agent attention. This section matters for any team studying how to generate real estate leads online, because online lead generation is not a publishing problem alone. It is a routing, qualification, and response-timing problem.

The correct automation model starts with page-level intent

The highest-performing setup ties every conversion path to the specific Evidence Cluster page that produced it. A generic "contact us" workflow throws away the very signal that made the visitor convert. A lead from a page about school-zone tradeoffs in one neighborhood is different from a lead arriving from a condo pricing page or a relocation guide. The system should preserve that context from the first form field through agent assignment.

The stack is simple. A CRM stores the record and ownership logic. Trigger-based automation moves the lead instantly into SMS, email, or chat. A conversational intake layer collects the missing details that the form should not force upfront. The same information architecture that improves citation likelihood in AI systems also improves conversion architecture on site. Teams that are refining answer-layer visibility should study optimization patterns for AI Overviews, because retrieval clarity and routing clarity depend on the same discipline: explicit entities, explicit intent, and explicit next steps.

A practical chain looks like this:

Lead enters through an Evidence Cluster page. The form captures transaction type, location, and timing with fields tied to the page topic.

CRM creates the contact and preserves source context. Tags should identify the exact cluster, neighborhood, and probable intent category.

An acknowledgement message sends immediately. The message should confirm receipt and present one clear next action, not a menu of options.

Chat or SMS asks a short qualification sequence. Three questions are usually enough to identify urgency, financing or valuation status, and geographic focus.

Assignment follows specialization rules. Buyer inquiries, seller inquiries, investor inquiries, and relocation inquiries should not enter the same queue if the team has role or neighborhood specialization.

Human outreach follows the qualification signal. Automation handles speed and data capture. Agents handle ambiguity, trust, and conversion.
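The first four steps of the chain above can be sketched in a few lines. This is an illustration under stated assumptions, not a specific CRM's API: the `Lead` fields, the tag format, the message wording, and the three qualification topics are all hypothetical names chosen to mirror the steps described here.

```python
from dataclasses import dataclass, field


@dataclass
class Lead:
    contact: str
    transaction_type: str  # e.g. "buyer", "seller", "investor", "relocation"
    neighborhood: str
    timeline: str
    source_page: str       # the Evidence Cluster page that produced the lead
    tags: list = field(default_factory=list)


def preserve_context(lead: Lead) -> Lead:
    """Tag the record with its exact cluster, neighborhood, and intent."""
    lead.tags = [
        f"cluster:{lead.source_page}",
        f"neighborhood:{lead.neighborhood}",
        f"intent:{lead.transaction_type}",
    ]
    return lead


def acknowledgement(lead: Lead) -> str:
    """Confirm receipt and offer exactly one next action."""
    return (
        f"Thanks for reaching out about {lead.neighborhood}. "
        "Reply with a good time for a quick call and we will confirm it."
    )


# Three topics, matching the short qualification sequence described above.
QUALIFICATION = ("urgency", "financing_or_valuation", "geographic_focus")


def next_question(answers: dict):
    """Return the next unanswered qualification topic, or None when done."""
    for topic in QUALIFICATION:
        if topic not in answers:
            return topic
    return None


lead = preserve_context(Lead(
    contact="buyer@example.com",
    transaction_type="buyer",
    neighborhood="Maple Heights",
    timeline="0-3 months",
    source_page="/neighborhoods/maple-heights-schools",
))
print(lead.tags)
print(acknowledgement(lead))
print(next_question({"urgency": "moving soon"}))
```

The design choice worth noting is that the source page travels with the record from the first step, so later assignment and reporting never have to guess which page earned the inquiry.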

Qualification scripts should reduce uncertainty

The script does not need personality. It needs precision.

Weak chatbot flows copy consumer app language and ask broad questions that produce broad answers. Strong flows narrow the decision path quickly. That means every prompt should help classify urgency, local fit, or transaction readiness.

A buyer-side opener can be direct:

"Thanks for reaching out about homes in [Neighborhood]. Are you planning a move soon, comparing areas, or still in the research stage?"

A seller-side opener should classify the commercial objective:

"Thanks for requesting information about your area. Are you looking for a current pricing estimate, preparing for a listing conversation, or planning a sale later?"

These distinctions shape the next action. Relocation buyers need area comparison assets. Active buyers need scheduling and inventory alerts. Sellers need valuation context, positioning guidance, and a path to consultation.

Automation should route by evidence, not by convenience

Many brokerages still send every web lead into a general pond and call that efficiency. It is the opposite. A pooled queue ignores the source evidence already collected by the page, the form, and the first reply. The better model scores and routes leads using observable signals: origin cluster, geography, stated timeline, property type, and requested action.
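A minimal sketch of that scoring and routing logic follows. The point values, the nurture threshold, the queue names, and the field names are hypothetical assumptions for illustration; a real team would calibrate them against its own conversion data.

```python
# Hypothetical point values for two of the observable signals.
TIMELINE_POINTS = {"0-3 months": 3, "3-6 months": 2, "6+ months": 1, "researching": 0}
ACTION_POINTS = {"showing_request": 3, "cma_request": 3, "shortlist": 2, "newsletter": 0}

NURTURE_THRESHOLD = 3  # assumed cutoff below which a lead goes to long-term nurture


def score(lead: dict) -> int:
    """Sum points for stated timeline and requested action; unknown values score 0."""
    return (
        TIMELINE_POINTS.get(lead.get("timeline"), 0)
        + ACTION_POINTS.get(lead.get("requested_action"), 0)
    )


def route(lead: dict, specialists: dict) -> str:
    """Pick a queue from the lead's score, transaction type, and neighborhood."""
    if score(lead) < NURTURE_THRESHOLD:
        return "nurture"
    key = (lead["transaction_type"], lead["neighborhood"])
    # Fall back to a type-level queue when no neighborhood specialist exists.
    return specialists.get(key, f"{lead['transaction_type']}-general")


specialists = {("seller", "Maple Heights"): "seller-maple-heights"}

seller = {
    "transaction_type": "seller", "neighborhood": "Maple Heights",
    "timeline": "0-3 months", "requested_action": "cma_request",
}
researcher = {
    "transaction_type": "buyer", "neighborhood": "Maple Heights",
    "timeline": "researching", "requested_action": "newsletter",
}

print(route(seller, specialists))
print(route(researcher, specialists))
```

Because every routing decision is derived from recorded signals, the same fields can later be grouped by origin cluster to see which pages produce which kinds of inquiry.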

That design creates a second-order advantage. It improves response relevance, and it also improves the training signal for future optimization. Over time, the team can identify which Evidence Clusters produce high-intent seller inquiries, which neighborhoods generate low-friction buyer consultations, and which pages attract research traffic that belongs in a longer nurture path. That feedback loop is what turns content into an engineered acquisition system instead of a publishing calendar.

The tactical lesson is clear. AI-first lead generation succeeds only when answer visibility, capture architecture, and response logic operate as one system.

Reframing Success Measurement in the AI Era

Traffic is no longer the primary score

Most real estate marketing dashboards still prioritize sessions, clicks, and social engagement. Those metrics describe activity. They don't adequately describe discoverability inside AI systems or the commercial value of that discoverability.

That blind spot is already visible in the market. A key gap in existing guidance is the lack of a framework for attributing ROI from organic channels, especially when teams must choose between long-horizon SEO work and immediate paid channels over 12 to 18 months, as noted in this analysis of real estate lead generation gaps. The missing layer isn't more traffic reporting. It is answer-layer attribution.

Three KPIs matter more than vanity metrics

A better system measures whether the brand appears inside the decision layer and whether that visibility produces qualified motion. Three KPIs are more useful than generic traffic totals.

| KPI | What it measures | Why it matters |
|---|---|---|
| Share of Answer | How often the brand or content appears in AI-generated responses for target real estate prompts | This shows whether the brokerage is present where discovery now starts |
| Citation Quality | Whether the brand is cited as a primary authority, supporting reference, or indirect mention | Placement quality affects trust and downstream conversion |
| Lead Velocity from Citation | How quickly users exposed to citation-driven entry points become qualified leads | This ties answer visibility to operational sales outcomes |
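One way to make these three KPIs computable is sketched below. It assumes the team keeps a simple log of target prompts it has checked against AI systems, plus first-visit and qualification dates for leads that arrived through citation-driven pages. The record layout, field names, and sample data are hypothetical, and using the median for velocity is one reasonable choice among several.

```python
from collections import Counter
from datetime import date
from statistics import median


def share_of_answer(checks: list) -> float:
    """Fraction of checked prompts where the brand appeared at all."""
    if not checks:
        return 0.0
    return sum(1 for c in checks if c["citation_type"]) / len(checks)


def citation_quality(checks: list) -> Counter:
    """Count appearances by type: primary, supporting, or mention."""
    return Counter(c["citation_type"] for c in checks if c["citation_type"])


def lead_velocity_days(leads: list):
    """Median days from first citation-driven visit to qualified status."""
    gaps = [(lead["qualified_on"] - lead["first_visit"]).days for lead in leads]
    return median(gaps) if gaps else None


# Hypothetical prompt log; citation_type is None when the brand was absent.
checks = [
    {"prompt": "best neighborhoods for families near downtown", "citation_type": "primary"},
    {"prompt": "who are good agents in Maple Heights", "citation_type": "mention"},
    {"prompt": "is Maple Heights a good investment", "citation_type": None},
    {"prompt": "Maple Heights school zones", "citation_type": None},
]

# Hypothetical leads that entered through citation-driven pages.
leads = [
    {"first_visit": date(2025, 3, 1), "qualified_on": date(2025, 3, 3)},
    {"first_visit": date(2025, 3, 5), "qualified_on": date(2025, 3, 9)},
    {"first_visit": date(2025, 3, 10), "qualified_on": date(2025, 3, 19)},
]

print(share_of_answer(checks))
print(citation_quality(checks))
print(lead_velocity_days(leads))
```

Tracked over a fixed prompt set and a fixed cadence, these numbers give the dashboard a trend line for answer-layer presence that session counts cannot provide.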

These KPIs change management behavior. Teams stop celebrating broad traffic lifts that don't create pipeline quality. They start investing in topic clusters, entity consistency, and intent-matched capture because those factors improve visibility where intent is concentrated.

The broader conclusion is simple. Real estate lead generation is no longer a channel problem. It is a knowledge engineering problem. The brokerages that treat their expertise as structured evidence will control the answer layer. The ones that don't will keep renting demand from platforms that own the relationship.

Teams that want a measurable plan for AI search visibility can book a call with Algomizer. The objective isn't more content. It's building answer-layer visibility that can be verified, attributed, and tied to lead flow.