AEO vs SEO vs GEO: Enterprise Search Strategy

AEO vs SEO vs GEO: Compare traditional, answer, and generative search engines. Understand tactical differences to build your enterprise strategy.

Subtitle: Optimizing the indexed web, the answer layer, and the generative knowledge layer

Date: April 22, 2026

Chapter 1

Executive summary: The most common advice about AEO vs SEO vs GEO is wrong. These aren't adjacent tactics inside one channel. They are optimization disciplines for three different machine layers. SEO targets retrieval from the indexed web. AEO targets extraction into direct answers. GEO targets citation inside generative synthesis. That distinction matters because Google's AI Overviews cut click-through rates for top-ranking content by 34.5% while AI referrals to top websites rose 357% year over year, according to LLMRefs. The architecture changed. Strategy has to change with it.

AI visibility is still treated as a feature of SEO. That frame is too narrow. A page can rank well and still lose the answer. A page can win the answer and still fail to shape the model's synthesis. In the new retrieval stack, the question isn't just "How does a page rank?" It's "Which system is making the selection?"

The practical consequence is straightforward. Marketing leaders now compete across multiple discovery surfaces at once. Anyone still operating with a single-channel search playbook is defending traffic while competitors are engineering recall across search engines, answer engines, and LLMs.

| Discipline | Layer targeted | Machine outcome | Primary success condition |
|---|---|---|---|
| SEO | Indexed web | Ranking in traditional results | Crawlability, relevance, authority |
| AEO | Answer layer | Selection for direct answers and overviews | Structured clarity, concise extraction |
| GEO | Generative synthesis layer | Citation, recommendation, narrative influence | Citable facts, entity consistency, semantic depth |

Table of Contents

The Search Landscape Is Fracturing

Three systems now decide visibility

Clicks are no longer the whole market

Defining the Battlefield: SEO, AEO, and GEO

SEO targets the web index

AEO targets extractable answers

GEO targets synthesis and citation

A Mechanical Comparison of Retrieval and Ranking

SEO retrieves documents

AEO extracts answer blocks

GEO assembles evidence clusters

The Tactical and Measurement Divergence

Operations diverge before reporting does

Measurement shifts from visits to presence

Tactical & Measurement Comparison: SEO vs. AEO vs. GEO

Building Your Integrated Search Strategy

The visibility stack allocates by business model

Priority depends on buying behavior

Winning in the Post-Search Era

The destination is source status

Authority now means machine recall

The Search Landscape Is Fracturing

Search is no longer a single market with one gatekeeper and one success metric. Enterprise visibility now depends on how machines retrieve, compress, and reuse information across multiple systems.

That change matters because companies are still allocating budget as if every discovery path ends with a ranked blue link. It does not. Some systems return documents. Some extract answer fragments. Some synthesize a response from distributed evidence and cite selectively, or not at all.

Three systems now decide visibility

The common mistake is to treat AEO and GEO as minor extensions of SEO. The mechanics do not support that view. Each discipline targets a different layer of the retrieval stack, and each layer applies different selection criteria.

SEO still governs performance in the indexed web. AEO affects whether content can be parsed into direct-response formats. GEO shapes whether a model recognizes your material as usable source input during synthesis. Our explanation of how GEO works in modern AI retrieval systems covers that source-selection layer in more detail.

This framing changes strategy. The question is not which acronym wins. The question is which layer of the stack matters most for your category, buying journey, and margin structure.

Semantic structure sits near the center of this shift. Retrieval systems no longer rely on literal term matching alone, especially in high-intent or ambiguous queries where concept matching and entity relationships matter more. Contesimal's analysis of semantic search vs keyword search is useful here because it explains why machine interpretation often outranks raw keyword repetition.

Practical rule: Treat visibility as a stack problem. SEO targets the index, AEO targets the answer layer, and GEO targets the model's source knowledge base.

Clicks are no longer the whole market

What changed is not demand. It is the interface through which demand gets resolved.

As noted earlier, AI-generated answer formats are reducing the share of discovery that ends in a site visit while increasing the share that happens inside summaries, overviews, and assistant responses. For operators, that creates a measurement problem first and a traffic problem second. A brand can shape consideration without receiving the click that would have made that influence visible in legacy reporting.

The strategic implications are straightforward:

SEO protects indexed discoverability. It keeps your documents retrievable and competitive in conventional search results.

AEO improves answer extraction. It increases the odds that your content supplies the response users see before they decide to click.

GEO improves source inclusion. It increases the odds that generative systems draw from your material when assembling a synthesized answer.

A CMO should read this split clearly. Presence, attribution, and visits are no longer the same metric viewed from different angles. They are separate outcomes produced by separate retrieval systems.

Organizations that still evaluate search only through session growth are undercounting influence where AI interfaces intercept the query and overcounting success in channels that still produce a click.

Defining the Battlefield: SEO, AEO, and GEO

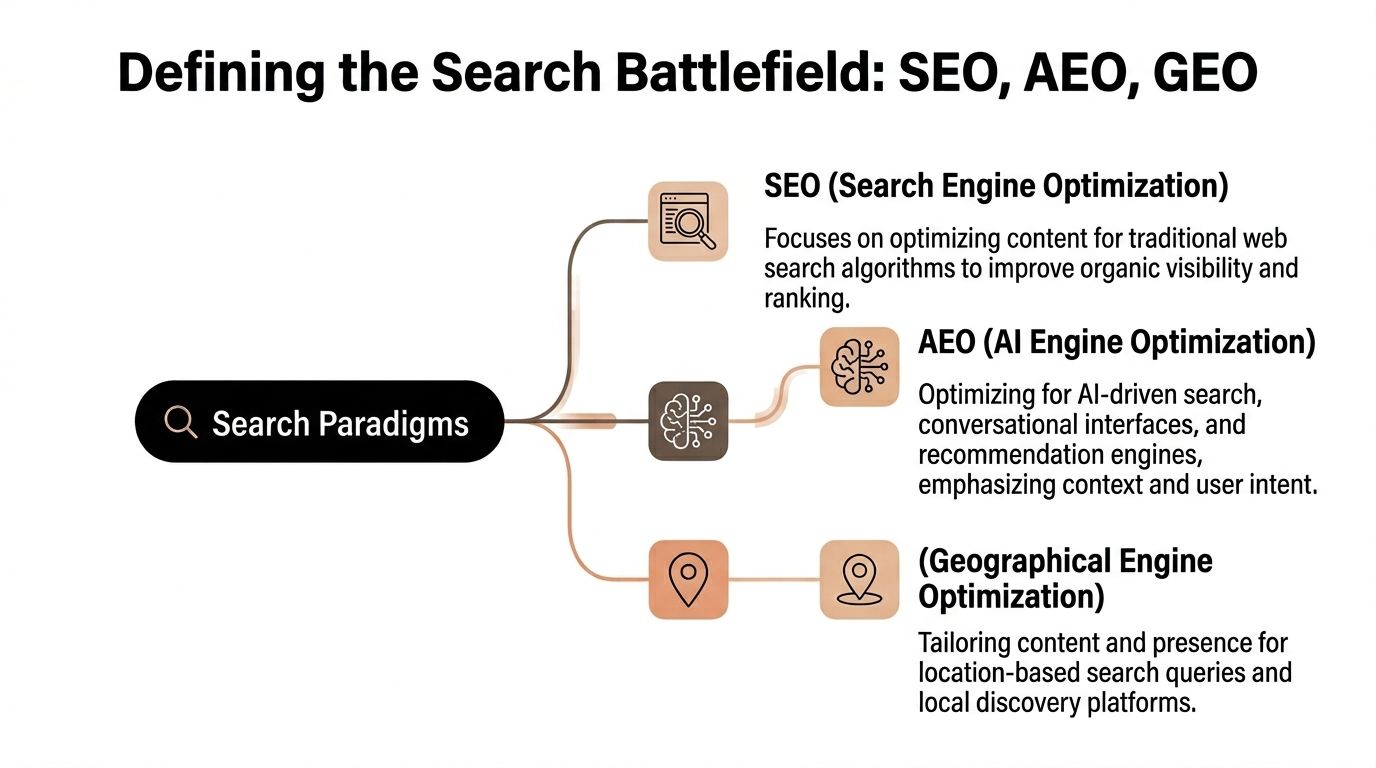

The cleanest way to understand AEO vs SEO vs GEO is to stop defining them by tactics. Each one targets a different retrieval layer inside the modern information stack.

A simple visual helps anchor the distinction.

SEO targets the web index

SEO optimizes for document retrieval in engines such as Google. Its job is to help a page enter the right candidate set and rank well once retrieved. That means technical health, internal structure, relevance signals, and authority signals still matter.

The most concise definition is this. SEO optimizes the library shelf. It determines whether the book is cataloged, where it's placed, and whether the librarian can find it quickly.

AEO targets extractable answers

AEO optimizes for systems that don't want to show ten links first. They want a short answer they can safely extract and present. That includes featured snippets, voice interfaces, and AI Overviews.

The mechanics are different from SEO. According to Vizup, SEO prioritizes technical health, backlinks, and long-form keyword content, while AEO demands Schema, concise Q&A, and question-based structures for zero-click visibility, and GEO requires data-rich, research-backed content with expert authority for citation in generative platforms like ChatGPT. That isn't a minor tactical variation. It's a different selection objective.

AEO is the index card in the library analogy. It doesn't need the whole book. It needs a clean, extractable statement that answers the question directly.

Later in the workflow, teams usually need a more technical explanation of generative optimization mechanics. This breakdown of how GEO works is useful because it separates AI citation logic from traditional search ranking logic.

GEO targets synthesis and citation

GEO optimizes for the evidence layer used by generative systems. A model doesn't just rank a page and display it. It synthesizes across sources, resolves conflicts, and produces an answer in its own language. GEO therefore targets source eligibility, not just visibility.

That makes GEO the reference book the librarian consults while writing the answer. The content must be clear enough to parse, specific enough to cite, and authoritative enough to survive synthesis.

A practical mental model works well here:

SEO asks: Can the engine find and rank this page?

AEO asks: Can the interface extract a short answer from it?

GEO asks: Can a model use this as trusted source material?

| Layer | Optimization discipline | What the machine wants | Typical output |
|---|---|---|---|
| Retrieval index | SEO | Relevant, authoritative documents | Ranked links |
| Answer interface | AEO | Short, structured, extractable passages | Snippets, overviews, voice answers |
| Generative synthesis | GEO | Verifiable source material and coherent evidence | Citations, mentions, recommendations |

Back to Chapter 1. Book a call with our strategy team at Algomizer.

A Mechanical Comparison of Retrieval and Ranking

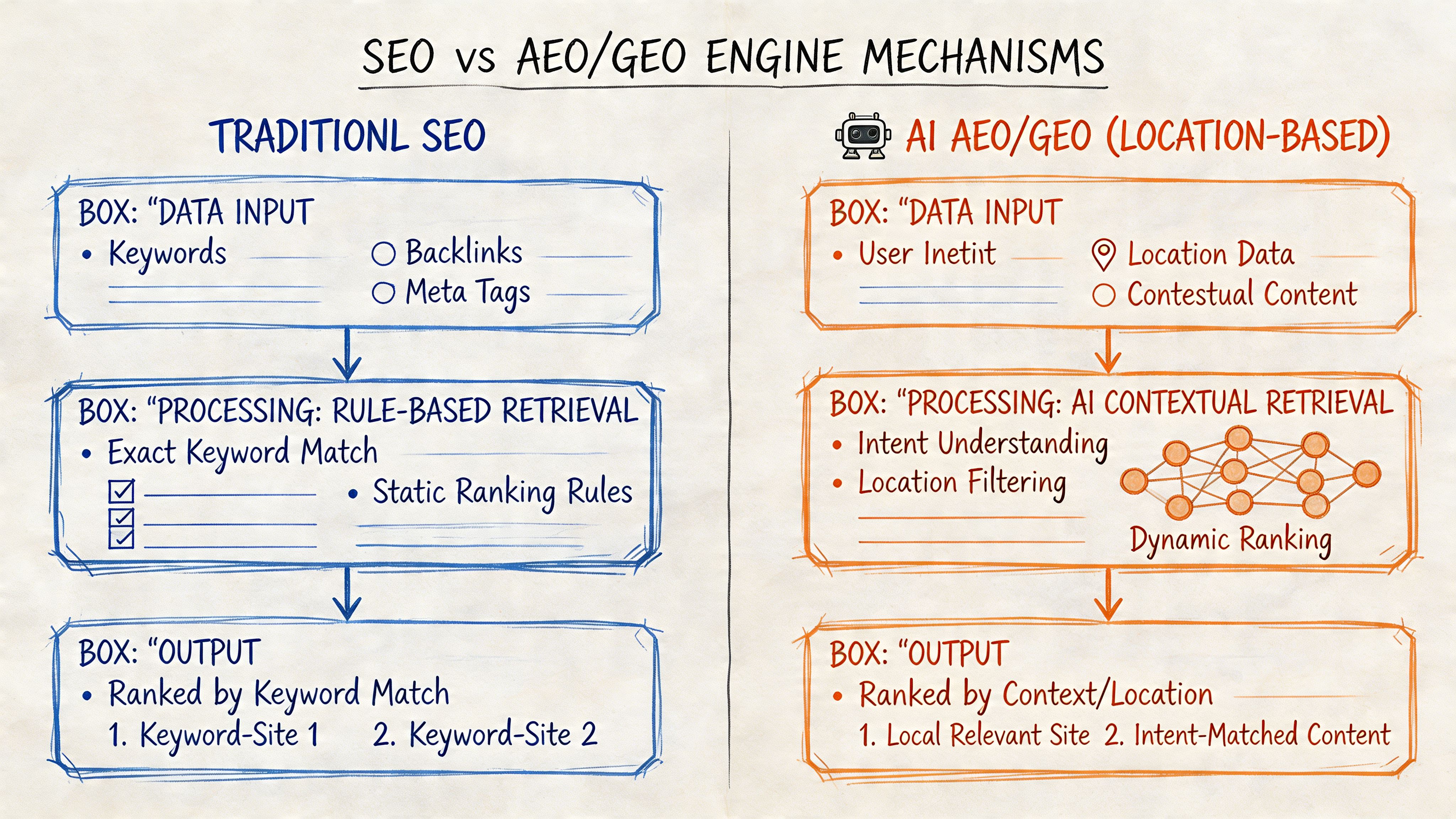

The most useful way to compare AEO vs SEO vs GEO is by looking at what each system retrieves. The retrieval unit changes. So do the winning signals.

SEO retrieves documents

Traditional SEO operates on document retrieval and rank ordering. The engine crawls, indexes, clusters, and ranks pages based on relevance and authority. A strong backlink profile can still improve candidate selection because the system is evaluating documents as documents.

That does not mean a high-authority page automatically wins in AI contexts. It only means the page has stronger odds of ranking in a web index. The retrieval object is the page.

AEO extracts answer blocks

AEO works differently because the system doesn't always want the full page. It wants an answer block. Semantic HTML, FAQ structure, Schema markup, and concise question-based copy reduce extraction friction.

This changes what content teams should ship. Long-form still has value, but only if it contains highly legible sections that can be lifted into a direct answer without heavy rewriting. In practical terms, AEO rewards pages that are chunked into machine-readable response units.

AEO performance often comes down to whether a machine can isolate one paragraph, one list, or one table without guessing what the author meant.
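The FAQ structure and Schema markup mentioned above can be sketched in a few lines. This is a minimal illustration, assuming the page's question and answer copy already exists; the helper name `faq_jsonld` and the sample pair are hypothetical, while `FAQPage`, `Question`, and `acceptedAnswer` are standard schema.org types and properties.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical copy; replace with the page's real question and answer text.
pairs = [
    (
        "What is AEO?",
        "AEO optimizes content so answer interfaces can extract a short, direct answer.",
    ),
]

markup = faq_jsonld(pairs)
print(json.dumps(markup, indent=2))
```

The printed JSON is typically embedded in the page inside a `<script type="application/ld+json">` element. Markup of this kind lowers extraction friction; it does not guarantee that any given answer surface will use it.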

GEO assembles evidence clusters

GEO is governed by generative retrieval and synthesis. In many AI workflows, the model or connected retrieval system identifies semantically relevant passages, compares sources, and then composes a response. This is why document authority by itself loses force. The system cares about evidence usability.

A useful external explainer on this broader shift is Documind's overview of modern information retrieval methods, especially for teams trying to understand vector-based recall and passage-level retrieval.

The operative concept here is Evidence Clusters. This is the set of verifiable facts, structured entities, supporting tables, and clear assertions that travel together well in machine retrieval. A page with dense Evidence Clusters gives a model more usable material than a page built around generic authority language.

That distinction helps explain benchmark results. In iBeam Consulting's comparison, GEO-optimized content achieved 3-5x higher incorporation into synthesized responses than traditional SEO pages, with 40-60% visibility in generative summaries versus 10-20% for SEO and 25-35% for AEO. Those numbers reveal something deeper than channel performance. They show that synthesis engines reward citable composition, not just rankable publication.

A second implication follows. Brand teams should stop asking only whether a page is authoritative. They should ask whether the page is retrievable at the claim level.

That is where semantic density matters. A passage that contains a named entity, a precise claim, supporting context, and clean formatting is easier for an LLM to reuse than broad copy with no extractable proof points. Teams working on Google-facing answer surfaces can apply that same principle to AI Overview readiness through this guide on how to optimize for AI Overviews.
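One way to make "retrievable at the claim level" concrete is a rough editorial audit of individual passages. The sketch below is a crude heuristic of our own for illustration, not how any search or generative system scores content: the function name `audit_passage`, the three checks, and the 80-word threshold are all assumptions.

```python
import re


def audit_passage(text, entities, max_words=80):
    """Crude editorial checks for whether a passage carries its own evidence.

    Hypothetical heuristic: it only tests for a named entity, a figure,
    and a compact length. It does not model any real retrieval system.
    """
    lowered = text.lower()
    return {
        "names_entity": any(e.lower() in lowered for e in entities),
        "has_figure": bool(re.search(r"\d", text)),
        "is_compact": len(text.split()) <= max_words,
    }


entities = ["iBeam Consulting"]

specific = (
    "In iBeam Consulting's comparison, GEO-optimized content achieved "
    "3-5x higher incorporation into synthesized responses."
)
generic = "Our solutions deliver world-class results for leading brands."

specific_report = audit_passage(specific, entities)
generic_report = audit_passage(generic, entities)
print(specific_report)
print(generic_report)
```

The first passage passes all three checks; the second is compact but names no entity and states no figure, which is the pattern the paragraph above describes as broad copy with no extractable proof points.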

| Mechanism | Unit retrieved | Winning signal | Failure mode |
|---|---|---|---|
| SEO | Full document | Authority and relevance | Page ranks poorly |
| AEO | Passage or answer block | Structured clarity and directness | Answer isn't extractable |
| GEO | Evidence cluster across sources | Citability and semantic density | Model ignores or paraphrases without attribution |

The Tactical and Measurement Divergence

Execution diverges long before dashboards do. Teams that say they're "doing SEO, AEO, and GEO" usually mean they're publishing one article and hoping all systems reward it. That is not an operating model.

Operations diverge before reporting does

SEO work is still familiar. Google Search Console, Ahrefs, and SEMrush support ranking analysis, technical diagnosis, and backlink monitoring. Content tends to emphasize topic coverage, internal linking, long-form assets, and page-level optimization.

AEO work shifts toward formatting discipline. Teams engineer clean question headers, concise answer blocks, FAQ schema, short definitional paragraphs, and comparison tables that can be lifted directly into answer interfaces. Editorial quality still matters, but extraction quality matters just as much.

GEO work is more research-intensive. The content needs original framing, fact density, named entities, evidence trails, and passages that an LLM can safely cite or summarize. Measurement is also less mature, so teams often track AI responses manually or with browser-based observation instead of relying on standard web analytics.

Operational test: If the content team can't identify the single sentence, list, or evidence block a machine should reuse, the asset isn't ready for AEO or GEO.

Measurement shifts from visits to presence

This is where many enterprise programs run into trouble. SEO dashboards are optimized for sessions, rankings, and CTR. Those metrics remain valid for the indexed web, but they don't fully capture visibility inside answer engines and generative systems.

The divergence is visible in comparative performance. According to Magneto IT Solutions, GEO delivers 2.8x higher brand recommendation rates in LLMs such as Claude and Gemini. In one e-commerce case on the query "Shopify automation tools NY", GEO content with contextual linkages appeared in 68% of AI abstracts, while AEO captured 32% of direct answers and SEO drove 12% of SERP clicks. This is not a simple ranking story. It is a recommendation and synthesis story.

That changes the KPI stack. For many teams, the more useful questions become:

SEO KPI: Are target pages gaining qualified organic visibility and preserving discoverability in web search?

AEO KPI: Is the brand appearing in direct-answer surfaces for high-intent questions?

GEO KPI: Is the brand being cited, mentioned, and recommended in model-generated responses?

The comparison below makes the split operational.

Tactical & Measurement Comparison: SEO vs. AEO vs. GEO

| Discipline | Primary Goal | Core Tactic | Key KPI | Time to Impact |
|---|---|---|---|---|
| SEO | Rank documents and drive site visits | Technical health, backlinks, long-form content | Rankings, organic traffic, CTR | Longer horizon |
| AEO | Win direct answers in zero-click interfaces | Schema, concise Q&A, extractable structure | Snippet presence, answer capture | Faster horizon |
| GEO | Influence AI summaries and recommendations | Fact-dense research, entity consistency, citable passages | Citation frequency, share of AI answer, recommendation presence | Model-dependent horizon |

A mature reporting stack usually pairs legacy SEO tooling with direct observation of AI outputs. Headless browsers are especially useful for repeated prompt testing because they capture visible results across interfaces where APIs don't provide consistent answer-level reporting.

One practical governance rule helps. Assign different owners for page performance and model presence, even when they collaborate on the same asset. The metrics are related, but they are not interchangeable.
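The presence metrics described above can be computed from a simple log of observed AI responses, whether collected manually or through browser-based testing. The sketch below assumes a team records one row per prompt and engine; the `Observation` fields, the engine labels, and the sample prompts are hypothetical placeholders, not a standard reporting schema.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    prompt: str
    engine: str
    mentioned: bool    # brand is named anywhere in the response
    cited: bool        # brand's domain appears as a linked source
    recommended: bool  # brand is suggested as an option


def presence_rates(observations):
    """Share of logged responses in which the brand is mentioned, cited, or recommended."""
    total = len(observations)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "recommendation_rate": 0.0}
    return {
        "mention_rate": sum(o.mentioned for o in observations) / total,
        "citation_rate": sum(o.cited for o in observations) / total,
        "recommendation_rate": sum(o.recommended for o in observations) / total,
    }


# Hypothetical log of four observed responses.
log = [
    Observation("best crm for law firms", "engine_a", True, True, False),
    Observation("best crm for law firms", "engine_b", True, False, True),
    Observation("crm comparison", "engine_a", False, False, False),
    Observation("crm comparison", "engine_b", True, True, True),
]

rates = presence_rates(log)
print(rates)
```

Keeping mention, citation, and recommendation as separate fields mirrors the point that presence and attribution are different outcomes. Filtering the log by `engine` or `prompt` before calling `presence_rates` gives a per-surface view.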

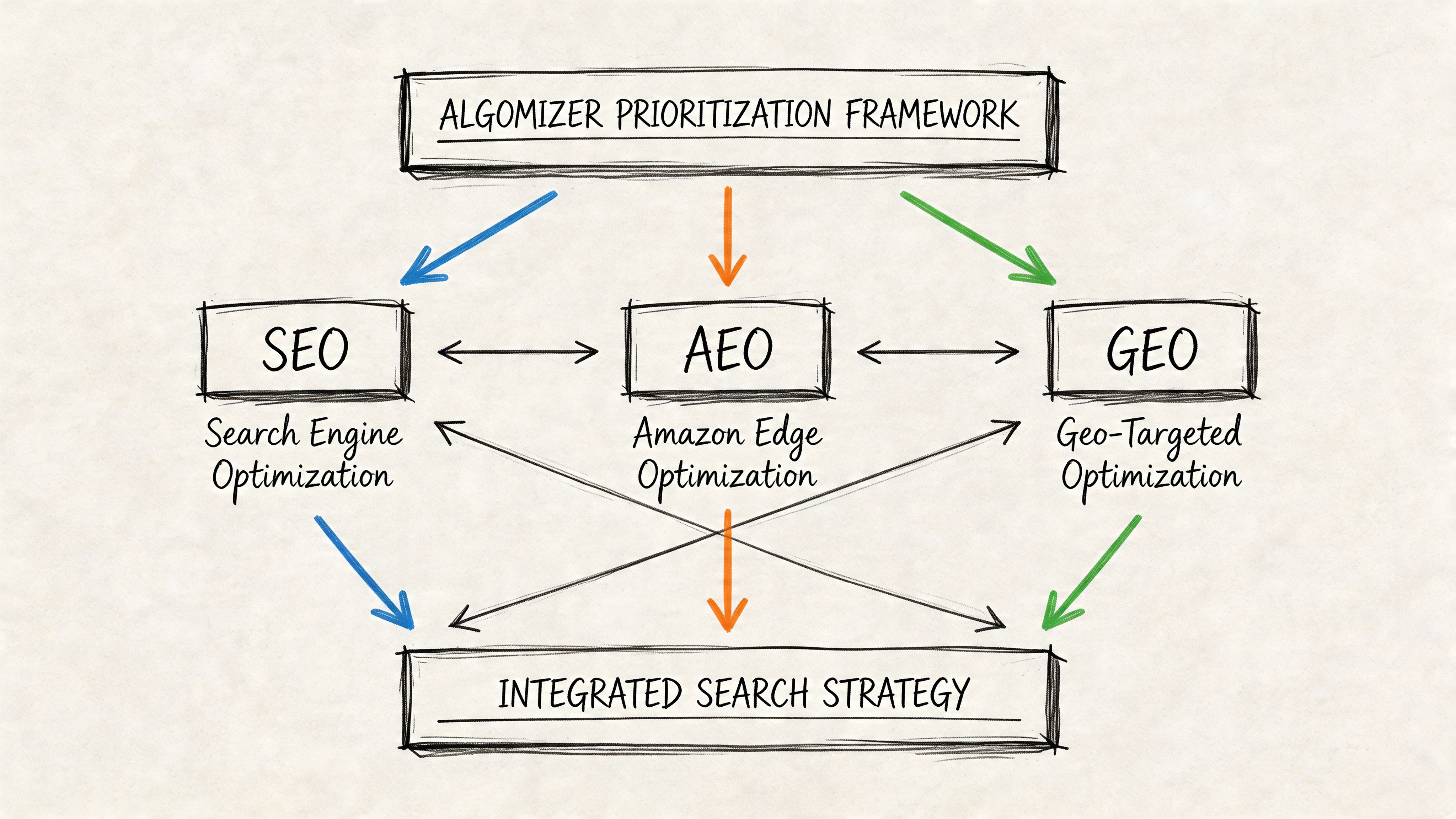

Building Your Integrated Search Strategy

A strong enterprise program doesn't choose between SEO, AEO, and GEO. It allocates them by business objective and market structure. The right question isn't which discipline wins. It's which layer deserves the next marginal dollar.

The visibility stack allocates by business model

The most effective framework is a layered one. SEO is baseline infrastructure. AEO is interface capture. GEO is narrative control. Each layer compounds the others, but not every company should weight them the same way.

For brands in mature categories with strong organic footprints, continued SEO investment protects existing demand capture. For brands facing heavy zero-click pressure, AEO earns presence where users stop at the answer. For brands in high-consideration categories, GEO shapes what buyers hear when they ask AI systems to compare, recommend, or explain.

A practical resource model can be expressed as two common mixes:

| Business condition | More suitable emphasis |
|---|---|
| Strong existing SEO, rising zero-click pressure, branded demand | 40/30/30 across SEO, AEO, GEO |
| Weak search foundation, category education still needed, long sales cycle | 70/20/10 across SEO, AEO, GEO initially |

Those ratios are planning tools, not universal truths. The point is sequencing. SEO builds crawlable and rankable coverage. AEO refines high-intent answer surfaces. GEO pushes the brand into the model's source set for strategic queries.
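As a worked example of the two mixes, the arithmetic can be expressed as a small allocation helper. The budget figure, the dictionary keys, and the function name `allocate` are illustrative assumptions; only the 40/30/30 and 70/20/10 splits come from the table above.

```python
def allocate(budget, mix):
    """Split a budget across disciplines using percentage shares that sum to 100."""
    if sum(mix.values()) != 100:
        raise ValueError("mix percentages must sum to 100")
    return {discipline: budget * share / 100 for discipline, share in mix.items()}


# The two planning mixes from the table; the keys are hypothetical labels.
MIXES = {
    "strong_seo_rising_zero_click": {"SEO": 40, "AEO": 30, "GEO": 30},
    "weak_foundation_long_cycle": {"SEO": 70, "AEO": 20, "GEO": 10},
}

# Hypothetical annual budget, for illustration only.
plan = allocate(500_000, MIXES["weak_foundation_long_cycle"])
print(plan)
```

Under the 70/20/10 mix, a 500,000 budget splits into 350,000 for SEO, 100,000 for AEO, and 50,000 for GEO. Revisiting the mix each planning cycle is what turns the ratio into sequencing rather than a fixed rule.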

Priority depends on buying behavior

The clearest way to prioritize is by query type and sales motion.

Choose heavier SEO weighting when buyers still click deep into category research and the site lacks foundational authority.

Choose heavier AEO weighting when branded questions, comparison queries, and support intent generate meaningful commercial value.

Choose heavier GEO weighting when prospects ask open-ended recommendation questions in markets where trust and expertise determine shortlist inclusion.

The strongest evidence for GEO concentration appears in competitive professional services. According to Stackmatix, case data shows GEO services producing 25-35% lead lifts for verticals such as law firms through entity-consistent, fact-dense content that boosts brand mentions in Perplexity and Claude responses. The same source notes that 60% of queries in 2025 ended in AI summaries without clicks. For firms that depend on trust before traffic, that combination changes budget logic.

A long sales cycle increases the value of being remembered inside an AI answer before a buyer ever reaches a website.

Enterprise leaders often miss the strategic inversion. GEO is not a replacement for SEO. GEO is the layer that turns subject-matter authority into machine-retrievable authority. In categories such as legal, financial services, and B2B SaaS, that can shape who gets recommended before the click even becomes possible.

Winning in the Post-Search Era

The endpoint of this shift is easy to miss if the analysis stays trapped in ranking language. The future isn't just about better search placement. It's about becoming source material for machine-generated judgment.

The destination is source status

SEO still matters because it gives crawlers and retrieval systems access to the brand's knowledge. AEO matters because it packages that knowledge into answer-ready forms. But GEO is where the competitive moat gets built. It determines whether a model treats the brand as a source worth reusing when a user asks for explanation, comparison, or recommendation.

That changes the target from page-one placement to source status. Teams are no longer optimizing only for a list of results. They are optimizing for reuse.

Authority now means machine recall

In the older web model, authority was often inferred through links, page performance, and visibility in SERPs. In the newer model, authority also includes whether systems such as ChatGPT, Gemini, Claude, and Perplexity can retrieve the brand's claims as coherent evidence.

That is why the strategic center of gravity has moved. Enterprises that want durable visibility need content that is not only searchable, but also extractable, citable, and consistent at the entity level. Teams working specifically on LLM visibility can apply that logic directly through tactics outlined in this guide on how to rank in ChatGPT.

The market used to reward the page that ranked. It now also rewards the source a machine trusts enough to summarize.

The most important conclusion is not that SEO is dead. It isn't. The stronger conclusion is that search has become one layer inside a broader synthesis economy. Brands that engineer for citable truth now will own more of the recommendation surface later.

Algomizer helps brands win visibility inside AI-generated answers across ChatGPT, Claude, Gemini, Perplexity, and other LLM platforms. The team combines AI search strategy, content engineering, technical implementation, and cross-platform visibility tracking to improve citation, recommendation, and answer presence. Brands that need a practical assessment of where they stand can book a call with Algomizer.