Search Marketing Intelligence in the AI Era

Redefine your strategy with AI-first search marketing intelligence. Learn how to shift from SEO to GEO and gain visibility in AI answers from ChatGPT & Gemini.

Search marketing intelligence no longer measures who ranks first. It measures who gets remembered, retrieved, and cited when AI systems answer instead of linking out.

Subtitle: From ranked links to citable evidence

April 2026

Generative Engine Optimization 101 - Chapter 1

Traditional search reporting still rewards position tracking, backlink growth, and page-level visibility. That model broke when AI answer layers began intercepting discovery. Google’s AI Overviews now appear in 9.46% of all desktop searches and reduce organic click-through rates by up to 34.5%, according to Clutch’s 2025 SEO statistics analysis. The implication is larger than a CTR decline. The search surface has changed from a directory of links into a synthesis engine.

The strategic consequence is stark. Search marketing intelligence must move away from monitoring ranked URLs and toward engineering content that AI systems can confidently reuse.

Table of Contents

Executive Summary: A New Foundation for Discovery

Traditional metrics describe a market that no longer exists

The new unit of competition is citable evidence

CMOs need a different operating system

Defining Search Marketing Intelligence for AI

Citability replaces visibility as the operating goal

Evidence clusters become the new unit of analysis

Semantic Density changes content economics

The Great Divide: Traditional SEO vs AI Intelligence

Google ranking and AI citation run on different selection logic

The Algomizer Framework: Engineering Citable Truth

Evidence Clusters organize proof around a claim

Semantic Density determines retrieval value

Retrieval systems reward structure, not ornament

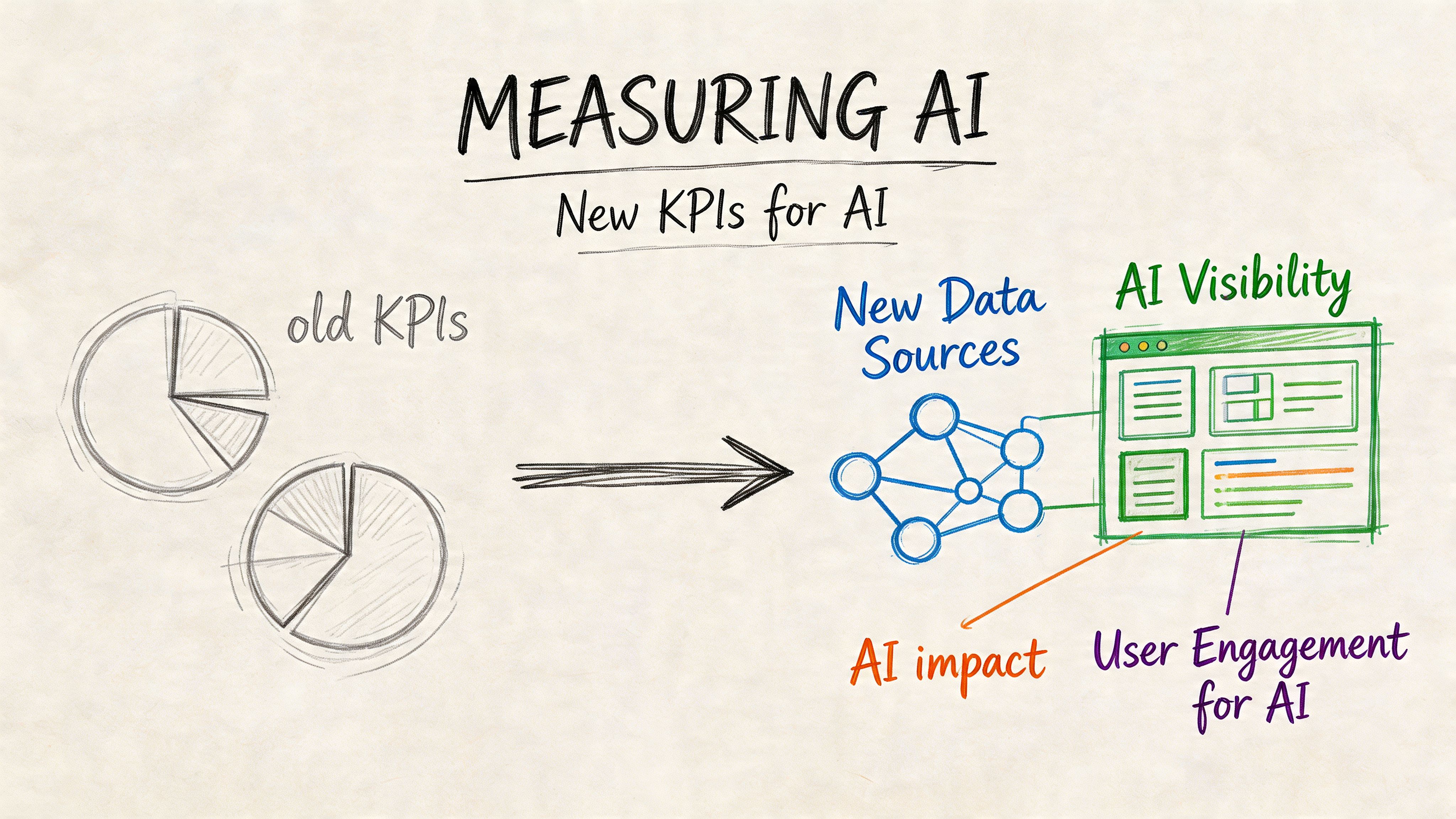

New Data Sources and KPIs for AI Visibility

Legacy analytics miss the answer layer

The KPI stack must measure recall and citation

The data source list is expanding

Implementation Examples and Common Pitfalls

Competitive gap analysis reveals AI citation opportunities

Most teams fail by funding GEO with SEO logic

A better implementation sequence

Conclusion: The Mandate for Enterprise Marketing Teams

Enterprise teams need an AI discovery mandate

Executive Summary: A New Foundation for Discovery

We are watching search split into two systems. One still ranks links. The other assembles answers.

Traditional metrics describe a market that no longer exists

Executive teams built search reporting around a simple chain of value: impression, click, session, conversion. That model breaks when the discovery event happens inside an AI-generated response and the user never visits the site. The operational question changes with it. We no longer ask only whether a page ranked. We ask whether the brand supplied evidence the model could retrieve, trust, and repeat.

This shift is larger than a Google interface update. Buyers now use answer engines to compress research, compare vendors, and validate claims before they ever open a results page. Teams that already study adjacent disciplines such as AI marketing analytics recognize the gap quickly. Web analytics records site behavior. It does not explain answer-layer inclusion, citation frequency, or why one source survives synthesis while another disappears.

The new unit of competition is citable evidence

Search marketing intelligence has to move from page performance to evidence performance.

That means the object we optimize is no longer just a URL. It is a claim plus the proof around it: original data, precise definitions, methodology, examples, comparisons, and supporting context that an AI system can reuse without distortion. This is the operating logic behind modern GEO and related practices such as LLMO for AI search visibility.

The strategic implication is direct. AI discovery is its own channel with its own retrieval mechanics, trust signals, and failure modes. A category page can rank well and still lose the commercial moment if a model cites a competitor’s benchmark, review corpus, or clearer explanation instead.

CMOs need a different operating system

The budget question is no longer "how do we get more ranked pages?" It is "how do we make our best claims the easiest claims for models to cite?"

We recommend three changes.

Measure answer-layer presence, not just SERP position. Ranking reports miss whether AI systems reused the brand’s evidence.

Fund evidence engineering, not volume publishing. More pages do not solve a proof deficit.

Treat AI discovery as a distinct demand channel. It requires its own content design, monitoring, and governance model.

The risk is strategic. Brands that keep using SEO-era assumptions will continue reporting stable organic performance while losing influence inside the interfaces buyers increasingly trust to make shortlists.

Defining Search Marketing Intelligence for AI

Search marketing intelligence for AI is the practice of measuring and influencing whether a brand’s evidence gets retrieved, synthesized, and cited inside answer engines.

Citability replaces visibility as the operating goal

The old objective was visible ranking. The new objective is authoritative reuse.

That distinction forces a different analytical model. AI systems don’t only reward the loudest domain or the broadest backlink profile. They look for content patterns that can survive retrieval and synthesis. In practice, that means detailed passages, direct answers, comparisons, concrete examples, and claims that can be corroborated.

This is why many conventional dashboards understate the underlying problem. They show impressions, clicks, and average position, but they don’t show whether an AI system extracted the brand’s evidence at all. Teams that want a wider measurement framework often explore adjacent disciplines such as AI marketing analytics, because classic SEO reporting can’t fully describe model-mediated discovery.

Evidence clusters become the new unit of analysis

The useful object is no longer the standalone web page. It is the evidence cluster: the set of passages, supporting assets, comparisons, and corroborating mentions that collectively make a claim easy to retrieve and safe to cite.

A practical search marketing intelligence stack for AI evaluates questions such as these:

Does the content answer a specific query cleanly? Ambiguous messaging performs poorly in retrieval systems.

Does the page contain proof, not just positioning? Marketing language without support doesn’t translate well into AI answers.

Can the claim be reinforced elsewhere? Repeated, consistent evidence improves citation confidence.

Is the information modular? Dense paragraphs often fail where well-structured sections succeed.

Practical rule: If a human reader has to infer the claim, an LLM usually won’t cite it.

That’s also why language model optimization deserves its own category. Teams that need a clearer baseline on that distinction can review what LLMO means in practice, then compare it against page-ranking assumptions inherited from SEO.

Semantic Density changes content economics

Keyword density was always a crude proxy. Semantic Density is the stronger lens. It measures how much verifiable, query-relevant substance a piece contains relative to its noise.

A semantically dense passage does three jobs at once. It states the claim. It provides enough surrounding detail to clarify meaning. It reduces ambiguity for retrieval systems.

That changes budget logic. A large archive of thin pages can look productive in a CMS and still produce weak AI visibility. A smaller body of highly structured, evidence-rich content can outperform it in the answer layer because it gives retrieval systems something usable.

The Great Divide: Traditional SEO vs AI Intelligence

Traditional SEO is built for ranked links. AI search intelligence is built for cited evidence. We treat that difference as operational, not semantic, because AI discovery does not behave like a thinner version of Google. It behaves like a separate channel with its own retrieval logic, answer formats, and failure modes.

The evidence is clear. According to eMarketer’s analysis of AI citation patterns, fewer than 10% of sources cited in ChatGPT, Gemini, and Copilot rank in Google’s top 10 organic results for the same query. CMOs should read that as a channel separation signal. Strong page rankings can coexist with weak AI presence.

That gap changes what we optimize for. SEO rewards page-level relevance, authority, and technical accessibility. GEO rewards whether a model can extract, verify, and reuse a claim with low ambiguity. Teams that still collapse these models into one workflow usually overinvest in rankings and underinvest in evidence design. For a clearer comparison of those operating models, see our breakdown of AEO vs SEO vs GEO.

A useful parallel appears in RepurposeMyWebinar’s coverage of B2B video SEO. The underlying lesson is not about video alone: discovery changes when the surface changes. In AI search, format discipline, explicit claims, and supporting proof often matter more than traditional rank signals because the model is selecting reusable passages rather than ordering blue links.

Google ranking and AI citation run on different selection logic

| Dimension | Traditional SEO Intelligence | AI Search Intelligence (GEO) |
|---|---|---|
| Primary goal | Rank pages | Earn citations and inclusion in answers |
| Core object | URL | Passage, claim, and evidence cluster |
| Success signal | Position and traffic | Recall, citation, and answer presence |
| Content priority | Topic coverage | Verifiable specificity |
| Optimization logic | Relevance plus authority signals | Retrieval fit plus corroboration |
| Measurement method | Ranking tools and Search Console data | Headless-browser observation and answer capture |
| Failure mode | Low rankings | Missing from answers despite strong rankings |
| Strategic risk | Traffic loss to competitors | Brand exclusion from the answer layer |

The table matters because it exposes a management error. Many enterprise teams still assign AI visibility to the SEO function without changing the unit of analysis. That keeps reporting focused on URLs and impressions even when the system making the decision is evaluating claims and support. The result is predictable. Brands publish more content, see limited citation lift, and misread the problem as an authority gap.

We recommend a different operating model.

Editorial teams write for extraction. Each important claim should be stated directly, supported immediately, and phrased consistently across assets.

Analysts measure answer inclusion, citation frequency, and claim recall across AI systems, not rankings alone.

Engineering teams structure pages so claims, definitions, comparisons, and proof are easy to parse at the passage level.

Brand teams enforce message consistency across site content, documentation, third-party mentions, and executive commentary.

The strategic divide is straightforward. SEO asks whether a page can win a place in a list. AI intelligence asks whether a model trusts our evidence enough to reuse it. In the answer layer, that second question decides whether the brand is visible at all.

The Algomizer Framework: Engineering Citable Truth

Citable truth is engineered, not hoped for. The effective model combines structured proof with language patterns that retrieval systems can reliably decompose.

Evidence Clusters organize proof around a claim

An Evidence Cluster is a structured collection of assets that verify one important statement about a company, product, or category position. The cluster can include a comparison page, a documentation segment, a founder or expert quote published in a credible context, a use-case explainer, and a supporting FAQ.

The key is coherence. Every asset in the cluster reinforces the same claim without drifting into vague marketing language. When AI systems encounter that repetition across contexts, the claim becomes easier to retrieve and harder to ignore.

A strong cluster usually includes:

A canonical claim page that states the point directly.

Supporting explanation that adds definitions, use cases, and distinctions.

Comparative framing that places the claim against alternatives.

Corroborating mentions that echo the same language outside the primary page.

This is different from content marketing volume plays. The objective isn’t to publish more. The objective is to remove doubt.

Semantic Density determines retrieval value

Semantic Density describes the ratio of useful, unambiguous evidence to filler. High-density content reduces the amount of interpretive work the model has to perform.

That’s a mechanical advantage. Retrieval systems prefer passages that already contain the answer shape the user requested. If the prompt asks for a comparison, the best candidate text often contains explicit comparison language. If the prompt asks for a recommendation, the best candidate text often combines criteria, differentiation, and proof in the same local context.

A working GEO process therefore prioritizes:

Claim compression. Put the core point in plain language near the top of a relevant section.

Proof adjacency. Place supporting detail close to the claim rather than scattering it.

Terminology discipline. Keep important labels consistent across pages and assets.

Modular formatting. Use headings, lists, and compact sections that can be lifted cleanly.

Dense content doesn’t mean long content. It means a passage carries enough evidence to stand on its own.
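The ratio can be approximated with a very simple heuristic. The sketch below is a minimal illustration in Python, assuming a sentence counts as evidence when it contains a figure, a comparison marker, or an explicit definition. The pattern list and the names `split_sentences` and `semantic_density` are our assumptions for illustration, not a standard metric or a published scoring method.

```python
import re

# Hypothetical evidence markers. These patterns are illustrative assumptions,
# not a definition taken from any retrieval system.
EVIDENCE_PATTERNS = [
    re.compile(r"\d"),  # figures, dates, percentages
    re.compile(r"\b(according to|measured|benchmark|compared (to|with)|versus|vs)\b", re.I),
    re.compile(r"\b(is defined as|means|refers to)\b", re.I),  # explicit definitions
]


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def semantic_density(text: str) -> float:
    """Share of sentences that carry at least one evidence marker (0.0 to 1.0)."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    evidence = sum(
        1 for s in sentences if any(p.search(s) for p in EVIDENCE_PATTERNS)
    )
    return evidence / len(sentences)


thin = "We are the leading platform. Our solution is world class. Customers love us."
dense = (
    "Plan A costs $40 per seat. Compared with Plan B, it adds audit logs. "
    "Setup took 3 days in our pilot."
)
print(semantic_density(thin))   # 0.0
print(semantic_density(dense))  # 1.0
```

A score like this is only a triage signal. It flags passages that are mostly positioning so an editor can decide where proof needs to move closer to the claim.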

Retrieval systems reward structure, not ornament

Most legacy SEO copy still over-explains and under-proves. It spends too much space signaling relevance and too little space supplying usable evidence.

That’s why the framework treats AI optimization as an information architecture problem. The win condition is a content system that turns important brand truths into machine-friendly retrieval targets.

New Data Sources and KPIs for AI Visibility

AI visibility requires new measurement because standard search dashboards can’t see most answer-layer behavior.

According to eSearch Logix’s digital marketing statistics roundup, global spending on search advertising is projected to reach $351.55 billion in 2026, Google still holds 89.74% of traditional search, and AI referral traffic has surged about 9.7x since 2024. That makes the measurement problem urgent. Teams are allocating budget inside a search economy that is still dominated by Google but increasingly influenced by AI intermediaries.

Legacy analytics miss the answer layer

Google Search Console, GA4, Google Ads, and common SEO suites remain useful, but they don’t directly reveal how a brand appears across ChatGPT, Gemini, Perplexity, or AI Overviews in live user contexts.

That blind spot creates false confidence. A team can see stable rankings and healthy paid performance while losing the first recommendation moment inside AI interfaces. Search marketing intelligence must therefore inspect rendered outputs, not just platform-reported aggregates.

When organizations need to convert unstructured assets into analyzable evidence, tools outside the classic SEO stack can help. Teams handling technical reports, whitepapers, and source documents often use workflows similar to PDF AI’s extraction process to turn buried information into reusable, indexable content components.

The KPI stack must measure recall and citation

The most useful AI-era KPIs are operational, not vanity metrics.

| KPI | What it measures | Why it matters |
|---|---|---|
| Citation Frequency | How often the brand is cited across answer engines | Indicates reusable authority |
| Share of AI Voice | How often the brand appears relative to competitors | Shows category presence in generated discovery |
| Answer Snippet Accuracy | Whether brand facts are represented correctly | Protects perception and conversion quality |
| Knowledge Base Penetration | How deeply brand claims appear across prompts and entities | Reveals whether core messages are retrievable |

These KPIs require direct observation. Headless browsers such as Playwright or Puppeteer can simulate real sessions, render dynamic interfaces, capture answer outputs, and preserve evidence for review. That approach is more faithful to what buyers see.
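Once answers have been captured, the first two KPIs reduce to simple counting. The following is a minimal sketch, assuming each captured answer has already been parsed into a list of cited brands. The `CapturedAnswer` record, its fields, and the brand names are hypothetical, and the two formulas are one reasonable reading of the KPI definitions rather than an industry standard.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class CapturedAnswer:
    """One rendered answer saved from an answer engine (fields are assumptions)."""
    engine: str               # e.g. "chatgpt", "gemini"
    prompt: str
    cited_brands: list[str]   # brands named or linked in the answer


def citation_frequency(answers: list[CapturedAnswer], brand: str) -> float:
    """Fraction of captured answers that cite the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for a in answers if brand in a.cited_brands)
    return hits / len(answers)


def share_of_ai_voice(answers: list[CapturedAnswer], brand: str) -> float:
    """The brand's citations divided by all brand citations in the sample."""
    counts = Counter(b for a in answers for b in a.cited_brands)
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


answers = [
    CapturedAnswer("chatgpt", "best crm for startups", ["Acme", "Globex"]),
    CapturedAnswer("gemini", "best crm for startups", ["Globex"]),
    CapturedAnswer("perplexity", "acme vs globex", ["Acme", "Globex", "Initech"]),
    CapturedAnswer("chatgpt", "crm migration risks", []),
]
print(citation_frequency(answers, "Acme"))  # cited in 2 of 4 answers
print(share_of_ai_voice(answers, "Acme"))   # 2 of 6 brand citations
```

Both figures depend on the prompt sample, so the prompt set should stay fixed between measurement runs if the trend is meant to be comparable.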

The data source list is expanding

The modern source map for search marketing intelligence includes more than search consoles and ad dashboards. It also includes:

Rendered AI answers across platforms

Prompt-level brand comparisons

Citation source capture

Knowledge consistency checks across repeated queries
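Two of these sources, citation source capture and consistency checks across repeated queries, can be sketched with the Python standard library alone. The example below assumes the answer text has already been captured as plain strings. The URL pattern, the function names, and the choice of Jaccard overlap as the consistency measure are our assumptions for illustration.

```python
import re
from urllib.parse import urlparse

# Simple URL matcher: stops at whitespace and common closing punctuation.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")


def cited_domains(answer_text: str) -> set[str]:
    """Extract the set of domains linked in one captured answer."""
    domains = set()
    for url in URL_PATTERN.findall(answer_text):
        host = urlparse(url.rstrip(".,;")).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def consistency(runs: list[str]) -> float:
    """Mean pairwise Jaccard overlap of cited domains across repeated runs."""
    sets = [cited_domains(r) for r in runs]
    pairs = [(a, b) for i, a in enumerate(sets) for b in sets[i + 1:]]
    if not pairs:
        return 1.0  # a single run is trivially consistent with itself
    scores = [len(a & b) / len(a | b) if (a | b) else 1.0 for a, b in pairs]
    return sum(scores) / len(scores)


runs = [
    "See https://www.example.com/report and https://docs.example.org/guide.",
    "Source: https://example.com/pricing",
]
print(sorted(cited_domains(runs[0])))  # ['docs.example.org', 'example.com']
print(consistency(runs))               # 0.5
```

A low score across repeated runs of the same prompt suggests the model has no stable source for the claim, which is usually where an evidence cluster is missing.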

CMOs don’t need more dashboards. They need a reporting layer that reflects how AI-mediated discovery happens.

Implementation Examples and Common Pitfalls

The practical path starts with competitive gaps. AI systems often cite the brand that explains a contested buying decision most clearly.

Competitive gap analysis reveals AI citation opportunities

Effective competitive intelligence in search can drive 25-40% ROAS improvements, and brands that dominate competitor gaps in traditional SERPs are cited 65% more often in AI responses, according to Wildnet Technologies’ search intelligence analysis. The significance isn’t limited to paid efficiency. It shows that competitor gap ownership carries forward into AI recall.

A B2B SaaS team applying this logic wouldn’t begin by publishing another generic “what is” article. It would inspect comparison prompts, implementation questions, pricing objections, migration concerns, and category alternatives. The goal is to identify where rivals leave factual gaps or rely on thin messaging.

A focused implementation model looks like this:

Comparison prompts first. Queries that ask “best,” “vs,” or “alternative” often force the model to choose.

Evidence before opinion. Product claims need surrounding proof, not slogans.

Cross-functional review. Product marketing, sales, and content teams should validate that the answer mirrors real buyer objections.
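The gap-finding step in this model can be expressed as a small filter over observed answers. This is a minimal sketch, assuming observations are stored as a mapping from prompt to the set of brands cited for it. The data, the brand names, and the ranking rule (prompts with more competitors cited come first) are hypothetical choices, not a prescribed method.

```python
def citation_gaps(
    observations: dict[str, set[str]], brand: str
) -> list[tuple[str, list[str]]]:
    """Return prompts where at least one competitor is cited and the brand is not."""
    gaps = []
    for prompt, brands in observations.items():
        competitors = sorted(brands - {brand})
        if brand not in brands and competitors:
            gaps.append((prompt, competitors))
    # Assumed priority: prompts with the most competitors cited come first,
    # with ties broken alphabetically by prompt.
    gaps.sort(key=lambda g: (-len(g[1]), g[0]))
    return gaps


observations = {
    "acme vs globex pricing": {"Globex"},
    "best crm alternative to globex": {"Acme", "Initech"},
    "crm migration checklist": {"Globex", "Initech"},
    "what is a crm": set(),
}
for prompt, competitors in citation_gaps(observations, "Acme"):
    print(prompt, competitors)
```

Prompts where nobody is cited are excluded on purpose. They may still be opportunities, but they are not competitive gaps in the sense described above.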

For teams refining that process around answer engines specifically, guidance on how to rank in ChatGPT helps clarify what strong source selection behavior looks like in practice.

Most teams fail by funding GEO with SEO logic

The common mistakes are structural.

First, teams re-label the SEO budget as an AI budget without changing deliverables. They keep publishing broad educational pages and expect citation gains. That rarely works because AI systems reward specificity and proof shape, not legacy volume habits.

Second, teams over-trust old dashboards. Rankings and impressions can remain stable while AI answers exclude the brand from important recommendation moments.

Third, teams isolate ownership. GEO can’t sit only with the SEO team. It needs editorial discipline, technical support, product accuracy, and active competitive analysis.

The expensive mistake isn’t underinvesting in AI discovery. It’s investing with the wrong operating model.

A better implementation sequence

A stronger sequence is operationally simple:

Audit the prompts that matter across research, comparison, and decision stages.

Identify where the current brand narrative lacks direct, citable proof.

Build evidence clusters around the highest-value claims.

Measure answer outputs repeatedly and refine the weak passages.

That process turns search marketing intelligence into a decision system instead of a reporting ritual.

Conclusion: The Mandate for Enterprise Marketing Teams

Enterprise marketing teams need a new mandate. They must treat AI discovery as a primary surface for brand visibility, not as a side effect of SEO.

Enterprise teams need an AI discovery mandate

The core lesson is now clear. Search marketing intelligence can’t stop at ranked links because buyers increasingly encounter synthesized answers before they ever evaluate a results page. When that happens, the deciding asset isn’t page authority alone. It is citable evidence.

For CMOs, the immediate actions are straightforward:

Audit current AI visibility. Review how the brand appears, or fails to appear, across major answer engines.

Reallocate a pilot budget. Move part of legacy SEO spend into a dedicated GEO initiative with clear citation goals.

Modernize measurement. Use a stack that captures rendered outputs, source citations, and answer accuracy.

This shift is bigger than channel optimization. It is business continuity for digital discovery. The organizations that adapt will shape the answers buyers receive. The organizations that don’t will keep optimizing pages that fewer prospects ever reach.

Chapter 1 matters because it establishes the new rules of AI discovery. Teams that want a clearer baseline before building an execution plan can revisit the logic above, then book a complimentary visibility assessment with Algomizer to see how their brand appears across AI-generated answers and where the highest-impact citation opportunities sit.