AI Agents for SEO: 2026 Enterprise Strategy Guide

Explore how AI agents for SEO are reshaping enterprise strategy in 2026. Learn about agent architectures, use cases, and comparisons to managed GEO services.

The Role of Autonomous Systems in Generative Engine Optimization

May 2026

The surprise isn't that AI agents for SEO work. The surprise is that their strongest results come from teams that stop treating them as strategy. According to 2025 AI SEO statistics, 67% of marketers report improved content quality, AI boosts search rankings by 49.2%, and AI-driven traffic surged 527% from January to May 2025. Those numbers don't validate blind automation. They validate directed automation.

Legacy SEO framed software as an assistant to human workflow. The new reality is harsher. Search discovery now happens inside systems such as ChatGPT, Gemini, and Perplexity, where retrieval, synthesis, and citation behavior reshape what visibility means. In that environment, AI agents for SEO are execution engines that can move faster than human teams across content, on-page optimization, and technical maintenance. They still don't define market narrative, competitive positioning, or source authority on their own.

That distinction matters at enterprise scale. A tool can rewrite headings. A tool can update metadata. A tool can trigger a site audit. A tool can't decide which claims deserve reinforcement across owned, earned, and structured content so that language models recognize the brand as the best answer. That is a strategic function.


Executive Summary: AI Agents Are Tactical Tools, Not Strategic Solutions

AI agents create operational efficiency, not judgment

AI agents for SEO are already useful. They compress production cycles, enforce consistency across large page sets, and reduce the volume of manual optimization work that once absorbed senior team capacity. Enterprise buyers should adopt them for those reasons.

The strategic mistake is broader than overestimating automation. Many organizations confuse faster execution with stronger search positioning. Those are different outcomes. An agent can improve throughput. It cannot determine which narratives deserve to exist, which claims are credible enough to earn citation, or which content structures strengthen authority across AI search systems.

That distinction matters more now because the market is standardizing around AI-assisted execution. As noted earlier, widespread adoption has already made basic automation common. Once competitors can generate acceptable drafts, refresh metadata, and maintain page hygiene with similar tooling, durable advantage shifts to orchestration, governance, and authority design.

Practical rule: If an AI agent can execute the same workflow for every competitor, that workflow will not produce lasting differentiation.

The more pressing enterprise question is scope

The enterprise decision is not whether agents should run the entire search function. It is which parts of the search stack are structured enough to automate without introducing brand, compliance, or visibility risk.

That scope is narrower than vendor messaging implies. Agents perform well in environments with stable rules, clear formatting standards, and large volumes of repeatable work. They perform less reliably where success depends on editorial judgment, competitive interpretation, or cross-channel narrative control. Leaders who separate those layers get measurable efficiency. Leaders who collapse them into one system usually get more output, but not better market position.

Teams evaluating long-term fit should also understand how large language models work, because an agent inherits the same constraints around reasoning consistency, retrieval quality, and context handling. Better orchestration improves execution. It does not eliminate model limitations.

| Strategic layer | AI agents for SEO | Human strategic function |
|---|---|---|
| Execution speed | Strong | Moderate |
| Cross-page consistency | Strong | Moderate |
| Narrative positioning | Weak | Strong |
| Competitive reframing | Weak | Strong |
| Authority engineering for LLM citation | Partial | Strong |

This is the point many SEO programs miss. AI agents belong in the execution layer. A managed GEO service addresses the larger system: entity clarity, citation probability, answer-surface coverage, governance, and ongoing adaptation to AI-native discovery environments. Agents are a tool inside that stack, not the strategy itself.

The market rewards machine-readable authority, not automation in isolation.

Deconstructing Agentic SEO Architectures

Agentic SEO is a system, not a chatbot

An SEO agent isn't a single model answering prompts. It is an orchestrated architecture that plans, calls tools, stores context, evaluates outputs, and loops until a task is complete. That mechanical distinction explains the current performance gap between basic AI assistants and modern agentic platforms.

Modern vendors describe a pipeline, not a prompt box. According to Frase research on AI agents for SEO, these systems can automate 80–90% of the content lifecycle and reduce manual workflow steps by 50–70% for content production and on-page optimization. The practical result is compression from weeks to hours for workflows that used to bounce between strategists, writers, editors, SEO specialists, and CMS managers.

For leaders who need a technical foundation before evaluating vendors, this explanation of how large language models work is useful because it clarifies why reasoning quality, memory, and retrieval behavior shape agent performance more than raw model branding.

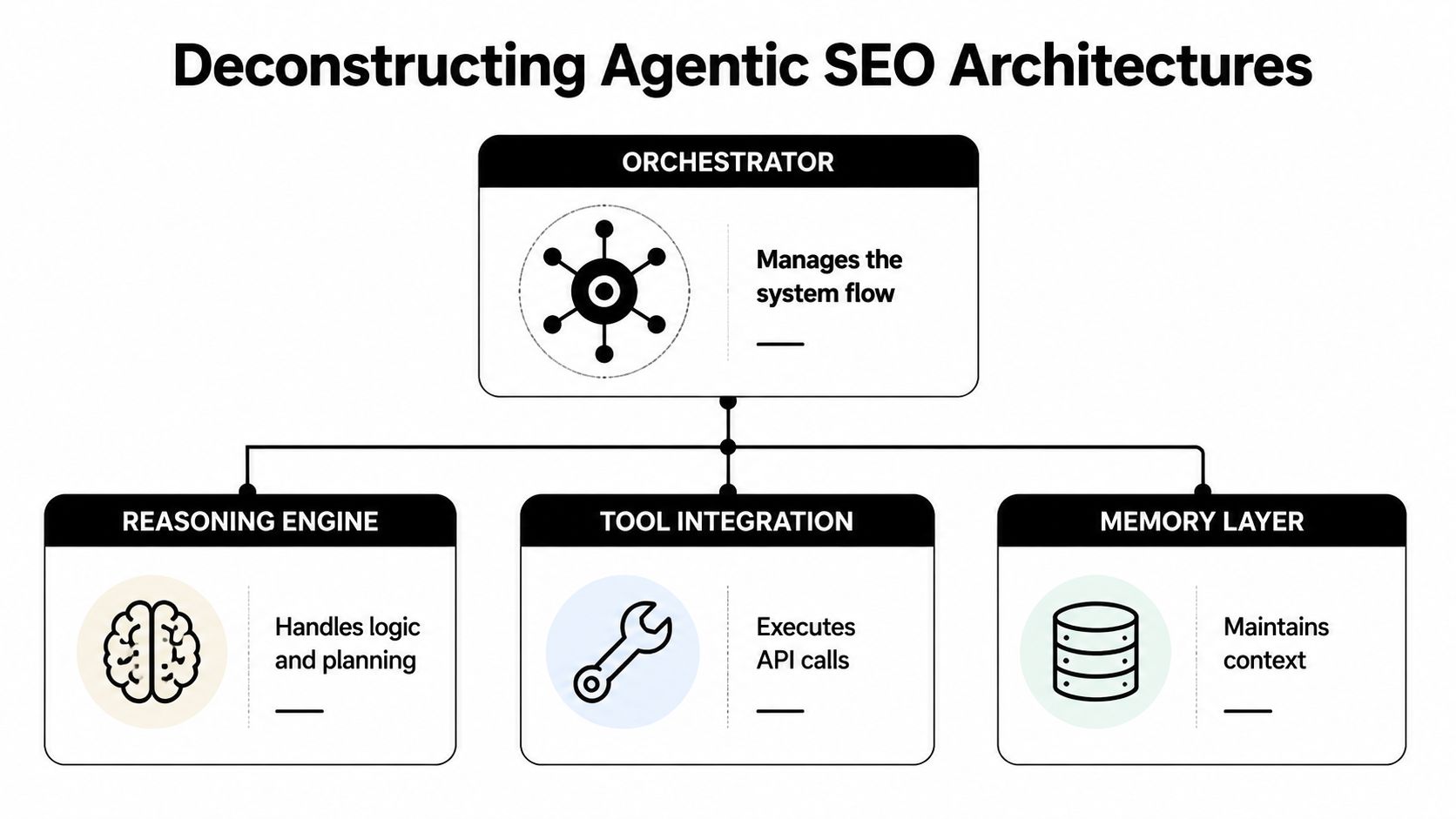

A working architecture usually contains four components:

Orchestrator. This layer decides sequence. It determines whether the system should begin with SERP analysis, entity extraction, draft generation, or a technical crawl.

Reasoning engine. This is the decision core. It interprets goals, compares options, and selects next actions.

Tool integration. APIs, crawlers, keyword datasets, CMS connectors, and analytics endpoints turn reasoning into action.

Memory layer. Stored context preserves page history, prior recommendations, brand rules, and content state across sessions.
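To make the four components concrete, here is a minimal sketch in Python. Every name in it (`Memory`, `reasoning_engine`, `orchestrate`, and the three tools) is hypothetical, and the reasoning engine is reduced to "pick the first unfinished step" where a production system would call a language model. It shows the shape of the loop described above (plan, call a tool, store context, repeat until done), not any vendor's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Hypothetical names throughout: Memory, reasoning_engine, orchestrate, and the
# tools below are illustrative stand-ins, not any vendor's API.
Tool = Callable[[Dict[str, str]], str]

@dataclass
class Memory:
    """Memory layer: state that persists across runs (kept in-process here)."""
    completed: List[str] = field(default_factory=list)
    notes: Dict[str, str] = field(default_factory=dict)

def reasoning_engine(plan: List[str], memory: Memory) -> Optional[str]:
    """Reasoning engine (simplified): select the next unfinished step, or None when done."""
    for step in plan:
        if step not in memory.completed:
            return step
    return None

def orchestrate(plan: List[str], tools: Dict[str, Tool], memory: Memory,
                max_steps: int = 10) -> Memory:
    """Orchestrator: the plan fixes the sequence; loop decide -> act -> remember."""
    for _ in range(max_steps):  # hard cap so a faulty plan cannot loop forever
        step = reasoning_engine(plan, memory)
        if step is None:
            break
        memory.notes[step] = tools[step](memory.notes)  # tool integration
        memory.completed.append(step)
    return memory

# Stand-ins for a crawler, an entity extractor, and a CMS connector.
tools: Dict[str, Tool] = {
    "crawl": lambda notes: "42 pages crawled",
    "extract_entities": lambda notes: "entities from " + notes["crawl"],
    "update_metadata": lambda notes: "title changes queued for review",
}

memory = orchestrate(["crawl", "extract_entities", "update_metadata"], tools, Memory())
print(memory.completed)  # ['crawl', 'extract_entities', 'update_metadata']
```

Calling `orchestrate` a second time with the same `Memory` performs no repeated work, because completed steps persist in the memory layer. The `max_steps` cap is a design choice worth keeping in any real loop: it bounds what a faulty plan can do.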


Automated Insight Synthesis explains why agents outperform scripts

The old automation model followed fixed rules. If title length exceeds threshold, shorten it. If H1 is missing, add it. That approach still matters, but it doesn't explain agentic gains.
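For contrast, the fixed-rule model can be sketched in a few lines. This is an illustration under stated assumptions: the 60-character title threshold is a common rule of thumb rather than a published search-engine limit, and the page dictionary and its keys are hypothetical.

```python
# Assumption: the 60-character threshold is a common rule of thumb, not a limit
# published by any search engine; the page dict keys are hypothetical.
TITLE_MAX = 60

def apply_fixed_rules(page: dict) -> dict:
    """Legacy script: each rule fires on its own, with no view of other pages,
    page history, or business priority."""
    fixed = dict(page)  # work on a copy so the original record is preserved
    # Rule 1: if the title exceeds the threshold, cut it at the last word that fits.
    if len(fixed["title"]) > TITLE_MAX:
        fixed["title"] = fixed["title"][:TITLE_MAX].rsplit(" ", 1)[0].rstrip(" ,:;-")
    # Rule 2: if the H1 is missing, reuse the title as a naive fallback.
    if not fixed.get("h1"):
        fixed["h1"] = fixed["title"]
    return fixed

page = {"title": "Enterprise AI Agents for SEO: Architectures, Use Cases, and Governance", "h1": ""}
fixed = apply_fixed_rules(page)
```

Run on this hypothetical 70-character title, the script cuts it to "Enterprise AI Agents for SEO: Architectures, Use Cases, and", leaving a dangling "and", then copies that truncated title into the empty H1. Each rule does what it says; neither knows what the other did or what the page is for.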

A more accurate model is Automated Insight Synthesis, a research framing for how agents merge multiple evidence streams into one decision path. The system doesn't merely detect one issue. It synthesizes relationships among ranking pages, internal content gaps, entity coverage, search intent patterns, and publishing constraints.

That synthesis changes output quality in three ways:

It selects from competing priorities. A page may need schema work, internal links, and content expansion. The agent can rank those interventions.

It reasons across dependencies. Content updates can trigger metadata changes, which can trigger link redistribution, which can trigger republishing.

It maintains continuity. The same page isn't treated as a blank slate every time the workflow runs.
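The first two behaviors, ranking interventions and respecting dependencies, map onto a familiar technique: a topological sort with a priority queue over the items that are ready to run. The sketch below uses Python's standard `graphlib` module; the intervention names, priority scores, and dependency edges are hypothetical, and nothing in the source says agents implement synthesis this way.

```python
import heapq
from graphlib import TopologicalSorter

def plan_interventions(priority: dict, depends_on: dict) -> list:
    """Order interventions so prerequisites always run first; among the
    interventions that are ready, take the highest-priority one next."""
    sorter = TopologicalSorter(depends_on)  # maps each node to its prerequisites
    sorter.prepare()
    ready: list = []  # min-heap of (-priority, name), so the highest score pops first
    order = []
    while sorter.is_active():
        for node in sorter.get_ready():
            heapq.heappush(ready, (-priority[node], node))
        _, node = heapq.heappop(ready)
        order.append(node)
        sorter.done(node)
    return order

# Hypothetical scores and dependencies for one page.
priority = {"content_update": 5, "metadata": 4, "schema": 3, "internal_links": 2, "republish": 1}
depends_on = {
    "content_update": set(),
    "schema": set(),
    "metadata": {"content_update"},
    "internal_links": {"metadata"},
    "republish": {"internal_links", "schema"},
}
print(plan_interventions(priority, depends_on))
# ['content_update', 'metadata', 'schema', 'internal_links', 'republish']
```

Schema work is independent, so it slots in by score, while republishing waits for both of its prerequisites.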

The strongest AI agents for SEO don't just produce drafts. They preserve state, compare alternatives, and act through connected tools.

Where the architecture breaks

Enterprise buyers should inspect failure modes before inspecting dashboards. Agentic systems are vulnerable when the memory layer stores weak assumptions, when the orchestrator lacks business context, or when tool access outruns governance.

Three limitations show up repeatedly:

Context decay: An agent can retain stale assumptions about priority pages or market positioning.

False optimization: A system can improve a local metric while harming broader authority or conversion intent.

Tool overreach: A live editor or CMS connection can push changes faster than internal review processes can validate them.

That is why AI agents for SEO belong in a supervised architecture. Their advantage is mechanical throughput. Their risk is executing the wrong objective with perfect consistency.
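Two of the failure modes above can be expressed as explicit guardrails. The sketch assumes memory entries carry a `stored_at` timestamp and that each change reports its effect on a target metric and on one guard metric, such as conversion rate. The 90-day window and 2% tolerance are illustrative values, not recommendations.

```python
from datetime import datetime, timedelta

# Illustrative thresholds only: a 90-day freshness window for stored assumptions
# and a 2% tolerated relative drop in a guard metric such as conversion rate.
MAX_AGE = timedelta(days=90)
GUARD_TOLERANCE = 0.02

def stale_assumptions(memory: dict, now: datetime) -> list:
    """Context decay: return memory keys whose stored_at timestamp is older than MAX_AGE."""
    return sorted(key for key, (_value, stored_at) in memory.items()
                  if now - stored_at > MAX_AGE)

def accept_change(target_delta: float, guard_before: float, guard_after: float) -> bool:
    """False optimization: accept only if the target metric improved and the guard
    metric fell by no more than GUARD_TOLERANCE (relative). Assumes guard_before > 0."""
    guard_drop = (guard_before - guard_after) / guard_before
    return target_delta > 0 and guard_drop <= GUARD_TOLERANCE

# Hypothetical memory entries: (value, stored_at).
memory = {
    "priority_pages": (["/pricing", "/platform"], datetime(2025, 12, 1)),
    "positioning": ("enterprise-first", datetime(2026, 4, 15)),
}
print(stale_assumptions(memory, now=datetime(2026, 5, 1)))  # ['priority_pages']
```

Stale entries go back to a human for re-validation rather than being silently reused, and a change that lifts a local metric while eroding the guard metric is rejected.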

Mapping Agent Capabilities to High-Value SEO Outcomes

Content velocity becomes operational

Agents increase output quality at scale when they handle recurring analysis, enforce content standards, and keep optimization continuous across large page inventories.

The clearest operational example comes from Bika's analysis of AI agents for SEO. A solo marketer can configure automated scanning of 100 pages weekly, with agents updating meta tags, optimizing headings, and checking internal links, which reduces manual work by 60%. The same source notes that 65% of SEO professionals report better results when incorporating AI tools.

Most enterprise bottlenecks aren't caused by lack of ideas; they're caused by unresolved backlog. Teams know which pages need attention, but they can't process them fast enough. AI agents remove that bottleneck by turning page review into a standing operational loop instead of a quarterly project.
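A standing operational loop can be as simple as a rotating weekly batch. The sketch below assumes a flat list of page URLs and reuses the 100-pages-per-week figure from the example above; the 1,250-page inventory is hypothetical.

```python
import math

BATCH_SIZE = 100  # pages per weekly scan, as in the solo-marketer example above

def weekly_batch(pages: list, week: int, batch_size: int = BATCH_SIZE) -> list:
    """Return the slice of the inventory to scan in a given week (week 0 = first run),
    cycling so every page is revisited once per full pass."""
    if not pages:
        return []
    weeks_per_pass = math.ceil(len(pages) / batch_size)
    start = (week % weeks_per_pass) * batch_size
    return pages[start:start + batch_size]

# Hypothetical inventory of 1,250 URLs: a full pass takes ceil(1250 / 100) = 13 weeks.
inventory = [f"/page-{i}" for i in range(1250)]
this_week = weekly_batch(inventory, week=0)
```

At that rate the hypothetical inventory is fully revisited every 13 weeks, roughly once a quarter, and the cadence keeps running without anyone scheduling a new project.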

On-page maintenance stops consuming senior talent

Agents provide economic advantage when they absorb repetitive optimization work that otherwise crowds out strategic analysis.

Examples include:

Bulk metadata revision: Title tags and descriptions can be updated across dozens of pages with rule-based consistency.

Heading normalization: Agents can align page structure to target intent without waiting for manual copy reviews.

Internal link hygiene: Crawl paths and contextual linking can be checked continuously rather than during audit bursts.

Competitor monitoring: Ranking movement and content shifts can trigger action queues automatically.
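Internal link hygiene is one of the easier checks to run continuously. A minimal sketch, assuming the crawl has already produced a map from each page URL to the hrefs found on it: it resolves relative links with the standard library's `urljoin`, strips fragments with `urldefrag`, and reports crawled pages that no other crawled page links to. The URLs are placeholders.

```python
from urllib.parse import urldefrag, urljoin

def orphan_pages(links: dict) -> list:
    """Given {page_url: [href, ...]} from a crawl, return crawled pages that no
    other crawled page links to. External and uncrawled targets are ignored."""
    inbound = {page: 0 for page in links}
    for page, hrefs in links.items():
        # Resolve relative hrefs against the source page and drop #fragments,
        # then de-duplicate so each linking page counts once per target.
        targets = {urldefrag(urljoin(page, href)).url for href in hrefs}
        for target in targets:
            if target in inbound and target != page:
                inbound[target] += 1
    return sorted(page for page, count in inbound.items() if count == 0)

crawl = {
    "https://example.com/": ["/pricing", "/blog/agents"],
    "https://example.com/pricing": ["/", "https://other.example.org/x"],
    "https://example.com/blog/agents": ["../pricing#plans", "/"],
    "https://example.com/legacy-landing": ["/"],
}
print(orphan_pages(crawl))  # ['https://example.com/legacy-landing']
```

Counting each linking page once per target, rather than each anchor, keeps a page with repeated navigation links from inflating the count.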

A helpful industry resource for teams comparing workflow options is this guide to best AI tools for local SEO, especially for organizations managing distributed location pages and local discovery surfaces.

Senior SEO talent shouldn't spend the week repairing metadata patterns that software can detect and remediate.

AI answer visibility improves when agents enforce coverage

The overlooked value of AI agents for SEO isn't only SERP efficiency. It's coverage discipline. Language models cite sources that are legible, internally coherent, and semantically complete. Agents help enforce those conditions across page sets.

That doesn't mean an agent guarantees citation. It means the system can improve the preconditions for citation by standardizing entities, headings, metadata, internal links, and supporting topic coverage. Enterprises that want to adapt classic optimization to AI answer environments should study this analysis of optimizing for AI Overviews, because the core shift is from keyword insertion to retrieval readiness.
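Standardizing entities can start with a single source of truth for structured data. The sketch below emits a schema.org `Organization` block as JSON-LD so every template embeds identical brand values. The brand details are placeholders, and this is one assumed approach: the source does not prescribe structured data, and consistent markup improves legibility without guaranteeing citation.

```python
import json

def organization_jsonld(name: str, url: str, description: str, same_as: list) -> str:
    """Build one canonical schema.org Organization block so every template that
    embeds it describes the brand entity with identical values."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": sorted(set(same_as)),  # de-duplicated, stable order for clean diffs
    }
    return json.dumps(data, indent=2, ensure_ascii=False)

# Hypothetical brand values; in practice these would come from one approved source.
snippet = organization_jsonld(
    name="Example Corp",
    url="https://www.example.com",
    description="Enterprise analytics software.",
    same_as=["https://www.linkedin.com/company/example", "https://en.wikipedia.org/wiki/Example"],
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```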

A concise capability map makes the division clearer:

| Outcome | What agents do well | What still needs human direction |
|---|---|---|
| Faster publishing | Build drafts, outlines, update cycles | Decide narrative angle |
| Better on-page consistency | Standardize tags, headings, links | Protect brand nuance |
| Expanded topic coverage | Detect gaps and suggest additions | Prioritize market-facing themes |
| AI answer readiness | Improve machine legibility | Design authority strategy |

The enterprise lesson is straightforward. Agents increase the amount of work a strong SEO team can complete. They don't decide which truths the market should associate with the brand.

A Phased Roadmap for Enterprise Agent Adoption

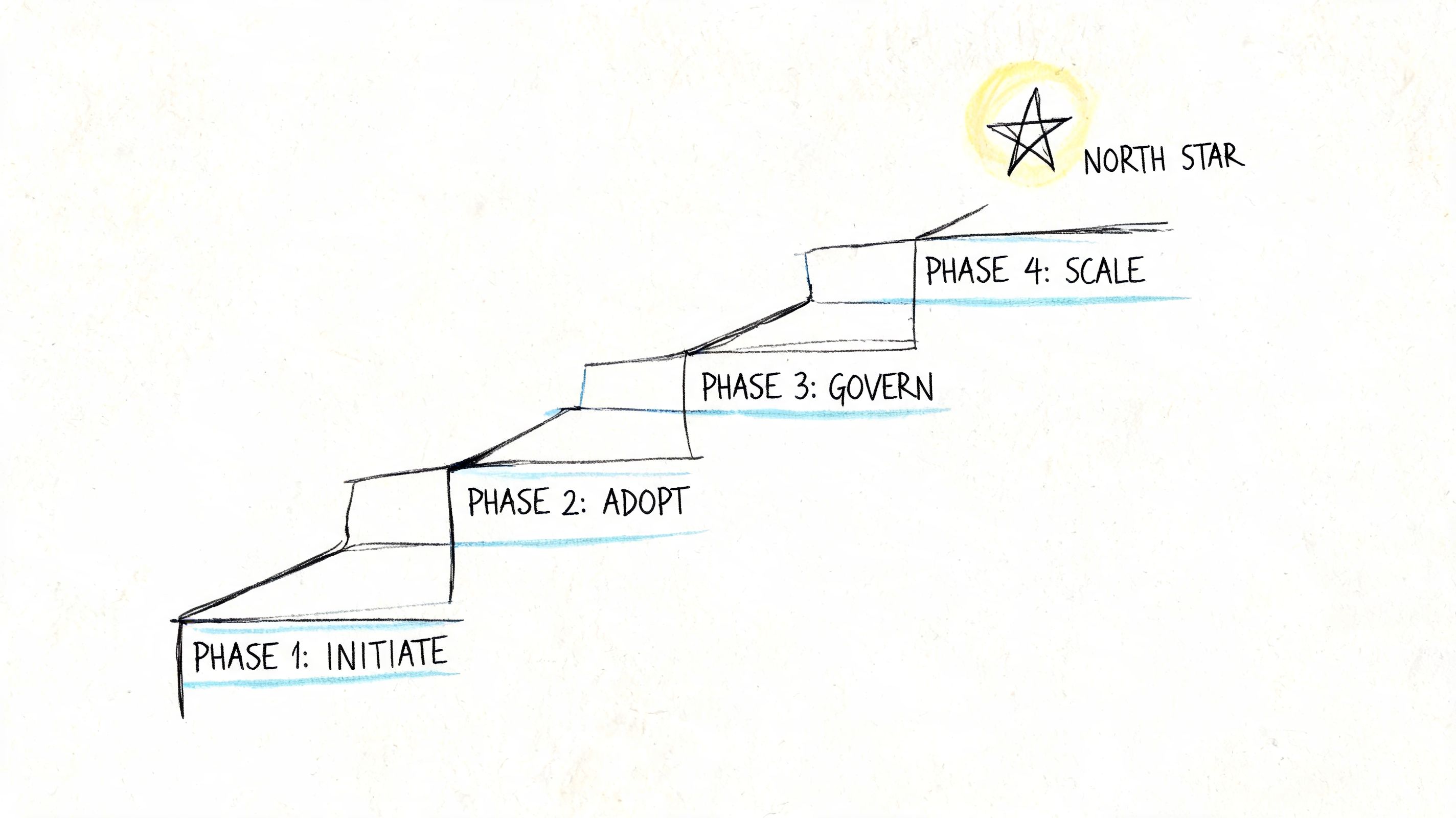

Phase one starts with controlled surfaces

Enterprise adoption works when organizations begin with bounded workflows, strong review rights, and limited publishing authority.

The first deployment target should be low-risk, high-repeatability work. Metadata correction, internal link validation, heading refinement, and content inventory analysis are ideal because they expose teams to agent behavior without handing strategic control to the system.

This phase needs governance more than ambition. Teams should define who approves changes, which environments agents can access, and which pages are excluded. The central question isn't whether the model can act. It's whether the organization can verify what it changed and why.
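Answering "what changed and why" is easier when every agent edit produces an audit record. A minimal sketch, assuming a hypothetical record schema and exclusion list: excluded pages are refused outright, and each record carries the before and after values, a rationale, an approver, and a line-level diff from the standard library's `difflib`.

```python
import difflib
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical policy: pages agents may never edit, regardless of approval.
EXCLUDED_PAGES = {"https://example.com/legal/terms"}

@dataclass(frozen=True)
class ChangeRecord:
    """One audit entry: what the agent changed, on which page, why, and who approved it."""
    url: str
    field_name: str
    before: str
    after: str
    rationale: str
    approved_by: str
    recorded_at: str

    def diff(self) -> str:
        """Line-level diff of the change, readable in a review queue."""
        return "\n".join(difflib.unified_diff(
            self.before.splitlines(), self.after.splitlines(),
            fromfile="before", tofile="after", lineterm=""))

def record_change(url: str, field_name: str, before: str, after: str,
                  rationale: str, approved_by: str) -> ChangeRecord:
    """Refuse excluded pages; otherwise return a timestamped record for the audit log."""
    if url in EXCLUDED_PAGES:
        raise PermissionError(f"{url} is excluded from agent edits")
    return ChangeRecord(url, field_name, before, after, rationale, approved_by,
                        datetime.now(timezone.utc).isoformat())

rec = record_change("https://example.com/pricing", "title",
                    "Pricing | Example", "Plans and Pricing | Example",
                    "Align title with primary query intent", "seo-lead@example.com")
print(rec.diff())
```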

Phase two operationalizes technical autonomy

The largest enterprise benefit appears when agents move into technical remediation with controlled deployment paths.

According to ALM Corp's review of advanced AI SEO agents, tools such as Alli AI can perform autonomous technical audits and deploy fixes, with reported 40–60% reductions in crawl errors and 15–30% improvements in Core Web Vitals within 4–8 weeks. That matters because developer queues are often the hidden constraint in enterprise SEO, especially across large CMS estates and approval-heavy release cycles.

Technical autonomy doesn't mean unrestricted autonomy. It means the agent can diagnose, prepare, and in some cases apply fixes through live editors or code snippets while human operators retain policy control.

A phased operational model works well:

Controlled audit mode: The agent only detects and recommends.

Approved deployment mode: The agent can push pre-approved classes of fixes.

Exception handling mode: High-risk templates, regulated pages, and sensitive content stay manual.
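The three modes can be encoded as a small routing policy. In this sketch the fix classes and template names are hypothetical placeholders; the point is the precedence order, in which exception handling overrides every mode and anything outside the pre-approved classes falls back to human review.

```python
from enum import Enum

class Mode(Enum):
    AUDIT = "controlled audit"          # agent only detects and recommends
    APPROVED = "approved deployment"    # agent may push pre-approved fix classes

# Hypothetical policy values; real lists would come from the governance owner.
PRE_APPROVED_FIXES = {"meta_description", "image_alt_text", "broken_internal_link"}
MANUAL_TEMPLATES = {"pricing", "regulated_disclosure"}  # exception handling: always manual

def route_fix(mode: Mode, fix_class: str, template: str) -> str:
    """Return 'human_review', 'recommend_only', or 'auto_deploy' for one proposed fix."""
    if template in MANUAL_TEMPLATES:
        return "human_review"      # exception handling overrides every mode
    if mode is Mode.AUDIT:
        return "recommend_only"
    if fix_class in PRE_APPROVED_FIXES:
        return "auto_deploy"
    return "human_review"          # anything outside the approved classes waits for a person
```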

Governance isn't friction. Governance is what makes automation durable inside enterprise systems.

Phase three moves from tooling to governance

Most adoption programs stall when they focus on software selection and ignore operating model design. The technology isn't the hard part. The hard part is assigning accountability across SEO, content, engineering, legal, and analytics.

A mature roadmap includes three policy layers:

| Policy layer | Enterprise requirement |
|---|---|
| Access control | Limit CMS, analytics, and code permissions by workflow type |
| Review model | Separate auto-approved actions from mandatory human review |
| Measurement model | Track output quality, remediation speed, and visibility impact |

The strongest programs also formalize prompt libraries, brand rules, source handling standards, and rollback procedures. Without those controls, an organization doesn't have an agent program. It has a scaling risk.
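Rollback procedures are easier to trust when they are mechanical. The sketch below assumes a change log in which each entry records the batch, page, field, and before and after values, plus a current-state map keyed by (page, field); all names are hypothetical. It reverts one batch newest-first and refuses to overwrite any field that someone has edited since the agent's change.

```python
from typing import Dict, List, NamedTuple, Tuple

class Change(NamedTuple):
    batch_id: str
    url: str
    field: str
    before: str
    after: str

Key = Tuple[str, str]  # (url, field)

def rollback(state: Dict[Key, str], log: List[Change], batch_id: str) -> List[Key]:
    """Revert one batch newest-first. A field whose current value no longer matches
    what the agent wrote was edited since, so it is skipped and reported instead."""
    conflicts: List[Key] = []
    for change in reversed([c for c in log if c.batch_id == batch_id]):
        key = (change.url, change.field)
        if state.get(key) != change.after:
            conflicts.append(key)
            continue
        state[key] = change.before
    return conflicts

state = {("/a", "title"): "New A", ("/b", "title"): "Edited by an editor"}
log = [
    Change("batch-7", "/a", "title", "Old A", "New A"),
    Change("batch-7", "/b", "title", "Old B", "New B"),
]
print(rollback(state, log, "batch-7"))  # [('/b', 'title')]
print(state[("/a", "title")])           # Old A
```

Skipping rather than overwriting is the conservative choice: a rollback should not destroy later human work, so conflicts are surfaced for a person to resolve.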

A fourth phase, cross-channel orchestration, is where many teams think they begin. It isn't. Cross-channel orchestration only works after teams have proven reliability, auditability, and role clarity on contained workflows.

AI Agents vs. Managed GEO Services: A Side-by-Side Analysis

The decision depends on the problem being solved

AI agents are the right answer when the organization needs faster execution against known tasks. A managed GEO service is the right answer when the organization needs strategic adaptation to AI-native discovery environments.

That distinction becomes more important as visibility shifts from rankings alone to inclusion inside synthesized answers. Agents are strong when the goal is operational scale. They are weaker when the goal is discovering which prompts, entities, narrative patterns, and source structures influence citation behavior across multiple LLM ecosystems.

For teams evaluating external support models, this perspective on choosing an LLM SEO agency is useful because it separates tooling capability from strategic accountability.

Comparison of AI Agents vs. Managed GEO Service

| Criterion | DIY AI Agents | Managed GEO Service (Algomizer) |
|---|---|---|
| Strategic oversight | Internal team must define objectives and interpret model shifts | External partner owns strategy adaptation and execution direction |
| Accountability | Tool outputs are only as good as internal supervision | Service model is accountable for ongoing visibility outcomes |
| Speed to task completion | High for repetitive workflows | High for execution plus strategy changes |
| Adaptability to model updates | Depends on vendor releases and internal monitoring | Managed continuously as platforms and answer patterns evolve |
| Technical execution | Strong for audits, metadata, internal links, and CMS-connected changes | Strong when paired with strategic prioritization and broader authority work |
| Authority engineering | Limited to what the workflow has been told to optimize | Built around citation, recall, entity shaping, and AI answer inclusion |
| Best fit | Mature internal teams with clear SEO operations | Leaders who need enterprise-scale AI visibility without building the full stack internally |

The strategic gap isn't intelligence in the abstract. It's ownership of the objective. AI agents don't independently decide how a legal brand should present expertise across search, model citations, and entity relationships. They execute against provided logic. A managed GEO function exists to generate, test, and refine that logic continuously.

That is why the choice isn't binary. Many enterprises will use both. The agent layer handles throughput. The managed layer handles adaptation, signal design, and cross-platform visibility engineering.

The New Mandate For AI-Native Discovery

Discovery now depends on machine legibility

The center of gravity has shifted. Search visibility no longer ends at ranking pages on Google. Brands now need content systems, technical structures, and authority signals that machines can parse, compare, and cite across answer engines.

That change alters what good SEO looks like. A page isn't only competing for clicks. It is competing to become a trusted retrieval object inside a synthesis engine. The implication is severe for enterprise teams. Publishing more content isn't enough. Machines need structured clarity, semantic consistency, and evidence patterns they can reliably interpret.

Organizations exploring their own discovery surfaces should also examine what a custom search engine for a website reveals about query intent, retrieval behavior, and the growing overlap between site search and AI-assisted navigation.

Execution software still needs strategic ownership

AI agents for SEO are now part of the modern search stack. That conclusion is settled. They automate repetitive work, increase throughput, and improve consistency. Used correctly, they give lean teams an advantage across content, on-page optimization, and technical remediation.

They are still tools. They don't establish authority by themselves. They don't decide which evidence clusters should define a brand in LLM outputs. They don't own the political work of aligning marketing, technical SEO, analytics, editorial governance, and competitive response into one coherent AI visibility system.

The new mandate is sharper than legacy SEO ever required. Brands must engineer discovery across both search engines and language models. That requires execution software, but it also requires a strategic function that understands how machine reasoning, retrieval, and citation work.

The brands that win won't be the ones with the most automation. They'll be the ones with the clearest strategy for turning automation into machine-recognized authority.

Algomizer helps brands win visibility inside AI-generated answers across ChatGPT, Claude, Gemini, and Perplexity with a fully managed GEO approach that goes beyond tooling. Teams that need a strategic visibility assessment, not another dashboard, can book a call with Algomizer.