10 Best Copy.ai Alternatives for 2026

Searching for Copy.ai alternatives? Our research analyzes 10 top tools for enterprise, marketing, and GEO use cases based on how AI models actually work.

Executive summary

AI writing platforms no longer compete on speed alone. They compete on output control. Through the Algomizer lens of Output Philosophy, the useful question is not which tool writes more templates. The useful question is which system preserves the right constraint under production pressure: brand governance, conversion performance, or Generative Engine Optimization.

That framing changes how Copy.ai alternatives should be evaluated. A platform built for governance will prioritize consistency, approvals, and policy compliance. A platform built for conversion optimization will treat copy as a probabilistic performance asset and score variants before distribution. A platform built for GEO will structure content for retrieval, citation, and answer-surface visibility in AI systems. Teams trying to rank in ChatGPT results with GEO-focused content systems need that third category in particular.

Copy.ai still functions as a broad baseline, but the category has matured past general-purpose drafting. The stronger alternatives now differentiate at the systems layer: workflow controls, optimization logic, retrieval alignment, and content operating model fit. That is also why market-facing comparisons often flatten meaningful differences. A procurement team choosing among Jasper, Anyword, Writer, and Frase is not selecting among similar generators. It is selecting among competing theories of how machine-written language should behave inside a business.

This chapter uses that distinction to evaluate ten alternatives by strategic fit rather than feature volume. Jasper, for example, is widely positioned as a premium brand-content platform, and the Flaex.ai Jasper page reflects that market posture. The underlying selection logic is straightforward. If your primary failure mode is off-brand output, choose governance. If your failure mode is weak conversion lift, choose predictive optimization. If your failure mode is invisibility in AI answer engines, choose GEO-native architecture.

Template count and interface polish matter less than Semantic Density under revision, stakeholder load, and channel expansion.

That is the standard used throughout this chapter.
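The selection logic above can be written down as a simple lookup. This is only a minimal sketch of the rule as stated in this summary; the names `FailureMode`, `CATEGORY_BY_FAILURE_MODE`, and `recommend_category` are hypothetical and do not come from any vendor or from Algomizer tooling.

```python
from enum import Enum


class FailureMode(Enum):
    """Dominant failure modes named in the executive summary."""
    OFF_BRAND_OUTPUT = "off-brand output"
    WEAK_CONVERSION_LIFT = "weak conversion lift"
    INVISIBLE_IN_AI_ANSWERS = "invisibility in AI answer engines"


# Hypothetical lookup table: one platform category per failure mode,
# copied from the selection rule in the text above.
CATEGORY_BY_FAILURE_MODE = {
    FailureMode.OFF_BRAND_OUTPUT: "governance",
    FailureMode.WEAK_CONVERSION_LIFT: "predictive optimization",
    FailureMode.INVISIBLE_IN_AI_ANSWERS: "GEO-native architecture",
}


def recommend_category(mode: FailureMode) -> str:
    """Return the platform category that targets the given failure mode."""
    return CATEGORY_BY_FAILURE_MODE[mode]


if __name__ == "__main__":
    for mode in FailureMode:
        print(f"{mode.value} -> {recommend_category(mode)}")
```

The point of the sketch is that the decision starts from one dominant constraint, not from a feature checklist. A team with several failure modes would still need to rank them before using a rule like this.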

Table of Contents

1. Jasper

Jasper is a governance engine first

2. Anyword

Anyword is optimized for pre-launch message selection

3. Writesonic

Writesonic is engineered for retrieval performance

4. Writer Enterprise

Writer is optimized for low-variance generation

5. Hypotenuse

Hypotenuse is tuned for catalog-scale content systems

6. Rytr

Rytr is optimized for drafting velocity, not operational maturity

7. Scalenut

Scalenut is built for retrieval-oriented content operations

8. Frase

Frase prioritizes research-conditioned output

9. Surfer

Surfer is built for optimization discipline, not writing breadth

10. Content at Scale

Content at Scale is built for industrial long-form throughput

Top 10 Copy.ai Alternatives Comparison

Final Thoughts

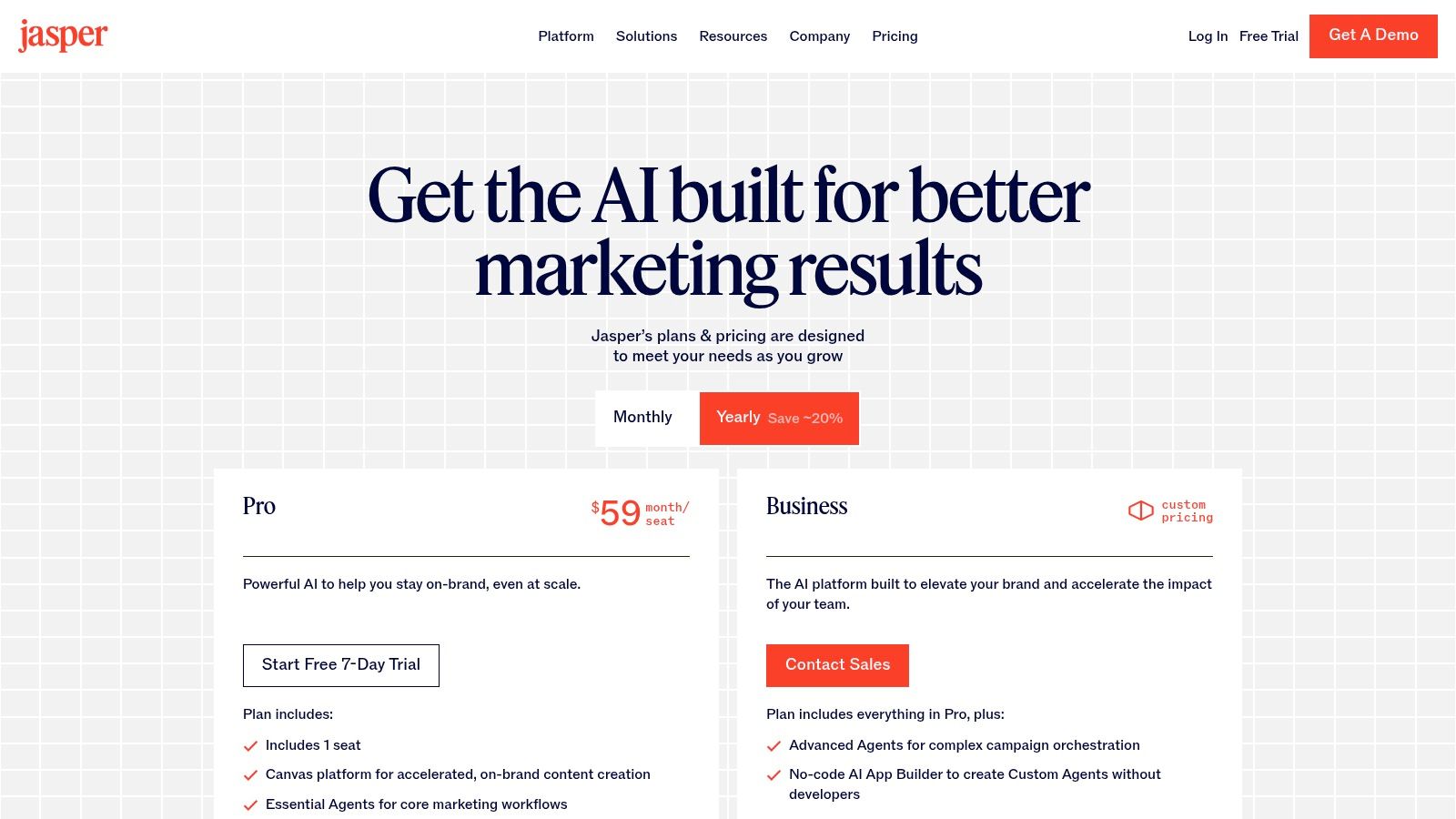

1. Jasper

Jasper is what teams buy after they learn that faster generation does not fix inconsistent messaging. Through the Algomizer lens, its Output Philosophy is brand governance. The system is designed to compress variance across writers, campaigns, and approval chains.

That design choice puts Jasper in a different class from lightweight prompt tools. Copy.ai is often selected for drafting speed. Jasper is selected when a company needs controlled output at scale, especially across large teams where review load becomes the hidden cost center.

Jasper is a governance engine first

A technical buyer should read Jasper as a policy layer wrapped around generation. Brand Voice, Knowledge assets, Canvas, and Agents all serve the same function. They reduce the probability of off-brand text before a human reviewer sees it. In Algomizer terms, Jasper optimizes Semantic Density under constraint. It tries to keep output information-rich without letting every contributor redefine tone, claims, or terminology.

That matters in multi-region marketing orgs, regulated categories, and any environment with several approvers in the loop. The tool is less compelling for a solo operator chasing low-cost first drafts. Its value increases when the primary bottleneck is governance overhead.

Jasper also has a secondary GEO implication. Governed content usually produces cleaner entity consistency, more stable terminology, and fewer contradictory claims across pages. Those traits improve retrieval signatures for AI systems that synthesize answers from multiple sources. The underlying mechanics are explained in this guide on how to rank in ChatGPT.

Best fit: Multi-stakeholder marketing teams standardizing brand output

Primary strength: Brand-governed workflows that reduce editorial variance

Tradeoff: Enterprise-oriented packaging adds process and cost compared with lighter tools

Use Jasper if reviewer correction is consuming more time than idea generation. Buyers comparing plans can check Jasper’s pricing page, and the Flaex.ai Jasper page offers a quick product snapshot.

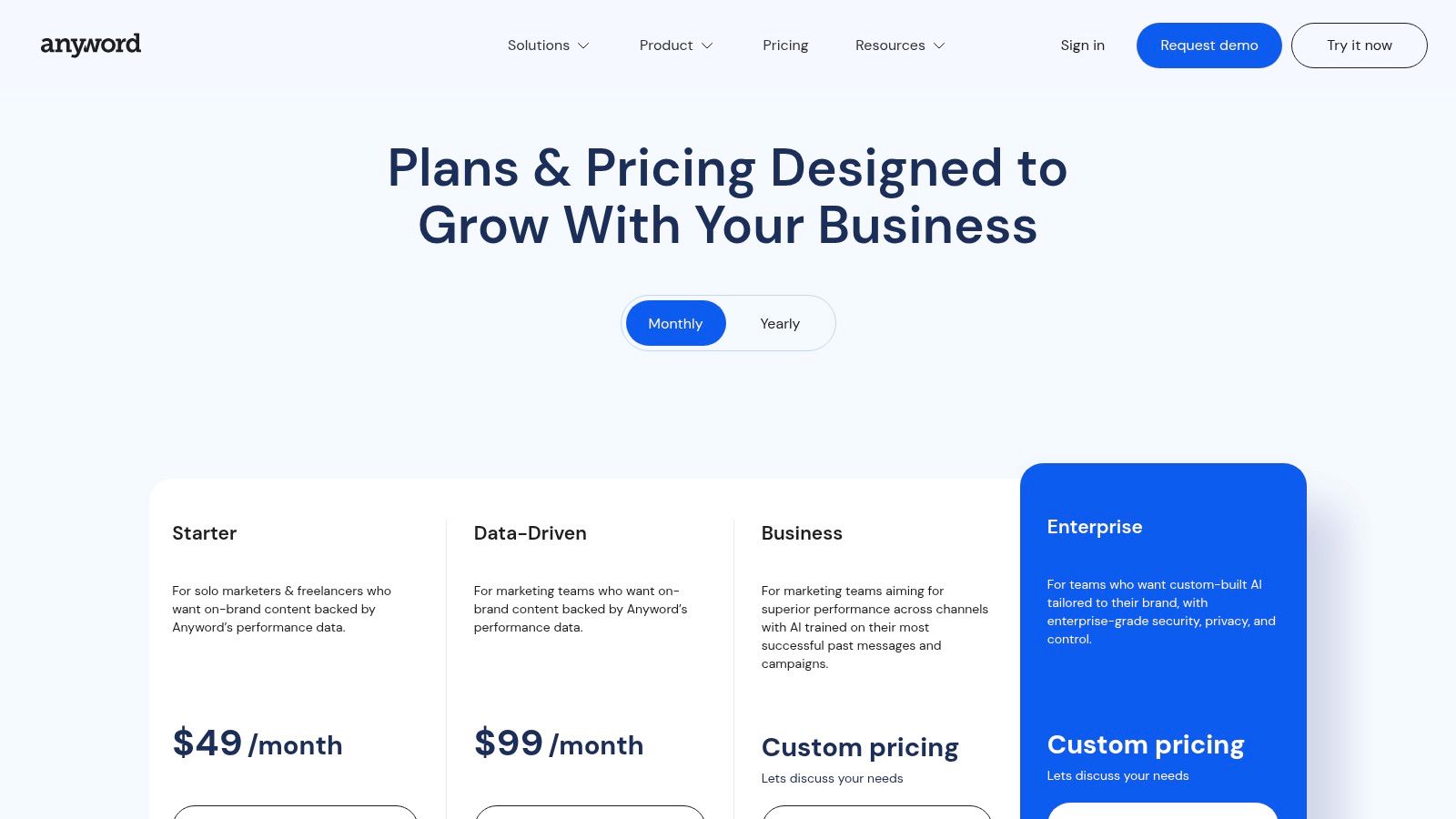

2. Anyword

Anyword sits in a narrower category than Copy.ai, and that is its advantage.

Through the Algomizer lens, Anyword’s output philosophy is conversion optimization. The product is built around a simple systems assumption. The highest-value copy asset is not the longest draft or the fastest draft. It is the variant with the strongest expected response from a defined audience segment.

That design choice changes how the tool should be evaluated. Copy.ai is often judged on ideation range and speed. Anyword should be judged on whether its scoring layer improves decision quality before budget is committed to ads, email sends, or landing-page tests. In model terms, it adds a prediction layer on top of generation. That makes it less of a writing assistant and more of a message selection engine.

Anyword differentiates through predictive performance scoring and audience-aware variant testing, and its pricing is structured to charge more for forecasting and data-driven features than for baseline text generation. That commercial logic matters. It signals where the vendor believes durable value resides.

Anyword is optimized for pre-launch message selection

The strongest use cases are paid acquisition, lifecycle marketing, and conversion-focused page testing. In those environments, the core problem is not producing another draft. The core problem is choosing which claim, angle, or CTA deserves exposure. Anyword addresses that problem directly by generating multiple versions, scoring them in context, and keeping brand inputs in the loop.

From an AI systems perspective, this creates a distinct operating profile. The platform reduces editorial randomness by constraining generation with performance priors. Algomizer classifies that as lower variance, higher decision utility output. The copy may be less surprising than what a pure ideation tool produces, but it is better aligned to channels where small wording changes affect spend efficiency.

One conclusion follows. Anyword is undervalued if a team treats it like a blog writer.

It is strongest where message failure has an immediate cost. Paid social teams, performance marketers, and operators responsible for landing-page yield get more from its scoring architecture than content teams measuring success by draft volume alone.

Three product characteristics reinforce that position:

Predictive scoring: The interface gives immediate feedback as copy changes, which shifts editing toward performance likelihood rather than stylistic preference.

Audience conditioning: Variants are shaped around segment-specific intent instead of a single generic prompt output.

Governed optimization: Brand rules remain active while the system tests for stronger conversion language.
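The generate, score, and select loop described in this section can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not Anyword's API or scoring model: `select_variant` and `toy_score` are hypothetical names, and the scoring heuristic is a deliberately crude placeholder standing in for a real predictive model.

```python
from typing import Callable, Sequence


def select_variant(
    variants: Sequence[str], score: Callable[[str], float]
) -> tuple[str, float]:
    """Score every variant and return the best one with its score."""
    if not variants:
        raise ValueError("at least one variant is required")
    scored = [(score(text), text) for text in variants]
    best_score, best_text = max(scored, key=lambda pair: pair[0])
    return best_text, best_score


def toy_score(text: str) -> float:
    """Placeholder heuristic (an assumption, not a real performance model).

    Rewards action verbs and penalizes copy longer than eight words.
    """
    words = text.lower().split()
    action_terms = {"start", "try", "get"}
    hits = sum(1 for word in words if word in action_terms)
    length_penalty = 0.05 * max(0, len(words) - 8)
    return hits - length_penalty


if __name__ == "__main__":
    candidates = [
        "Start your free trial today",
        "Learn more about our platform",
        "Our platform has many features for teams of every size and shape",
    ]
    best, best_score = select_variant(candidates, toy_score)
    print(best, best_score)
```

In a production system the scoring function would be the expensive, differentiated part. The selection step itself stays this simple, which is why the value sits in the prediction layer rather than in generation.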

The current packaging is listed on Anyword’s pricing page. For buyers comparing Copy.ai alternatives through the Algomizer framework, Anyword is the clearest example of a tool engineered for conversion optimization first, with generation serving the scoring system rather than the reverse.

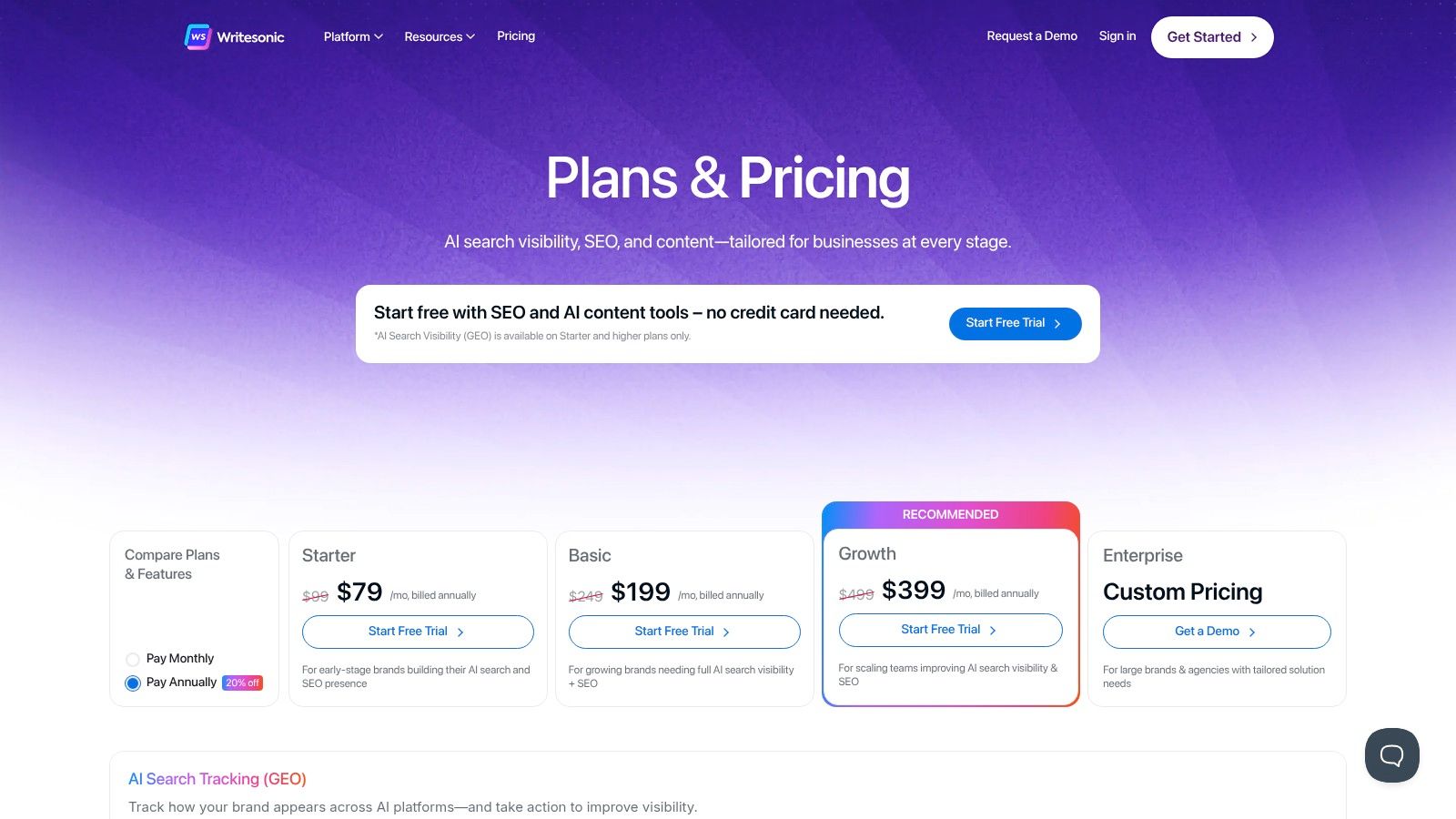

3. Writesonic

Writesonic is not the best Copy.ai alternative for every team. It is the one with the clearest thesis about where the category is going. Through the Algomizer lens, its output philosophy is Generative Engine Optimization first, with content generation acting as the production layer around that strategy.

That distinction matters. Some platforms are built to protect brand consistency. Others are built to increase conversion rates at the message level. Writesonic is built to improve retrieval presence across both search results and AI answer interfaces. Its product logic points toward query coverage, entity relevance, and answer-surface visibility, not just faster draft generation.

Algomizer classifies this as a high Semantic Density system. The platform combines writing, optimization, site auditing, and visibility tracking in one workflow, which reduces the gap between producing content and testing whether that content is being surfaced. For teams studying how large language model optimization changes content strategy, that integrated loop is its key differentiator.

Writesonic is engineered for retrieval performance

Writesonic’s value comes from stack compression. A marketing team can generate an article, refine it against search-oriented constraints, audit existing pages, and monitor AI visibility from the same operating surface. That creates a tighter feedback cycle than the classic toolchain of writer, SEO platform, spreadsheet, and manual prompt testing.

The non-obvious advantage is operational. Once SEO and GEO live inside the same system, content decisions shift from volume targets to surface-area management. Teams stop asking whether they published enough and start asking whether they covered the right entities, intent patterns, and answer formats.

Three product traits define that profile:

Integrated production and optimization: Drafting and search tuning happen in the same environment, which lowers coordination cost.

AI visibility orientation: The platform is designed around whether content appears across emerging answer surfaces, not only conventional rankings.

Broad execution range: It supports blog workflows, landing-page support, and e-commerce content, which makes it more useful for multi-format teams than a pure copy generator.

The tradeoff is complexity. A tool that spans writing, optimization, and visibility monitoring asks buyers to evaluate more than output quality alone. Procurement has to judge workflow fit, reporting needs, and whether the team will use the added retrieval-layer features.

The current plans and packaging are shown on Writesonic’s pricing page. Among Copy.ai alternatives, Writesonic stands out as a platform engineered to manage discoverability as a system, not just produce text on demand.

4. Writer Enterprise

The common mistake in this category is treating enterprise AI as scaled-up copy generation. Writer is engineered around a different premise. Output must be governable before it is useful.

Through the Algomizer lens, Writer’s output philosophy is brand governance. That sounds narrower than conversion optimization or GEO, but it changes the entire system design. The product is built to constrain variance, document provenance, and route generation through policy layers that legal, compliance, and security teams can inspect.

That architecture matters because enterprise content failure rarely starts with bad prose. It starts with uncontrolled retrieval, inconsistent terminology, and weak approval logic. Writer addresses those failure modes directly with grounded generation, style enforcement, auditability, and administrative controls. Among Copy.ai alternatives, that makes it closer to a managed content operating system than a prompt-first writing tool.

Writer is optimized for low-variance generation

Writer fits organizations where every output carries institutional risk. Regulated industries are the clearest example, but the same logic applies to large B2B companies with dense product language, distributed teams, and strict review chains. In those environments, the highest-value feature is not speed. It is semantic control.

Its Knowledge Graph approach is especially important at the model layer. Grounding reduces drift by narrowing the system’s retrieval space to approved concepts, sources, and language patterns. That improves what we would call Semantic Density. More of the output is anchored to validated business meaning, and less is filled with statistically plausible but operationally risky filler.

A second-order effect shows up in search and answer-surface performance. Content that is traceable, terminology-stable, and citation-aware tends to create cleaner authority signals for downstream retrieval systems. The logic overlaps with LLMO methods for reducing retrieval ambiguity in AI-generated content.

Writer’s tradeoff is straightforward. It will feel restrictive to teams that prioritize campaign velocity, experimentation volume, or lightweight ideation. That is not a flaw in the product. It is a consequence of its design objective. Writer sacrifices some creative latitude to improve governance fidelity.

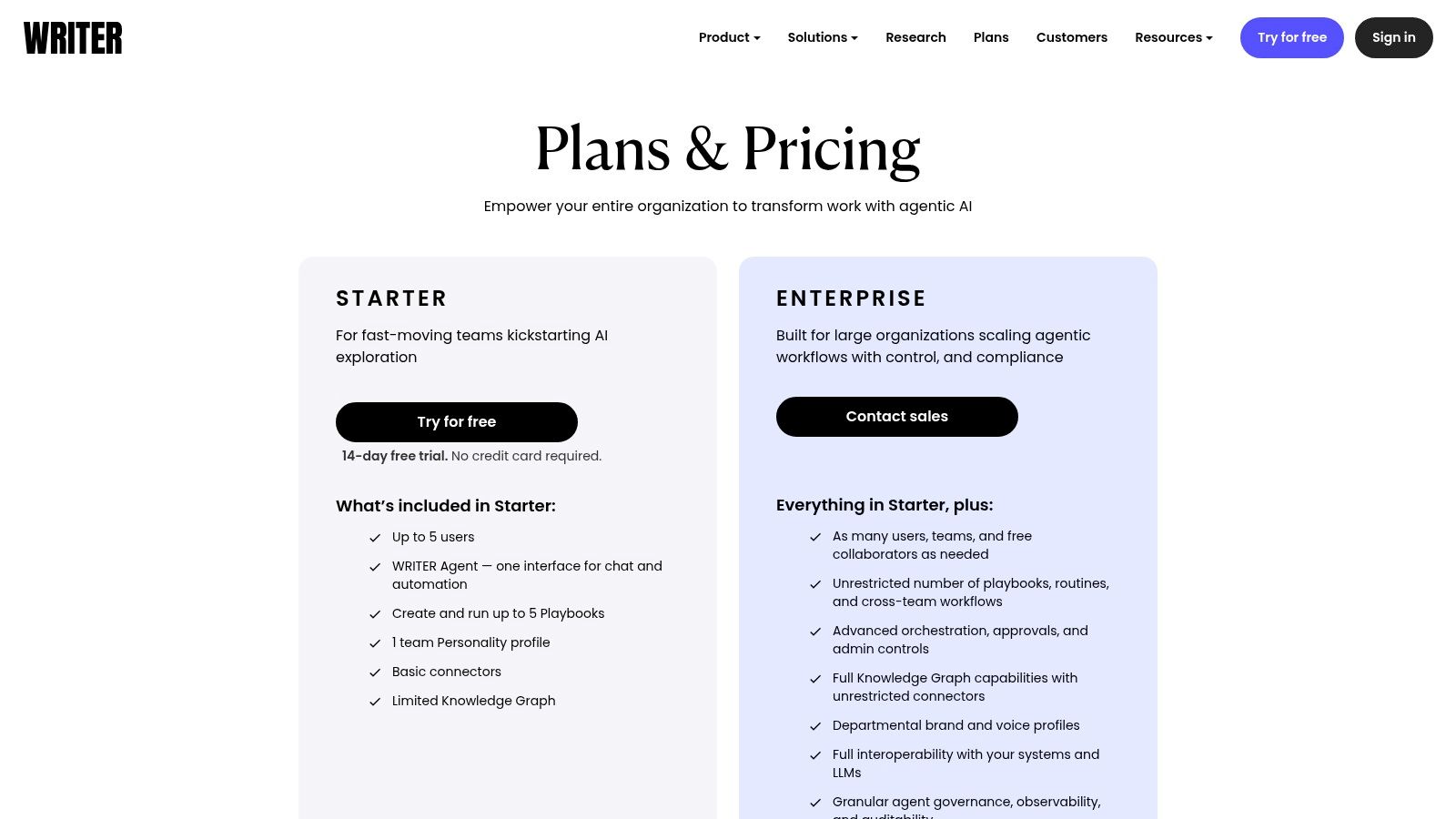

Pricing and packaging are outlined on Writer’s plans page. For buyers comparing Copy.ai alternatives through an Output Philosophy framework, Writer is the clear choice when procurement, risk, and brand consistency matter more than raw generation breadth.

5. Hypotenuse

Hypotenuse is not a generalist. It is a catalog-content machine shaped around product data, large inventories, and commerce workflows.

Its output philosophy is structured enrichment. The platform is strongest when source data already exists in PIM, ERP, storefront, or inventory systems and the marketing team needs to convert that structured input into product descriptions, landing assets, or SEO-supporting catalog content. That is a very different job from campaign copy.

This distinction matters because many Copy.ai alternatives underperform when they are forced into high-volume product operations. They can draft prose, but they can’t maintain consistency across thousands of item-level outputs without a strong data-handling layer.

Hypotenuse is tuned for catalog-scale content systems

Bulk generation and ecommerce integrations are the core. Brand voice controls matter here, but the primary technical value is repeatability. Teams with large product sets need outputs that map to attributes, variants, and multilingual requirements without introducing style drift on every page.

Hypotenuse fits retailers, marketplaces, and manufacturers better than broad writing suites do. It also supports blog workflows, but that isn’t the primary reason to buy it. The main reason is operational efficiency inside commerce content supply chains.

Best fit: Ecommerce teams with large product catalogs.

Primary strength: Bulk, structured, repeatable content generation.

Tradeoff: Many advanced commerce workflows sit higher in the pricing stack.

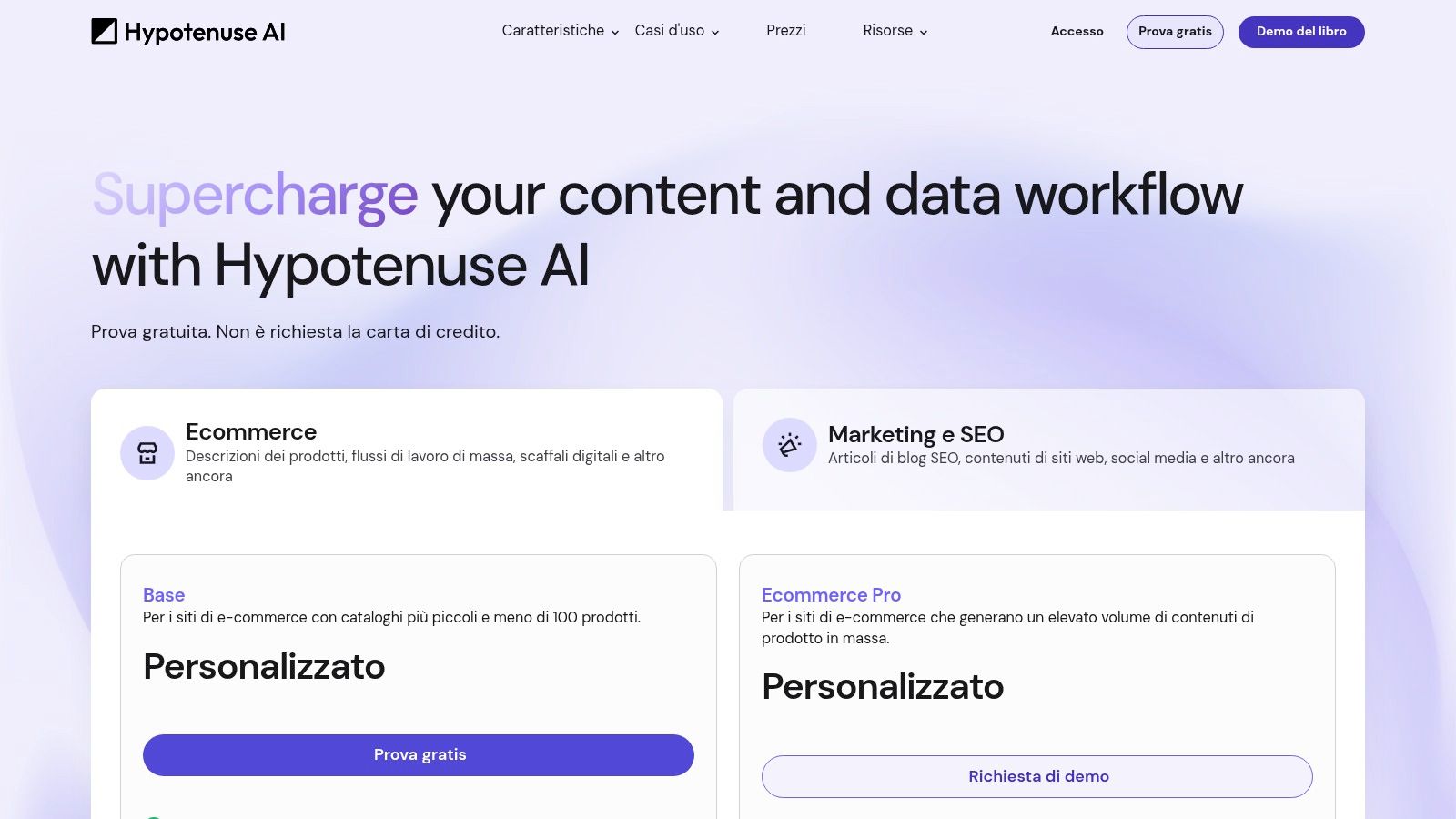

The product’s pricing and packaging are listed on Hypotenuse’s pricing page. Among Copy.ai alternatives, Hypotenuse is one of the clearest examples of specialization beating generic drafting breadth.

6. Rytr

Rytr is useful precisely because many teams overbuy AI writing software.

Through the Algomizer lens, Rytr’s Output Philosophy is prompt-level execution. It is built for fast text generation, not systematized content operations. That distinction matters. A tool optimized for low-latency drafting will usually trade away governance layers, workflow depth, and model conditioning controls that larger teams need.

Rytr performs best in short-form environments where speed has higher value than process integrity. Solo founders, freelancers, and small marketing teams often need emails, product snippets, ad copy, captions, or first-pass ideas in minutes. Rytr fits that use case because the interface is simple, the prompt structure is light, and the cognitive load is low.

The downside is architectural, not cosmetic. Budget-oriented tools in this class tend to lose ground when content moves into cross-functional review, CRM-connected execution, or multi-stakeholder brand control. Rytr can help produce copy. It does not function as a content control layer.

Rytr is optimized for drafting velocity, not operational maturity

That makes Rytr a legitimate Copy.ai alternative for a narrow buying profile. If the selection criteria center on affordability, ease of use, and fast short-form output, Rytr is rational. If the criteria shift toward brand governance, conversion experimentation, or GEO readiness, the platform’s limits appear quickly.

In Semantic Density terms, Rytr is tuned for breadth across common formats rather than depth within any one strategic workflow. You get many templates and quick iteration. You do not get the higher-order infrastructure that turns generated text into a governed asset class across teams and channels.

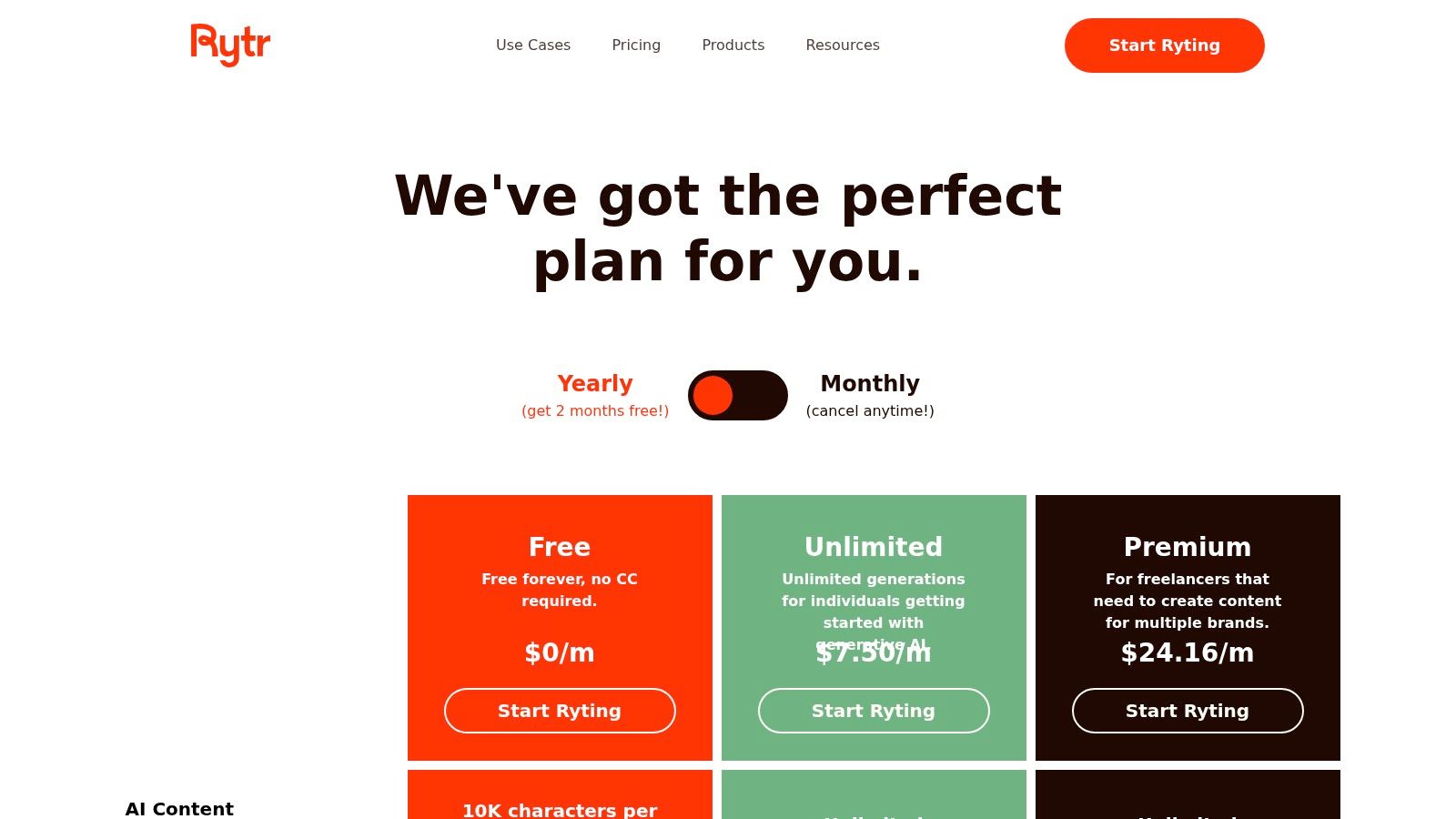

Its current plans are listed on Rytr’s pricing page. Among Copy.ai alternatives, Rytr sits in the utility tier. It is best treated as a low-cost drafting assistant for short-form execution, not as a future-facing content system.

7. Scalenut

Scalenut is more important than its market share suggests. Through the Algomizer lens, its Output Philosophy is not brand governance or generic copy generation. It is discoverability engineering. That places it in a different strategic bucket from many Copy.ai alternatives.

The distinction matters at the model-workflow level. Scalenut extends beyond drafting into keyword clustering, prompt discovery, optimization, internal linking, publishing, and AI visibility tracking. In Semantic Density terms, that means the product is designed to improve how content is structured for retrieval and resurfacing, not just how quickly text is produced.

Scalenut is built for retrieval-oriented content operations

That makes Scalenut one of the clearer GEO-facing options in this category. Its product logic assumes a new evaluation standard. Content now needs to perform in two systems at once: traditional search indexing and AI-mediated answer generation. Tools built only for surface-level copy variation usually underperform once that requirement appears.

Scalenut is trying to operationalize that shift. GEO creation, prompt tracking, clustering, internal linking, and auto-publish support point to a workflow centered on recall, citation likelihood, and topic coverage. From an AI systems perspective, that is a meaningful difference. The platform behaves less like a writing assistant and more like an optimization layer for search and answer surfaces.

Best fit: SEO and content teams measuring success by search visibility and AI-answer inclusion.

Primary strength: A product architecture aligned with GEO-style content workflows.

Tradeoff: Plan limits and packaging can make the value equation less obvious at first glance.

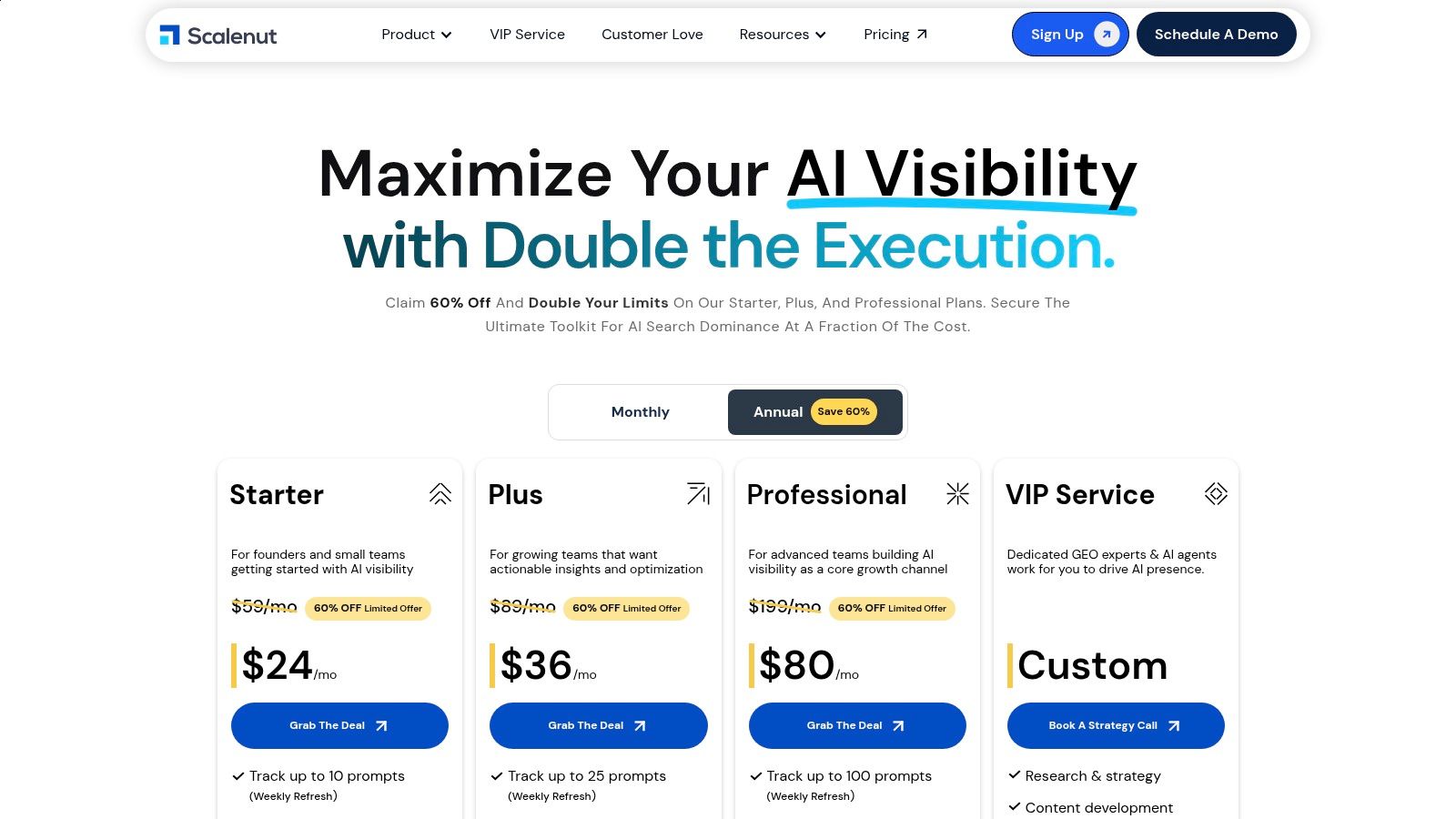

The platform’s current commercial options appear on Scalenut’s pricing page. Among Copy.ai alternatives, Scalenut stands out for one reason. It has a defined theory of how content gets found.

8. Frase

Frase matters for a different reason than many Copy.ai alternatives. Its value is not raw text generation volume. Through the Algomizer lens, its output philosophy is retrieval preparation. The product is designed to help teams build content from search evidence outward, which makes it more relevant for GEO workflows than generic copy tools that start with drafting and stop there.

Frase is one of the clearer examples of a platform tying SEO research to AI-era content execution. That distinction shows up at the workflow level. SERP analysis, competitor summaries, content briefs, optimization guidance, and AI-assisted drafting all sit inside the same system. From an AI systems perspective, that reduces context loss between research and production, which usually improves Semantic Density and topical alignment.

Frase prioritizes research-conditioned output

That is the strategic case for Frase. It helps content teams convert search context into structured content decisions without adding the governance overhead of an enterprise writing stack.

Its AI Agent, content brief generation, optimization tools, and visibility features make the platform a strong fit for operators managing topic clusters, editorial throughput, and answer-surface discoverability in one place. Frase is less about brand control than Writer Enterprise and less about pure scoring discipline than Surfer. Its differentiation is process compression. Research, drafting, and optimization happen in a compact loop that supports conversion-ready and retrieval-friendly output at the same time.

Operational takeaway: Frase fits teams that already know the categories they want to win and need a tighter system for producing high-coverage, search-informed content with clear GEO potential.

Its current offer is available on Frase’s pricing page. In strategic terms, Frase is one of the more credible options for teams choosing a tool based on Output Philosophy rather than headline feature count.

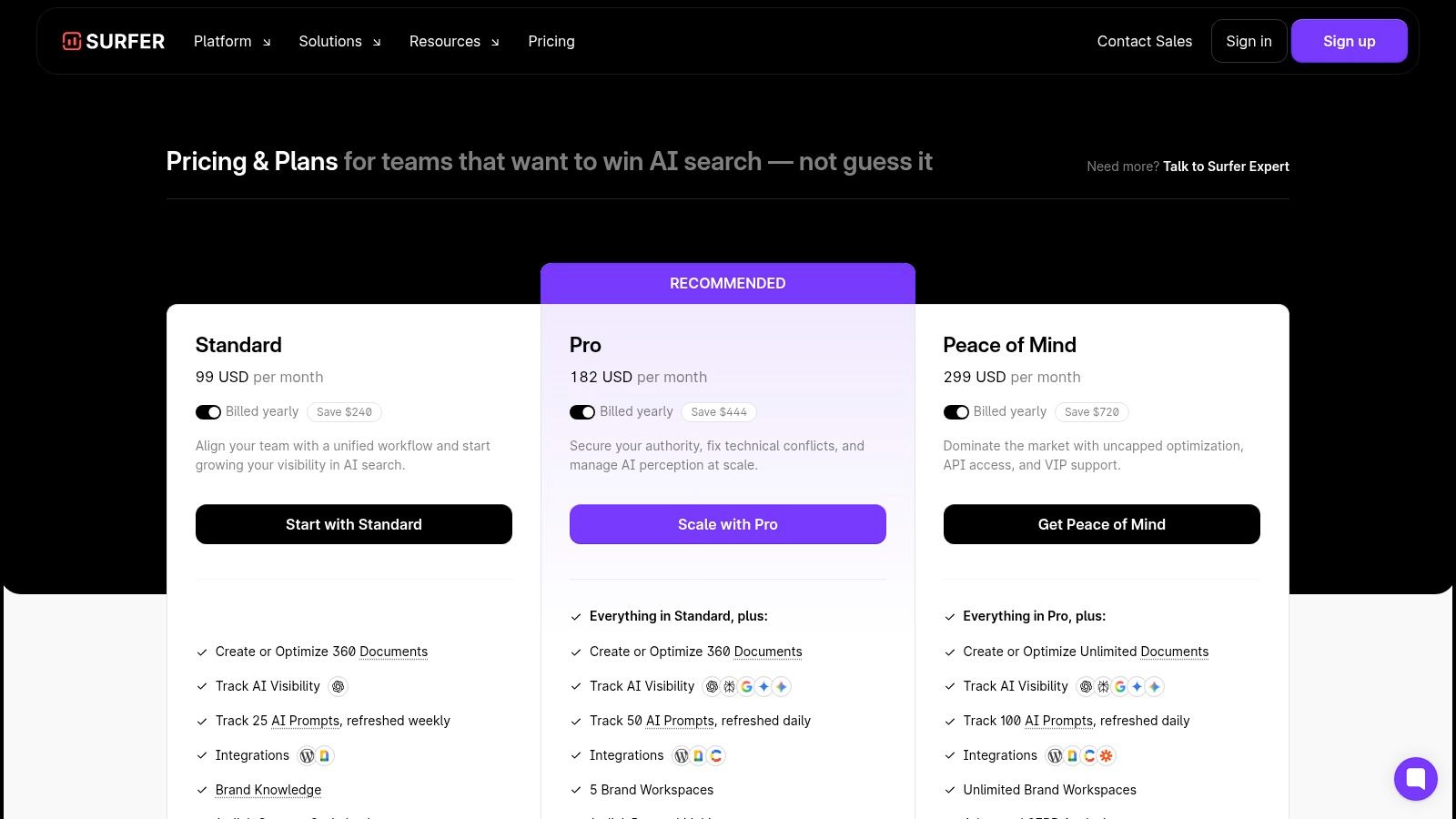

9. Surfer

Surfer is the clearest example of a tool whose value comes from constraint, not generation volume.

Through the Algomizer lens of Output Philosophy, Surfer is engineered for search-fit governance. Its core function is to score whether a draft matches the semantic structure, term distribution, and topic breadth already winning in the SERP. That makes it materially different from Copy.ai and from alternatives built around ideation or brand workflow. Surfer is a control system for relevance calibration.

That distinction matters because many content teams no longer fail at drafting. They fail at calibration. A page can read well and still underperform if its Semantic Density is thin, its entity coverage is incomplete, or its structure diverges from the retrieval patterns search systems reward. Surfer addresses that failure mode directly.

Surfer is built for optimization discipline, not writing breadth

Content Editor, topical mapping, cannibalization detection, and AI visibility monitoring give operators a repeatable way to evaluate whether content is aligned before and after publication. In practical terms, Surfer helps standardize editorial decisions across portfolios, which is difficult to do with a general-purpose writing assistant. Teams managing topic clusters often pair that workflow with a broader content hub strategy for SEO, where consistency across related pages matters as much as the quality of any single article.

Its Output Philosophy is not brand governance like Writer Enterprise, and it is not research compression like Frase. Surfer is conversion-adjacent only in the indirect sense that stronger retrieval alignment can raise qualified traffic. The product is built first for GEO and SEO operators who want measurable optimization controls, not for teams chasing stylistic flexibility.

That focus also explains the tradeoff. Surfer is easier to justify when a company already has writers, editors, or subject matter experts and needs a scoring layer around them. Teams looking for a broad creative assistant may find it narrow. Teams responsible for ranking performance usually see that narrowness as design discipline.

Best fit: SEO and GEO teams that need standardized optimization workflows across multiple pages or properties.

Primary strength: High-control environment for semantic coverage, content scoring, and visibility tracking.

Tradeoff: Lower utility for teams whose main problem is copy generation rather than retrieval alignment.

The live plans are published on Surfer’s pricing page. For buyers comparing Copy.ai alternatives by Output Philosophy instead of feature volume, Surfer remains one of the strongest choices for search-governed content operations.

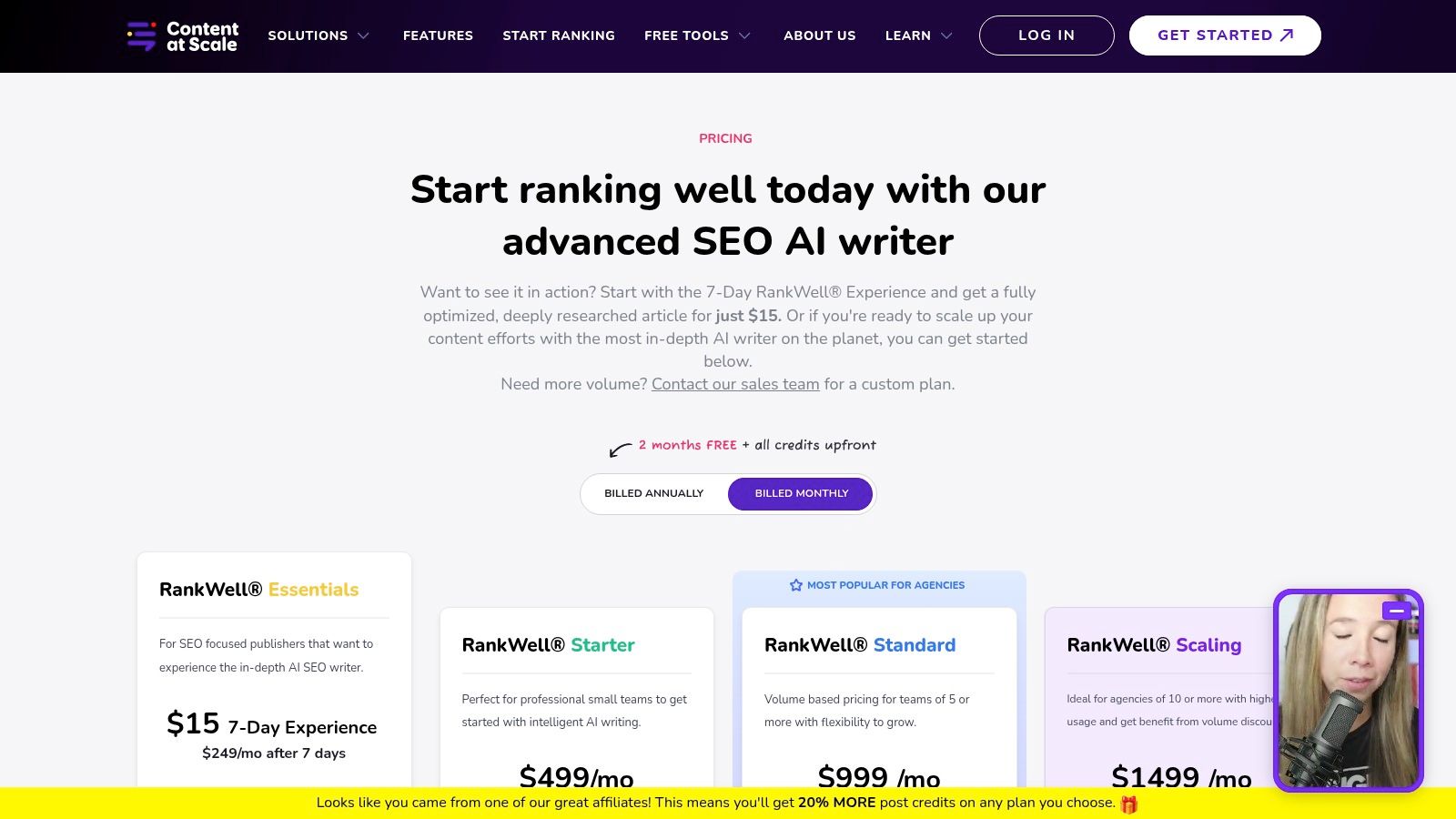

10. Content at Scale

Content at Scale is built for one thing: high-volume long-form production with integrated optimization and agency-friendly workflow layers.

Its output philosophy is throughput with post-processing control. The platform emphasizes research, outlining, drafting, optimization, and humanization in a single flow. That package isn’t ideal for every team, but it is clearly aimed at publishers, agencies, and enterprise content operations that need volume plus review surfaces.

This makes it different from tools that lead with short-form copy, ad generation, or conversion scoring. Content at Scale is primarily a long-form manufacturing environment.

Content at Scale is built for industrial long-form throughput

The strongest reason to consider it is process compression. Agencies and in-house editorial teams often juggle research tools, writing tools, optimization tools, and brand review steps in separate systems. Content at Scale tries to collapse those stages.

That is strategically useful when a team operates content hubs rather than isolated articles. Long-form authority compounds through internal linkage, structured topic ownership, and publishing rhythm. Those mechanics map closely to this framework for building content hubs for SEO.

The current offer is available on Content at Scale’s pricing page. Among Copy.ai alternatives, this is the option for buyers who care less about ad copy and more about industrialized editorial output.

Top 10 Copy.ai Alternatives Comparison

| Tool | Core / Key Features (✨) | Quality / Performance (★) | Value & Pricing (💰) | Target Audience (👥) | GEO/AEO & AI Visibility Strength (🏆) |
|---|---|---|---|---|---|
| Jasper | Brand voice + Canvas editor, Agents, enterprise security ✨ | ★★★★☆, strong governance | 💰 Mid–High; enterprise plans via sales | 👥 Marketing teams & enterprises | 🏆 Good content governance; limited native GEO focus |
| Anyword | Predictive scoring, real‑time benchmarks, data ingestion ✨ | ★★★★☆, conversion‑driven | 💰 Mid; unlimited gen but seats add cost | 👥 Performance marketers & ad teams | 🏆 Strong for conversion metrics; modest GEO overlap |
| Writesonic | Article writer, SEO tools, AI visibility tracking across models ✨ | ★★★★, broad toolset | 💰 Mid; plan complexity varies | 👥 SMBs & agencies needing content + GEO | 🏆 Includes GEO tracking; depth varies by plan |
| Writer (Enterprise) | Playbooks, Knowledge Graph, agentic workflows, Palmyra LLMs ✨ | ★★★★★, enterprise‑grade governance | 💰 High; sales engagement required | 👥 Large orgs, regulated industries | 🏆 Excellent governance; GEO features secondary |
| Hypotenuse | Bulk product descriptions, PIM/ERP integrations, multilingual ✨ | ★★★★, ecommerce focus | 💰 Mid; word‑based subscriptions | 👥 Retailers, marketplaces, catalogs | 🏆 Excellent for product content; limited AI‑answer tooling |
| Rytr | 40+ short‑form use cases, tone matching, low entry price ✨ | ★★★, solid for short copy | 💰 Low; budget‑friendly | 👥 Individuals & small teams | 🏆 Cost‑effective; minimal GEO/AEO capability |
| Scalenut | GEO content creation, AI visibility tracking, prompt discovery ✨ | ★★★★, GEO‑forward toolkit | 💰 Mid; good feature value but tiered | 👥 Teams optimizing AI answers & visibility | 🏆 Strong GEO roadmap; depth gated by tiers |
| Frase | AI Agent (80+ skills), SERP research, visibility tracking ✨ | ★★★★, research + tracking | 💰 Accessible; trial available | 👥 Content teams, SMBs | 🏆 Clear GEO/AEO emphasis at accessible price |
| Surfer | Content Editor, topical maps, AI Tracker for multiple models ✨ | ★★★★, mature optimization tools | 💰 Mid–High as seats/features scale | 👥 SEO teams & agencies | 🏆 Mature AI visibility modules; scales well |
| Content at Scale | Research→draft→optimize pipeline, undetectable/humanized output ✨ | ★★★★, high‑volume long‑form | 💰 High; agency/publisher pricing | 👥 Agencies, publishers, enterprises | 🏆 Excellent for scale long‑form; GEO not core focus |

Final Thoughts

The useful question is not which Copy.ai alternative produces the flashiest draft. The useful question is which system fails least under real operating constraints.

Through the Algomizer Output Philosophy lens, the market separates cleanly into seven engineering priorities. Jasper is tuned for brand governance. Anyword is tuned for conversion optimization. Writesonic, Scalenut, Frase, and Surfer are increasingly tuned for Generative Engine Optimization, with workflows shaped around retrieval structure, topical coverage, and AI-answer visibility. Writer is tuned for institutional control. Hypotenuse is tuned for catalog consistency. Rytr is tuned for low-friction execution. Content at Scale is tuned for throughput at volume.

That distinction matters because content teams no longer buy text generation in isolation. They buy variance reduction. A platform earns its place if it constrains the failure mode that matters most to the team using it. In regulated environments, that failure mode is governance drift. In performance marketing, it is conversion inefficiency. In GEO programs, it is retrieval failure. In ecommerce, it is catalog inconsistency across thousands of SKUs.

This is the deeper pattern behind the category. General-purpose writers looked strong when the problem was draft speed. They weaken as the problem shifts toward system fit. Once content must survive approval chains, preserve brand semantics, map to search intent, or appear inside AI-generated answers, model orchestration matters more than raw fluency. Semantic Density, prompt control, workflow memory, and policy enforcement start to separate tools that look similar in a homepage comparison.

Specialists are gaining ground for that reason. They compress uncertainty in a narrow but expensive layer of the workflow. That is why brand-led teams tend to move toward Jasper or Writer, performance-led teams toward Anyword, GEO-led teams toward Frase, Scalenut, Surfer, or Writesonic, catalog-heavy teams toward Hypotenuse, and high-volume publishing operations toward Content at Scale.

Price follows the same logic. Buyers resist premium pricing when a product only generates acceptable prose. They accept it when the system improves control, predictability, or discoverability. The spend decision is rarely about tokens. It is about whether the software reduces editorial rework, compliance risk, missed conversions, or invisible content.

The practical conclusion is straightforward. Do not replace Copy.ai with another writing tool in the abstract. Choose the platform whose output philosophy matches your dominant constraint. Teams that make that choice explicitly get a tighter content system, lower operational drift, and clearer ROI.

Algomizer helps brands win visibility inside AI-generated answers across ChatGPT, Claude, Gemini, Perplexity, and other LLMs. Teams that need more than copy generation can book a call with Algomizer to get a complimentary visibility assessment, retrieval-focused content strategy, and enterprise-ready GEO execution.